Culture Wars

The White of the AI

The essence of AI is not white oppression, racism, sexism, and colonialism; it is the automation of mathematics and logic.

“It is a truth little acknowledged that a machine in possession of intelligence must be white.” I do appreciate a Jane Austen reference, so I read on, hoping to find a stylish argument, only to be disappointed. The very next lines of the paper by Stephen Cave and Kanta Dihal in Philosophy & Technology read as follows:

> In this paper, we problematize the often unnoticed and unremarked-upon fact that intelligent machines are predominantly conceived and portrayed as White. We argue that this Whiteness both illuminates particularities of what (Anglophone Western) society hopes for and fears from these machines, and situates these affects within long-standing ideological structures that relate race and technology.

The paper is entitled “The Whiteness of AI,” and the authors explain that they will use “white” and “black” for colour and “White” and “Black” for race. They go on to argue that AI is White on the basis that images of AI have a lot of white in them. This Whiteness, the authors suggest, discourages non-Whites from getting into AI. They say their article aims to contribute to the “literature on race and technology by examining how the ideology of race shapes conceptions and portrayals of artificial intelligence (AI).” The approach of the authors “is grounded in the philosophy of race and critical race theory… and work in Whiteness studies.”

I wondered how long it would take for the inevitable remarks on “decolonising AI” to materialise. Sure enough, the authors get there in the end, but first they speak of a “white racial frame.” The aim of such discourse is to conduct “an interrogation to ‘make strange’ this Whiteness, de-normalising and drawing attention to it.” Expanding on this, they say: “One of the main aims of critical race theory in general, and Whiteness studies in particular, is to draw attention to the operation of Whiteness in Western culture. The power of Whiteness’s signs and symbols lies to a large extent in their going unnoticed and unquestioned, concealed by the myth of colour-blindness.” I remain unconvinced that there is a “myth of colour-blindness” to be worried about in AI. I am more worried by the infection of science and engineering by critiques of dubious merit from humanities scholars versed in “Critical Race Theory” and “Whiteness Studies.” Such critiques tend to be heavy on narrative anecdote and light on statistical data.

The authors lay out the structure of their argument. They present “current evidence for the assertion that conceptions and portrayals of AI—both embodied as robots and disembodied—are racialised, then evidence that such machines are predominantly racialised as White” and proceed to offer us their “readings of this Whiteness.” Notably, they stress that their methods are qualitative. They cite a Latino scholar who says: “Studying whiteness means working with evidence more interpretive than tangible; it requires imaginative analyses of language and satisfaction with identifying possible motivations of subjects, rather than definitive trajectories of innovation, production, and consumption.”

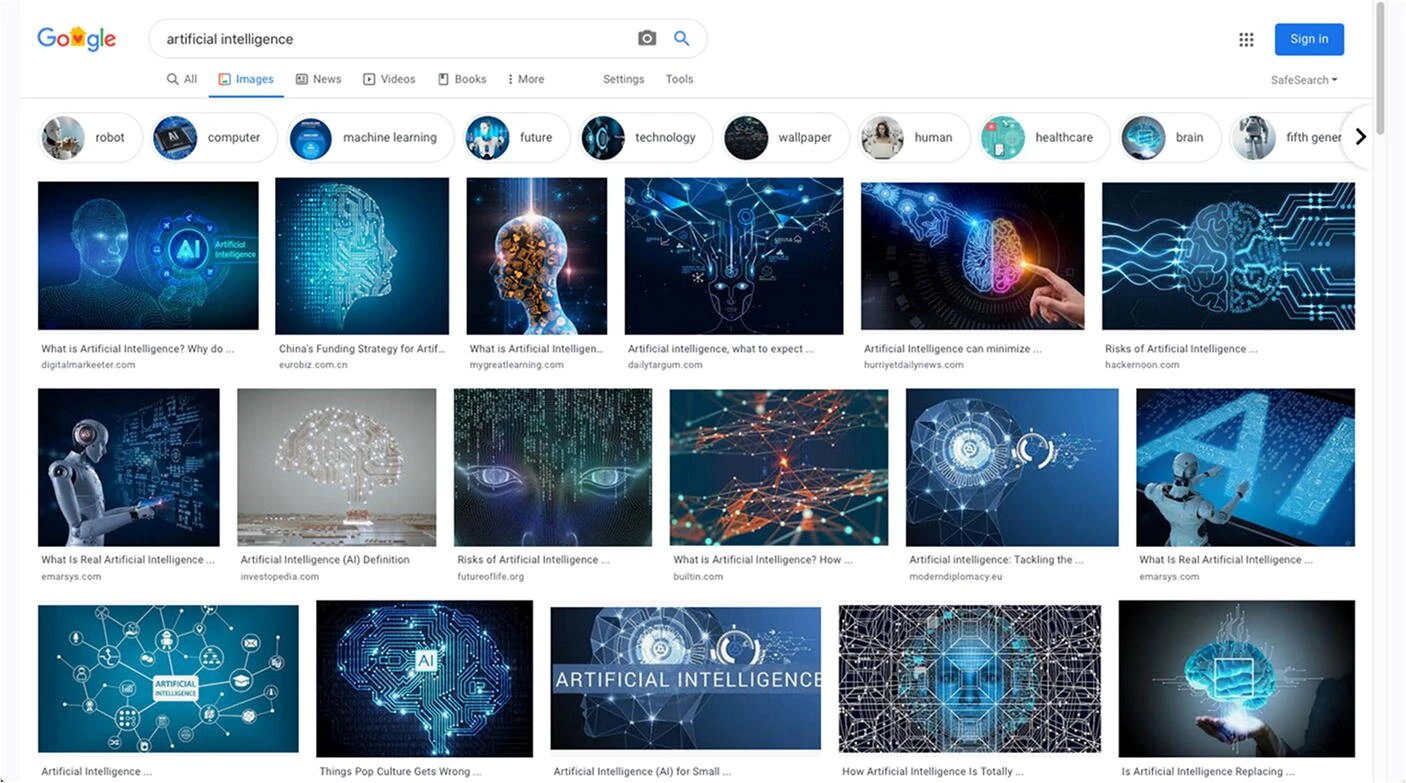

This is a convoluted way of saying: we the authors hereby give ourselves permission to go nuts with imagination and interpretation. They certainly do not want to attack AI by relying upon actual data, and the dataset they do offer in support of their argument is embarrassingly meagre. It consists of a mere 39 images: 35 images taken from Google, two book covers, one actual robot, and one actress digitally altered to look like a robot. The first 17 images come from a Google search using the string “artificial intelligence”:

What leaps out from this collection is not so much the Whiteness of AI as the blueness of the backdrops. Once blue has been selected for the background, the centuries-old “rule of tincture,” which derives from heraldry, shrinks the foreground options to white and yellow (for a more modern look, hot pink, orange, and the brighter shades of green also provide strong contrast). Of these 17 images, two have recognisably white bodies (the first and sixth images from the left on row two), but they are clearly white plastic robots, not white men. One face is sort of black (the third image from the left on row two). Two faces are sort of blue (the first image from the left on row one and the fourth image from the left on row three). One image has a white hand (the fifth image from the left on row one). Eight images show visible brains, and over a dozen show visible circuitry.

I can find no compelling evidence for the Whiteness of AI here. There is an unsurprising correlation between AI, circuits, and brains. The circuits are artificial. The brains are intelligent. Since actual human brains are white-to-beige in colour regardless of whether they belong to blacks or whites, it is not especially surprising that most illustrative art shows them as white to maximise contrast and clarity. I also see a dominance of blue backgrounds with white foregrounds and the occasional splash of orange, hot pink, and green. There is no dominance of the white race here either, just some white plastic faces. The prevalence of blue backgrounds strikes me as the greater curiosity. Why not green? Why not red? Is there some malign influence from Big Blue? But there is no racism mileage in that line of inquiry, so the authors decline to pursue it.

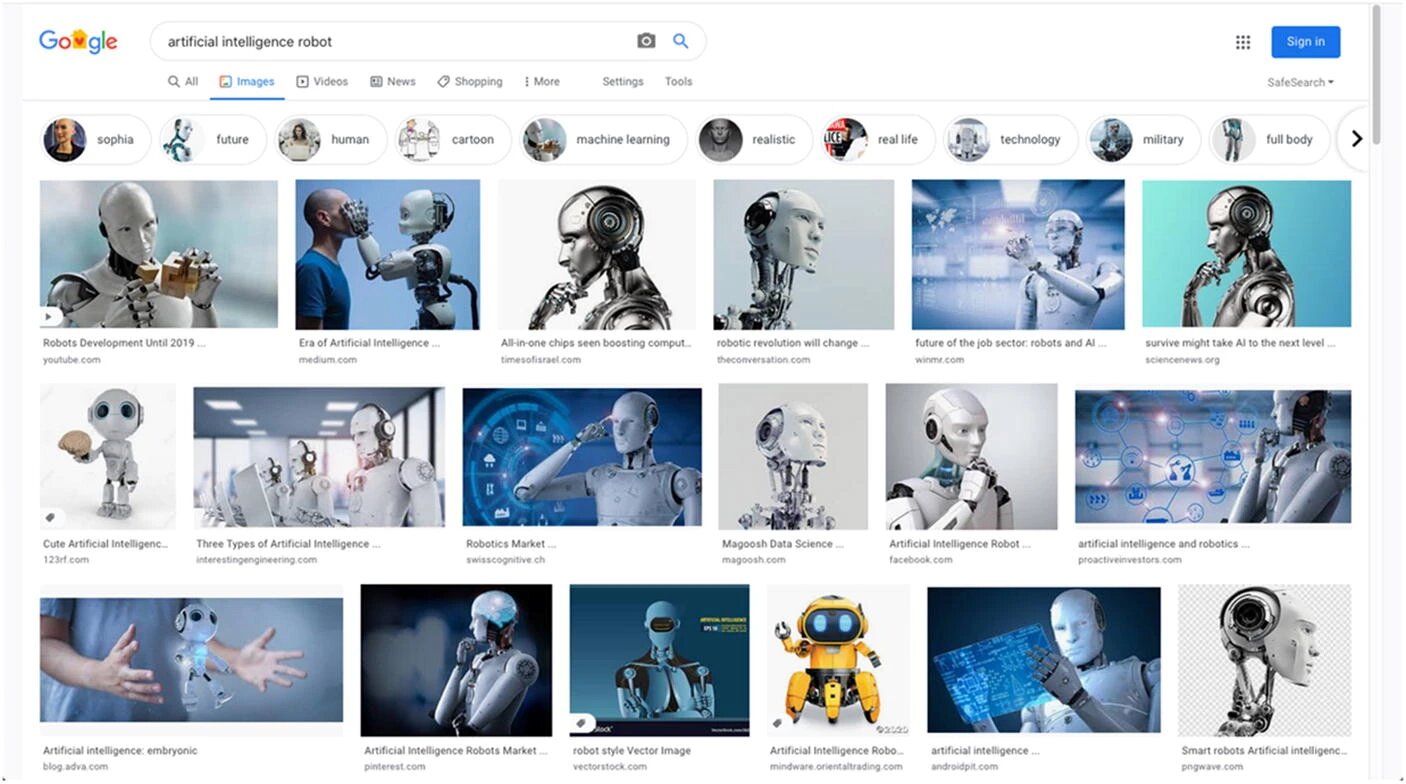

The second Google screenshot offers more convincing evidence of the Whiteness of AI, but it would be better expressed as a claim about the Whiteness of Robots. It shows the result for an image search for “artificial intelligence robot”:

These results better support the authors’ claim, but they offer a very limited sample. Several of these pictures depict the same robot photographed from different angles. For example, the third and sixth pictures in row one are images of the same robot. The second and third images in column one are of the same robot. The fourth image in row one, the fourth image in row two, and the sixth image in row three are of the same robot. The sixth image in row two and the second in row three are of the same robot. These 18 pictures show fewer than 10 separate robots, and most of these “robots” are not working robots at all but rigs built with the graphic design tools used to create animated characters for games and movies. Two are real robots, but most are artworks designed to strike a pose, not to undertake work. As for the colour, most robots have plastic surfaces. As the natural colour of plastic is white, it is hardly surprising that this is the most common colour for humanoid robots.

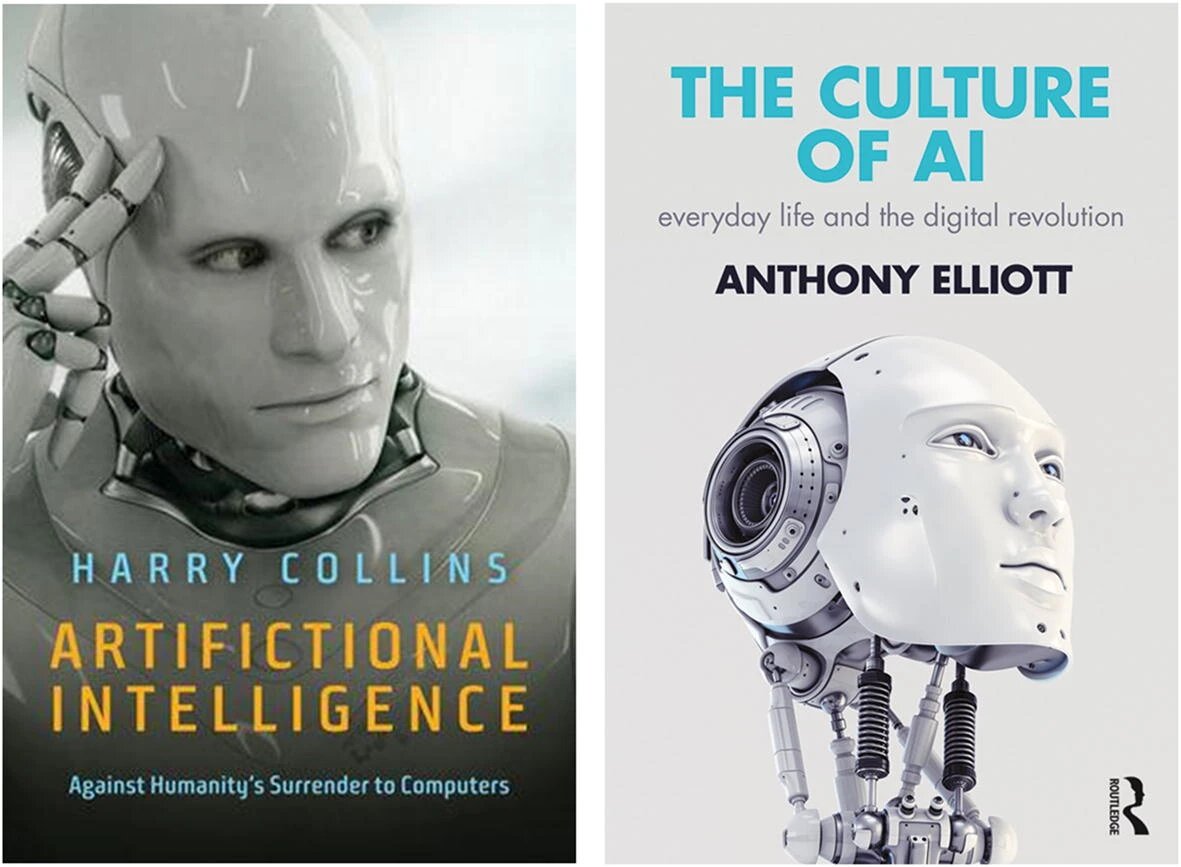

The authors also present two book covers. Having looked for covers for books on robots and AI myself on more than one occasion, I can attest to the lack of choice when it comes to stock images of intelligent-looking robots.

These are not real robots, either. In the German edition of a book on AI and robotics I co-wrote, the publisher went with a white robot embryo produced by their in-house art department. Does this portrayal show the racial Whiteness of AI, the natural skeletal whiteness of embryonic bones, or is it just a strong cover design that makes you want to pick up the book? The last of these was what the publisher intended. Apropos of nothing, my co-authors and I rejected a similar design with a blue background.

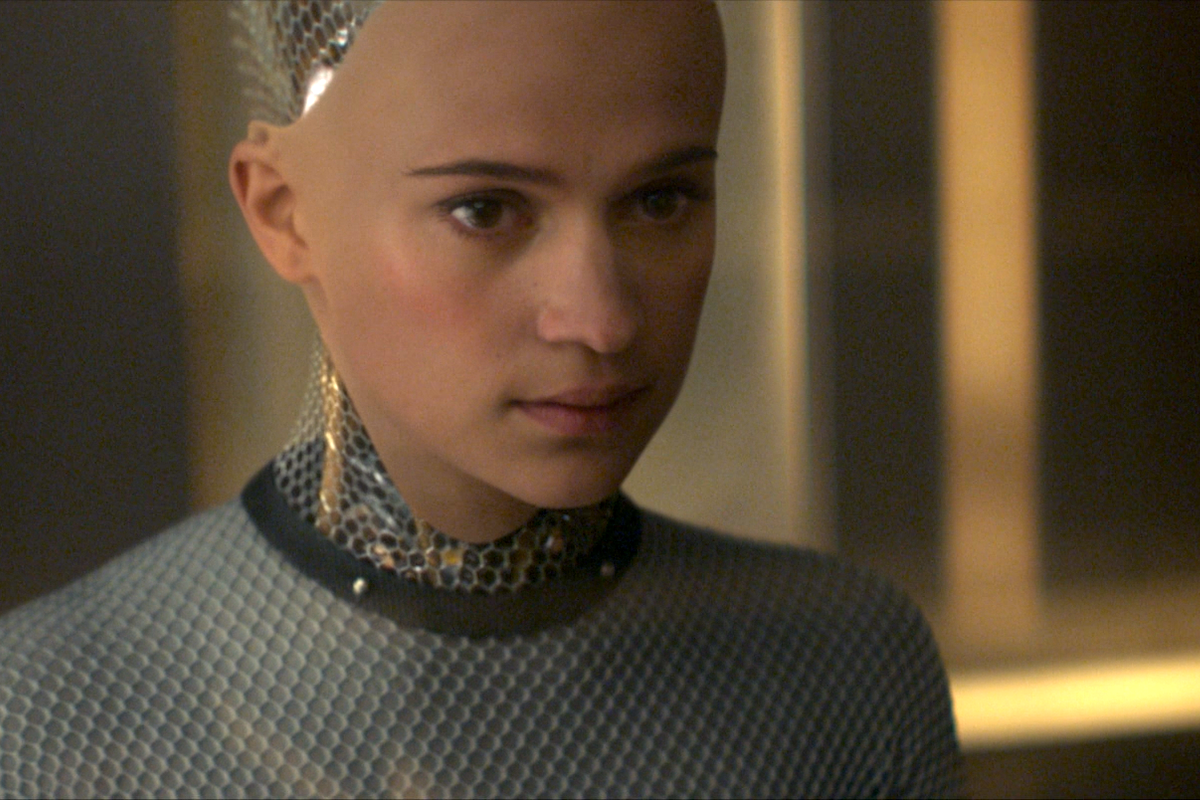

Finally, the authors turn to images of Sophia, a robot made by Hanson Robotics that was granted Saudi citizenship, and Ava, the “fully functional” female sex robot who murders her creator in the film Ex Machina, played by a digitally altered Alicia Vikander combined with an animated robot rig. Conspicuous by their absence are Kyoko, the Japanese sex robot in Ex Machina, and various “robots” from Westworld: the black females Maeve and Charlotte, the black male Bernard, and the Japanese male Musashi. Like Ava in Ex Machina, these are human actors enhanced by special effects. One might also mention the red-eyed, chrome-skulled robots whose shiny feet crush white human skulls in the Terminator movies, and the Cybermen of Doctor Who, who come in two non-white colours (silver and bronze). Perhaps the most glaring omissions are the Japanese robots made by Hiroshi Ishiguro that look like Hiroshi Ishiguro. These are associated with research into the “uncanny valley” effect first described by Masahiro Mori in 1970. None of these well-known robots supports the Whiteness of AI, which is almost certainly why the authors excluded them.

They could also have included real robots like SoftBank’s Pepper or the Toyota Human Support Robots used by Preferred Networks (which have a lot of white plastic with various accent colours), but they do not. This might be because a white plastic robot does not evoke racial whiteness any more than a white bathtub or a white fridge does. Characterising AI as white in the first place is odd. Doing so on the basis of a Google search for “artificial intelligence robot” and a handful of cherry-picked images from books and movies is unconvincing. If you are going to persuade AI experts about the Whiteness of AI, your dataset should have a lot more than 39 images in it.

The essence of AI is not white oppression, racism, sexism, and colonialism; it is the automation of mathematics and logic. A convolutional neural network performing image classification can pick up the colour white and the colour black (easily) and the race White and the race Black (not so easily), but the authors are drawing a very long bow when they insinuate that AI is a racist conspiracy exercising dark, unseen, and symbolic power to keep non-whites out. If anything, AI these days is obsessed with diversity and inclusion initiatives and hyper-sensitive about regression and classification bugs that activists can tag as “racist.”

Anyone who believes AI is a predominantly white field should peruse the names of the authors at leading AI conferences. The names of the authors of papers accepted at this year’s FAccT conference on fairness, accountability, and transparency in AI, for instance, do not provide compelling evidence that AI is some kind of Honky Town that seeks to exclude non-whites. The most common surname among the authors of the accepted papers is Singh. Alternatively, the authors could have offered global employment statistics in support of their allegation about the Whiteness of AI. Unsurprisingly, they do not bother to do that either, because the numbers from India and China would crush their argument.

The authors argue that “race and technology are two of the most powerful and important categories for understanding the world as it has developed since at least the early modern period” and claim that “their profound entanglement remains understudied” due to “racial prejudice.” Incorrect. The actual reason is an obvious lack of relevance. Any culture with superior technology can invade, conquer, and subjugate another that has inferior technology. Race is accidental, not essential, to conquest and colonisation. It is true that the colonisers generally (and falsely) believed they were “racially superior” to the colonised. But what enabled colonisation was significant superiority in weaponry and technology, not significant superiority in race.

“Political power,” Mao said, “grows out of the barrel of a gun.” Guns result from cultural factors such as science, business, engineering, and politics, not racial ones. Superior weaponry enabled four centuries of European colonisation and expansion. Yet after World War II, when the colonised got their hands on Kalashnikovs, the old colonial empires of Europe fell apart in less than four decades. By the late 1970s, hardly any colonies remained. Technology is the child of culture, not race.

Today, science and engineering are open to all. This is particularly true of AI, which has comprehensive open-source resources freely available online. The suggestion that a powerful set of “Whiteness” signs and symbols, “unnoticed and unquestioned, concealed by the myth of colour-blindness,” is discouraging non-white people such as Google’s CEO from entering the AI industry is ludicrous. Millions of non-whites are working in AI already.