
AI Is Not About To Become Sentient

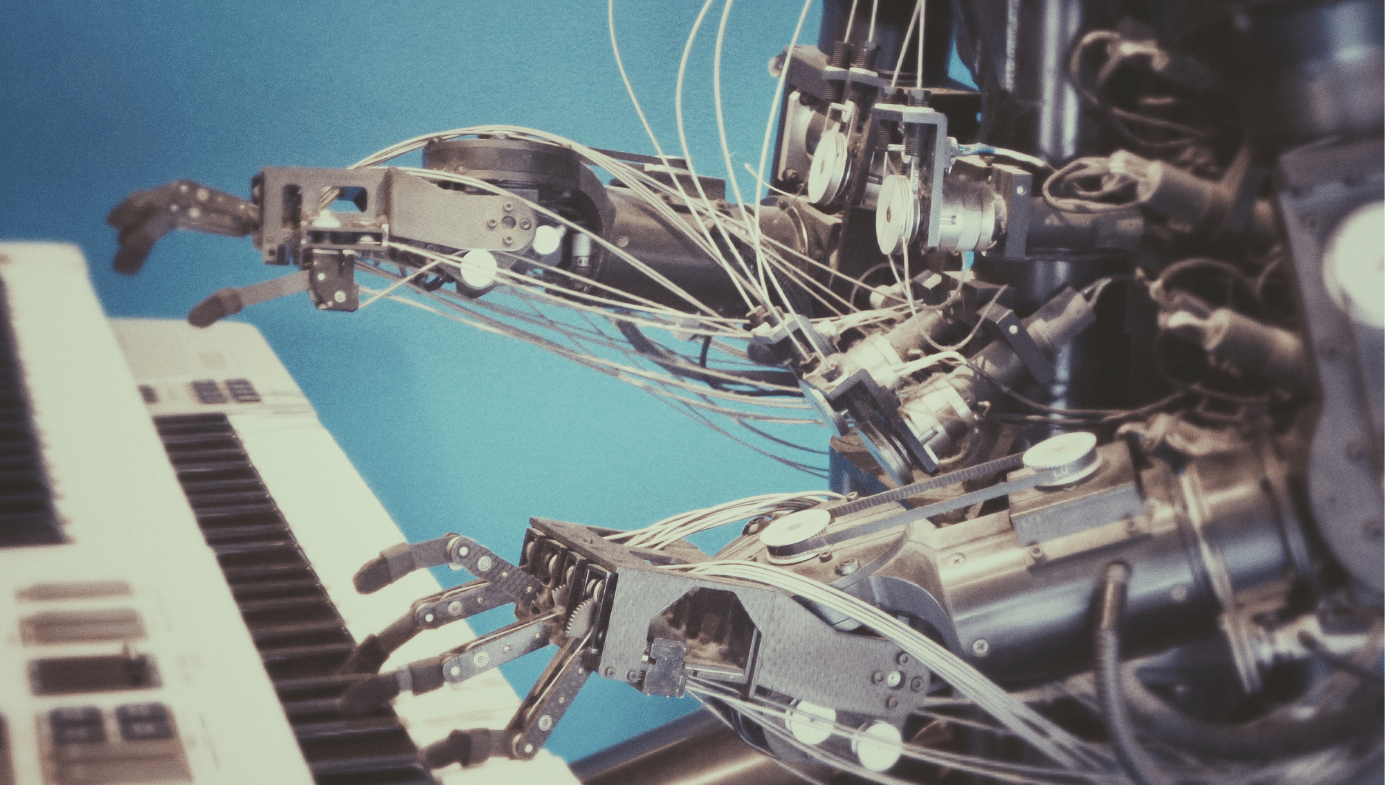

Most of today’s “artificial intelligence” is better described as artificial autocomplete than artificial mind.

The most recent panic around emerging-sentience AI was born of a lie. Someone decided it would be cute to endow OpenClaw with some inalienable rights, and produced counterfeit conversations that sounded like Geoff Hinton’s worst dreams were coming true. They’re not, and they are not about to. Most of today’s “artificial intelligence” is better described as artificial autocomplete than artificial mind. Large language models are vast pattern-recognisers: they have learned statistical regularities in text, and they are extremely good at reproducing them.

As neuroscientist Christof Koch points out, tools like ChatGPT, Claude, and Perplexity can only “regurgitate what other people” have written. In Then I Am Myself the World, Koch considers whether an advanced but non-conscious AI could ever really grasp any definition of consciousness at all, beyond repeating words in the right order. Fluency can fool us into attributing inner life where there is only computation. But as Annaka Harris reminds us in Conscious, complex behaviour alone “doesn’t necessarily shed light on whether a system is conscious or not”: both conscious and non-conscious systems can produce the same outward performance.

Consciousness is about there being “something it is like” from the inside; a language model, by contrast, is all outside and no inside. The most interesting epistemic outcome so far is not that agent-based AI is finally crossing the fuzzy boundary of general intelligence, but that it is forcing us to reconsider what it means to think, and to think creatively. Human sentience still does not have a clear scientific definition, one that can be reproduced, tested, disproven, extended, and publicly debated. But there is something else to being human, even if we don’t understand it yet. This opaqueness, ambiguity, and complexity (of spirituality and meaning) are not ghosts of a big machine; they are features of rich and experience-drenched lives.

History teaches that AI will produce individual winners and losers, just like every other technological revolution. But we also know that society inexorably benefits when we understand more facts, whether those facts relate to how electricity is made or how economic policies are made. This is true even for people who have no interest in science, technology, or government, and are just lucky enough to be alive—or kept alive—when a new thing like antibiotics, lights, jets, telephones, or ChatGPT arrives. Medicine, maths, materials, and rule-of-law democracy have made us all healthier, more comfortable, richer, safer, and (sometimes) more connected. (I mean this in the way that I can call my kids overseas any time I want, not the shitposting way designed to irritate, aggravate, and performatively confront without authentic risk or coherent outcome.)

The policy debates are real and the decisions we make today will matter tomorrow. I am not advocating a particular position here; nobody yet knows what “fair” looks like in the context of large language models. I do know truth and compassion are part of the formula because they lead to strength, not because they’re derived from it. And given the fractures and tension in the United States right now, it is by no means clear that we are going to decide democratically about appropriate use of these new tools, where they’ll be restricted, where they’ll be licensed, where they’ll be available, and how we will keep track of it all. I come from the Jonathan Rauch school of epistemology, which is to say that having a fair, open, and honest debate is the transcendent moral cause, period.

Sebastian Elbaum and Sebastian Mallaby offer an especially creative and beautiful framing of the social impacts of AI, from national security and bloodless warfare, to fair opportunity and economic growth. As Dan Geer has famously said of cybersecurity, “Freedom. Security. Convenience. Choose two.” So too will it be in AI.

But back to OpenClaw. The mordant observation here is not so much that somebody cheated, that the tech world got a titillating thrill for a few minutes, and that even Andrej Karpathy, a titan of trust and integrity in the AI world, believed it could be true. The more fundamental point that Nobel Prize-winners and alarmist politicians are missing is that the computer programs that produce synthetic conversations (enabled by multibillion-dollar data centres and over a trillion dollars in market-sustaining investments) are trained on what we told them we do. They don’t “think” at all. They’re a mixmaster of other people’s ideas, cleverly packaged in a way that we perceive as natural.

It is no wonder that we anthropomorphise the interactions between humans and these machines. They feel real, and they are extraordinary in their breadth, depth, and competence. Their memory is enormous. But they have no more consciousness, sensitivity, and sentience than a hammer. They are feeding back to us new ideas—and sometimes wonderfully original and attractive concepts—based upon what we ourselves have said in different ways, done in different contexts, chosen in different times.

It is certainly true that the results have surprised us, and that they will lead to ever more automation and creative problem-solving, from menial tasks we think only humans can perform today to wartime decisions we already know machines make better. But the platforms are not the neuronal connections of a creative brain; they are the stochastic outcomes of silicon, copper, and a whole lot of electricity.

The perception that AI bots were making their own rules and boxing out human intervention was believable not because AI is that advanced—or will ever be that advanced—but because that’s what real people do. They dissemble sometimes. They disobey sometimes. They behave selfishly, or bully, or say really dumb things that sound macho or nostalgic but do not stand the test of time in anything like an objective and rational frame of reference. And all this is written down, recorded, and fed into the machine as “training data.” So why should we be surprised if an AI—contaminated with examples of the occasionally dubious ethics, words, and actions of its creator species—convincingly mimics those behaviours?

Remember: healthcare, transportation, democracy, and GPS. None of them works perfectly, and all of them are born of human intuition and invention. Leading-edge scientists, market-dominating companies, and public officials of all stripes and perspectives are leveraging AI in exactly the same way that technologists, firms, and leaders have leveraged (for better and for worse) chemistry, physics, and biology since before the industrial revolution. The difference this time is that the robots are made, by us, to look like us. But that doesn’t mean they are us. Every device made by man has an off switch. We can use it sometimes.

Quillette invites thoughtful responses to its essays.
