Progressive Orthodoxy

A Puritanical Assault on the English Language

Social justice zealots think they can save the world by inventing absurd new ways to describe it.

It is a truism that people are often educated out of extreme religious beliefs. With good education comes the ability to think critically, which is the death knell for ideologies that are built on tenuous foundations. The religion of Critical Social Justice has spread at an unprecedented rate, partly because it makes claims to authority in the kind of impenetrable language that discourages the sort of criticism and scrutiny that would see it collapse upon itself. Some would argue that this is one of the reasons why the Catholic Church resisted translating the Bible into the vernacular for so long; those in power are always threatened when the plebeians start thinking for themselves and asking inconvenient questions.

This tactic of deliberately restricting knowledge produces epistemic closure, and is a hallmark of all cults. The elitist lexicon of Critical Social Justice not only provides an effective barrier against criticism and a means to sound informed while saying very little, but also signals membership and discourages engagement from those outside the bubble.

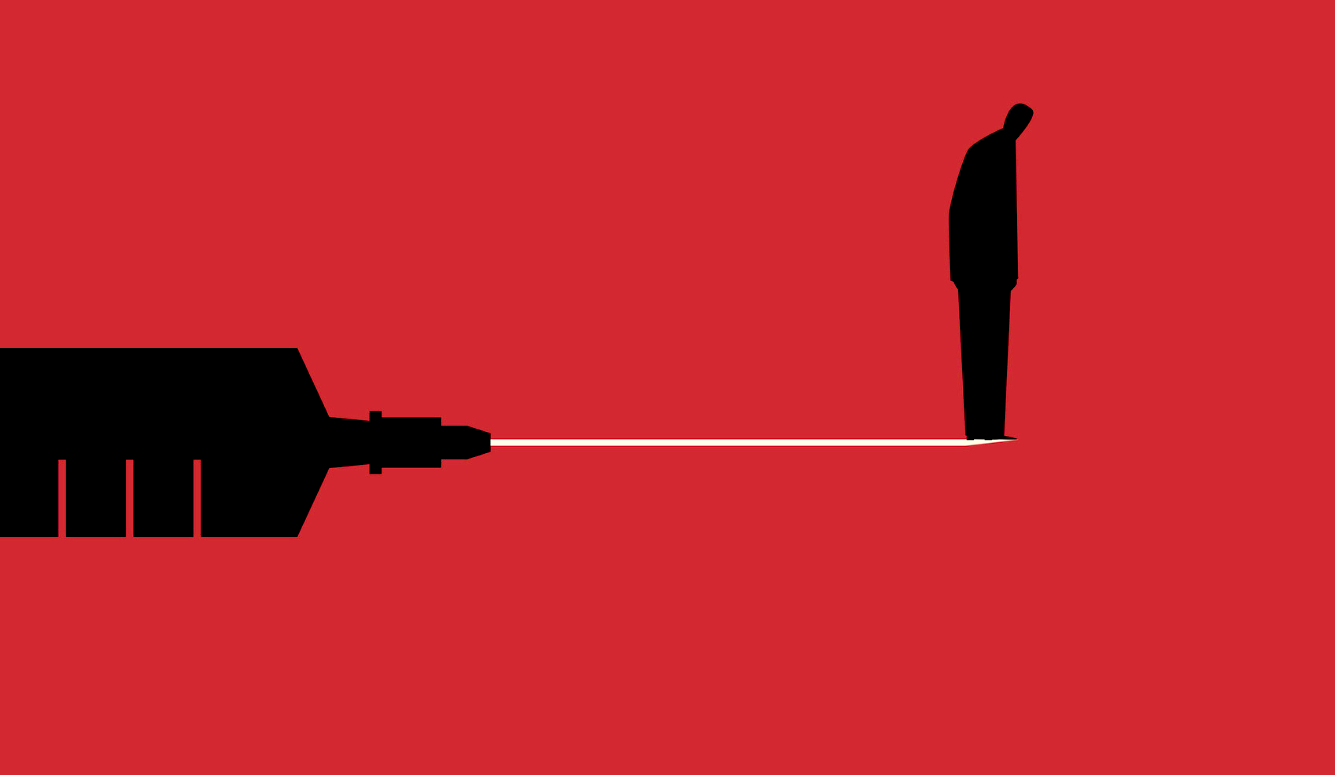

It is inevitable that the principle of freedom of speech should become a casualty when powerful people are obsessed with language and its capacity to shape the world. Revolutionaries of the postmodernist mindset would have us believe that societal change can be actuated through modifications to the language that describes it, which is why Max Horkheimer of the Frankfurt School maintained that it was not possible to conceive of the liberated world in the language of the existing world. As for the new puritans, they have embraced the belief that language is either a tool of oppression or a means to resist it. This accounts not only for their approval of censorship and “hate speech” legislation, but also for their inability to grasp that the artistic representation of morally objectionable ideas is not the same as an endorsement.

It further accounts for their hostility to debate. According to this view, the airing of toxic ideas enables their promulgation, and so there is a moral duty to ensure that they are silenced. This is the logic behind the practice of “no platforming” or “deplatforming,” which sees visiting speakers disinvited from appearing on university campuses due to their contentious opinions. The new puritans’ longed-for utopia, in which all forms of prejudice are eradicated from the human instinct, will apparently be brought about through the control of how we speak and, by extension, how we think.

In addition to calls for censorship, language can be manipulated through what is known as “concept creep,” by which words lose any meaning through endless misapplication. The most disturbing example has been the expanded meaning of terms such as “far right,” “fascist,” and “Nazi,” which has needlessly raised the temperature of current political debates. A new make-believe domain has emerged, in which many are gripped by an irrational conviction that we live in a country dominated by fascists, poised to rise and seize control like a rerun of Mussolini’s march on Rome. So one left-leaning commentator informs us that “fascist extremism and terrorism is being legitimised and fuelled by ‘mainstream’ newspapers and politicians alike.” Another insists that “all white people” are implicated “in white supremacy.” The rhetoric has become so ubiquitous that these terms have begun to lose their potency.

Fascist extremism and terrorism is being legitimised and fuelled by "mainstream" newspapers and politicians alike. pic.twitter.com/nHyjUqJhlb

— Owen Jones (@OwenJones84) August 13, 2017

So why is it that so many journalists and activists are persuaded that neo-Nazism has gone mainstream? Why do so many on social media feel the need to identify themselves as “anti-fascist”? Like most people, I have never met an actual fascist. I have encountered some racists, a few far-right advocates, and one white nationalist during the filming of a programme for the BBC—but no fascists, so far as I am aware. My default expectation of my fellow creatures is that they would instinctively oppose such pernicious ideas. Claiming to be an “anti-fascist” is rather like wearing a badge saying “I am not a paedophile”; it makes others wonder what you’re hiding.

The illusion of a crypto-fascist epidemic is buoyed by the misapprehension that white supremacists and neo-Nazis tend to keep their views to themselves. This is untrue; one of the problems we face in combating these ideologies is that fealty to the cause is considered a source of pride. By failing to use terms accurately and with care, commentators and journalists have created the impression that such groups are pervasive and have thereby inadvertently promoted them. It is no great leap to suppose that this goes some way to explaining why the far Right has lately been recruiting members with greater ease. Although still extremely marginal, there is evidence to suggest that the far Right is growing, and while we ought to take this very seriously, we should not allow the truth to be distorted through lazy hyperbole.

Then there is the slippery term “alt-right,” a catch-all that rivals “fascist” and “Nazi” for the way in which it is deployed so thoughtlessly. Even Jordan Peterson, the famous clinical psychologist whose opposition to tyranny in all its forms could not be more well documented, has been branded as “alt-right” by numerous media outlets. In common parlance, the term has become irrevocably associated with white nationalism and movements helmed by the likes of Richard Spencer. So when Peter Walker, political correspondent for the Guardian, claims that the meaning of “alt-right” is “subjective,” he is either being disingenuous or naïve. According to Walker, it “can be associated with a sort of highly robust, fairly confrontational libertarian right-leaning politics with a dash of support for Trump,” but his use of the modal verb is telling. That a phrase with such potentially libellous connotations can be defined in multiple ways should surely give journalists pause for thought. Unless, of course, their intention is to imply a correlation with white supremacy, safe in the knowledge that the get-out clause of ambiguity will excuse the smear.

We see the same problem with the notion of “Islamophobia,” which has been weaponised to great effect. As a consequence, people with legitimate criticisms of Islam are gratuitously pigeon-holed alongside the sort of reactionaries who shout abuse at women in hijabs or throw bacon at mosques. Even Muslim critics are dismissed as suffering from “internalised Islamophobia” if their ideas are not deemed sufficiently deferential. In a free society, no religious belief should be ringfenced from analysis or mockery, and the accusation of “Islamophobia” has become the primary tool by which to discourage potential critics.

We need to restore some clarity. We are right to call groups such as the British National Party “far right,” because they have always been dominated by those who believe in the concept of racial superiority, but once the meaning of the term spreads to incorporate civic nationalists, readers of right-leaning tabloids, those who voted for Brexit, or gender-critical feminists, the words become denuded of their power. Hysteria is no sound basis for political analysis, and nor is it advisable to allow genuine fascists the opportunity to claim greater support than they actually command. For all the alarmism of the new puritans, we do not live in a country in which racism, homophobia, misogyny or anti-trans hatred are considered in any way acceptable. Even the mildest suspicion of such tendencies can result in a form of social excommunication. That is not to say that such prejudices have been eliminated—human nature is far too flawed for that—but it is reassuring that we appear to have reached a civilised consensus.

There was a time when the right-wing press seemed to be dominated by fantasists. Reports abounded of asylum seekers being showered with benefits, ethnic minorities forcing local councils to ban the word “Christmas” in favour of “Winterval,” or nurseries teaching children to sing Baa, Baa, Rainbow Sheep so as not to offend black people. Such histrionic “PC gone mad” stories were typically propagated by reactionary tabloid polemicists. They were describing a fantasy Britain, one distorted by fear, ignorance, and possibly a few too many boozy press lunches. Not to be outdone, the new puritans have seemingly conjured a different kind of imaginary Britain, in which the behaviour of a few extremists is magnified to an absurd extent. These are the reactionaries of our present culture war, occupying a nightmare land of their own making.

Some sympathy is warranted here; it must be exhausting to harbour such convictions, untethered as they are from reality. But the fact remains that it is our security services and intelligence agencies who are taking on the handful of fascists in our midst. The rest are just scrapping with ghosts.

That the new puritans have consolidated their power through the manipulation of language might explain why they have secured so much influence over public libraries. During the past few years, we have seen a bizarre militancy from librarians who are keen to “decolonise” their collections or berate people for their reading habits. I am not suggesting here that there has been some kind of conspiracy among ideologues to infiltrate libraries by stealth, but rather that the role seems to attract activists who are convinced that society can be re-engineered through censorship of the words we say and the books we read.

I am aware of how ludicrous the concept of “woke librarians” might sound, but the evidence is compelling. Even the British Library has a “Decolonising Working Group,” which has successfully persuaded its management to review its collections, “powerfully reinterpret” statues of its founders, and put more than 300 authors on a watchlist if they have even the flimsiest of connections to the slave trade. One of the group’s more risible findings is that the library’s main building is a monument to imperialism because it resembles a battleship.

Recently I have noticed the weird rise of “woke librarians”; militant ideologues who are intent on revising the past and “decolonising” libraries.

Needless to say, these are the last people who should be entrusted with the custodianship of knowledge. https://t.co/CH3Ygih6vG

— Andrew Doyle (@andrewdoyle_com) August 30, 2020

When archivists at Homerton College, Cambridge, were engaged in a project to upload their collection of children’s literature to the Internet, they decided to apply “trigger warnings” to texts that might cause offence due to outdated racial stereotypes. These included Little House on the Prairie (1935) by Laura Ingalls Wilder, The Water Babies (1863) by Charles Kingsley, and various books by Dr Seuss. Typically, such gestures are a reliable indication that those responsible are ideologically captured, and this was confirmed by a statement issued on behalf of the college. The archivists apparently sought to make their digital collection “less harmful in the context of a canonical literary heritage that is shaped by, and continues, a history of oppression.” Note the familiar invocation of “harm” unfittingly applied to the written word, and the underlying assumption that art and literature are a means by which the oppressor class perpetuates its power over marginalised groups.

Most revealingly, the archivists stated that it would be “a dereliction of our duty as gatekeepers to allow such casual racism to go unchecked.” This is what has become known colloquially as “saying the quiet part out loud.” The role of the archivist is that of the custodian, not the gatekeeper. Yet it is clear from this statement that those in charge of this digital project consider it their duty to shield the public from potentially corrupting texts rather than to facilitate access. Any competent teacher will confirm that even young children are capable of appreciating the concept of historical context and how values shift over time, particularly with the proper guidance. These self-appointed “gatekeepers” evidently believe otherwise.

The application of “trigger warnings” has been common practice in universities since at least 2013. These can range from literary works on English Literature courses to the study of rape cases in law schools. One professor at Harvard University writes how a colleague was “asked by a student not to use the word ‘violate’ in class—as in ‘Does this conduct violate the law?’—because the word was triggering.” It is hardly crass to point out that if undergraduates cannot bear to hear about unpleasant crimes, they should avoid the study of law. It would be like someone who cannot stand the sight of blood specialising in heart surgery.

Those who defend the practice of trigger warnings argue that they are simply protecting certain students from the reignition of a pre-existing trauma. If someone has been the victim of a violent attack, the reasoning goes, they should be made aware in advance if a text contains distressing imagery. The intention is clearly compassionate, and few would deny that it is upsetting to be reminded of past experiences we would rather forget. Early in my short-lived teaching career, I was blindsided by an incident in an English Literature class that might have been prevented had warnings been issued. I was teaching a poem in which an execution by hanging was vividly described. As I was reading the text aloud, a pupil ran from the classroom in tears. It was only later that I discovered that one of her relatives had recently hanged himself. After this unfortunate incident, I reflected on whether a warning at the beginning of the lesson might have prevented this outcome, or if this was simply the consequence of a failure of communication among teaching staff.

As it turns out, there is a consensus among cognitive behavioural therapists that trigger warnings are counter-productive when it comes to trauma recovery. As Greg Lukianoff and Jonathan Haidt explained in The Coddling of the American Mind (2018), “avoiding triggers is a symptom of PTSD, not a treatment for it.” They quote Richard McNally, the director of clinical training at the Department of Psychology at Harvard University, who writes: “Trigger warnings are counter-therapeutic because they encourage avoidance of reminders of trauma, and avoidance maintains PTSD.”

There is a distinction to be drawn here between trigger warnings at universities and the kind of notifications we find before films aimed at helping parents to determine whether the content is suitable for their children. When the British Board of Film Classification (BBFC) alerts viewers to potentially upsetting material, they do not make the claim that it is “harmful,” only that it may be inappropriate for certain age groups. Trigger warnings, on the other hand, are justified on the basis of this putative nexus of words and violence, one that is informed by the ideology of the new puritanism.

Worse still, this flawed notion can be weaponised by activist students who wish to exert some degree of control over their teachers. A letter from seven anonymous academics to Inside Higher Ed drew attention to the potential risk:

We are currently watching our colleagues receive phone calls from deans and other administrators investigating student complaints that they have included ‘triggering’ material in their courses, with or without warnings. We feel that this movement is already having a chilling effect on our teaching and pedagogy.

Trigger warnings have also been deployed as a form of appeasement to activists, a signal that their concerns are being heeded even if they are groundless. For instance, after students and staff demanded that the Faculty of Classics at Cambridge acknowledge the “systemic racism” of this entire field of study, trigger warnings were added to ancient Greek and Roman literary works. Given the litany of sexual violence in these texts, one would have assumed that squeamish students would have avoided the subject altogether. Consider Ovid’s account in the Metamorphoses of how Adonis was conceived; his mother Myrrha tricked her own father into copulation and, while heavily pregnant, was transformed into a tree. This is surely the very definition of a dysfunctional family.

A further aspect of the faculty’s “action plan” was to add signs to the display of plaster casts of Roman and Greek sculptures explaining that their “whiteness” was not to be taken as a sign that the ancient world lacked diversity. Of course, this sort of performative handwringing is hardly necessary. The whiteness of the plaster casts can be readily accounted for by the fact that plaster is white.

Such needless and patronising explanations are entirely in keeping with the trigger-warning mentality, which perceives adults as being locked in a permanent state of infancy. In the summer of 2021, Brandeis University in Massachusetts published an “oppressive language list” of phrases best avoided by students and staff. Examples included “female-bodied,” “lame,” and “spirit animal.” The phrase “person experiencing housing insecurity” was recommended as an inoffensive alternative to “homeless person.” A category headed “violent language” featured phrases such as “rule of thumb” and “you’re killing it”; the latter apparently could be misconstrued as an allegation of homicidal intent. This is evidently a university for the congenitally literal-minded.

Brandeis University expands list of ‘oppressive’ words and phrases to avoid using https://t.co/ohJK1MRAms

— Boston Herald (@bostonherald) August 28, 2021

Of course when it comes to the culture wars, the United Kingdom is never far behind the United States. Soon after the publication of the “oppressive language list” at Brandeis, it was reported that the University of Glasgow had instructed staff to apply trigger warnings to course content to prevent distress. As an added twist, it was decreed that the phrase “trigger warning” might itself be triggering as it invokes guns and violent imagery, and so “content advice” was to be preferred.

Other universities have competed to see who can invent the most asinine warnings: Shakespeare’s Julius Caesar (1599) has a plot that “centres on a murder”; Robert Louis Stevenson’s Kidnapped (1886) “contains depictions of murder, death, family betrayal and kidnapping”; Ernest Hemingway’s The Old Man and the Sea (1952) includes scenes of “graphic fishing.” Even George Orwell’s Nineteen Eighty-Four has been slapped with a warning that students might find the contents “offensive and upsetting.” Of course, those who would assume that a famous dystopian novel would be inoffensive and uplifting probably shouldn’t be studying literature in the first place.

Political commentator Brendan O’Neill has described trigger warnings as “a slippery form of censorship,” insofar as they “don’t outright ban books but they do shroud them in suspicion; they treat them as dangerous objects; they tell readers, ‘Watch out—this book might hurt you.’” And once we countenance the premise that words can be “harmful,” the next logical step is the withdrawal or destruction of books. Following a review of its collections, the Waterloo Region District School Board in Canada identified and removed books that were considered “harmful to staff and students.” Other school libraries did away with copies of Harper Lee’s To Kill a Mockingbird (1960) and Margaret Atwood’s The Handmaid’s Tale (1985), following complaints about “racist, homophobic, or misogynistic language and themes.”

Those involved in these decisions evidently lack the most basic interpretative abilities. To Kill a Mockingbird is, of course, explicitly anti-racist, but for those who have invested words with the power to wound, the inclusion of racial slurs is sufficient to see it condemned. Never mind that the novel is set in the Deep South during the era of the Great Depression when such terms were commonplace, nor that Lee invites us to sympathise with the characters who believe, as does Atticus Finch, that “you never really understand a person until you consider things from his point of view … until you climb into his skin and walk around in it,” and how justice is the right of every man “be he any colour of the rainbow.”

When NewSouth Books published an edition of Mark Twain’s The Adventures of Huckleberry Finn (1884) in which the racial epithets were eliminated, they were demonstrating a similarly myopic approach. Like Atticus Finch, Huckleberry is a rare figure who can see beyond the ingrained prejudices of his time. Through its first-person narrative, we are invited to share the vantage point of a boy who instinctively rejects the prevailing conventions. The target of Twain’s satire is a community whose members believe themselves to be moral and godly but are happy to enslave and degrade their fellow creatures. In this we can detect Rousseau’s conception of the child uncorrupted by “civilisation”; it takes the innocence of a boy to see through the sanctimony and hypocrisy of a society built on exploitation.

The impulse to censor, or remove entirely, such explicitly anti-racist texts in the name of “anti-racism” is a reminder of how the new puritanism can only be sustained where critical thinking is absent. Language is reduced to a series of cyphers intended only to bolster oppression or resist it. The prioritisation of the “lived experience” of the reader—or, more often, the non-reader—means that even the most egregious misinterpretation may be taken as evidence of a text’s capacity to “stir up hatred.” How else might we explain the removal of The Handmaid’s Tale, a mainstay of contemporary feminist literature? The novel depicts a dystopian future in which women are reduced to broodmares for the ruling class. It is no accident that it is set in New England; Atwood draws a direct connection between the theocracy of this era and the totalitarianism of Gilead, her fictional dystopia, particularly in the treatment of women and the assumption of their inherent servility. Like the women of New England, who dressed plainly and were prohibited from using combs or mirrors, Atwood’s handmaids are attired in a manner that denies them an individual identity: ankle-length red skirts, a flat yoke with full sleeves, and white wings around the face that prevent them “from seeing, but also from being seen.”

Atwood has described her “take on American Puritanism” as “not that far behind” Nathaniel Hawthorne’s The Scarlet Letter (1850). Hawthorne’s novel tells the story of Hester Prynne, a woman convicted of adultery and forced to wear an embroidered letter A as a mark of shame and expiation for her sins. Both Atwood and Hawthorne share an ancestral connection to the puritans of New England. Hawthorne was the great-great-grandson of John Hathorne, one of the leading judges in the Salem witch trials, and found little pleasure in revisiting these ghosts of his family’s repressive past. Perhaps more than any other author, Hawthorne is responsible for the view of the puritans as repressive, bigoted, and hypocritical. He considered The Scarlet Letter to be a “positively hell-fired story,” into which he “found it almost impossible to throw any cheering light.” Atwood’s family connection is through Mary Webster, one of the dedicatees of The Handmaid’s Tale, who was hanged for being a witch in 1683 but survived the execution process.

Those responsible for the removal of The Handmaid’s Tale from libraries might not be executing women who fail to conform, but they are certainly embodying the kind of ideological fervour that the novel explores. I am reminded of the comedian Victoria Wood’s short television play The Library (1989), which depicts a tyrannical librarian called Madge who likes to bowdlerise Jackie Collins novels with a felt-tip pen and draw bras on the women in breastfeeding manuals. “She thinks book-burning is a sensible alternative to oil-fired central heating,” Wood tells her audience in the show’s opening monologue. Little could she have known that, 30 years on, the absurdist notion of a librarian who approves of censorship would become a habitual figure in the industry.

Wood’s jibe was somewhat prescient, given that a school board in south-western Ontario recently authorised the ritualistic burning of books for “educational purposes.” In what they described as a “flame purification” ceremony, almost 5,000 books were removed from shelves and were destroyed or recycled if they were judged to contain outdated racial stereotypes. Some of those that were burned had their ashes used as fertiliser to plant a tree. An uplifting, progressive, and environmentally conscious gesture, if one ignores the overtones of Fahrenheit 451.

At this point the book burnings are no longer just metaphorical. https://t.co/jr3FZfOM0r

— Pedro Domingos (@pmddomingos) September 8, 2021

The new puritans are similarly attracted to positions in which they are able to participate in the revision of dictionaries. Although the conventional role of the dictionary is to record common usage, the rise of the Critical Social Justice movement has meant that definitions are often modified to better reflect the ideological beliefs of staff members. Take America’s oldest dictionary, Merriam-Webster, whose contributors have deemed it necessary to change the definition of the word “racism” to “reflect systemic oppression.” Likewise, the Anti-Defamation League has changed the definition of “racism” on its website from “the belief that a particular race is superior or inferior to another” to “the marginalization and/or oppression of people of color based on a socially constructed racial hierarchy that privileges white people.” In this, they are following the diktats of critical race theorists who believe that “racism” is an equation—prejudice plus power—rather than prejudice or hatred towards individuals on the basis of their race, which is how the vast majority of people understand the term.

In White Fragility: Why It’s So Hard for White People to Talk About Racism (2018), Robin DiAngelo rejects as “simplistic” the notion that racism is best understood as “intentional acts of racial discrimination committed by immoral individuals.” Rather, racism is “a system” or “a structure, not an event.” Furthermore, the “prejudice plus power” formulation explains why the new puritans believe that only white people can be racist. So when two Indian teenagers were arrested at a football game in New Jersey having verbally abused and urinated on a group of black schoolgirls, the New York Times claimed that the perpetrators were “enacting whiteness.” What we might perceive as racism between ethnic minority groups is known instead as “colourism.” Furthermore, it is supposed that a white person cannot experience racism. DiAngelo even offers the theoretical scenario of a white person being “mercilessly” bullied due to the colour of their skin, but resorts to a definitional fudge to explain this away as an example of someone “experiencing race prejudice and discrimination, not racism.” As a linguistic gymnast, DiAngelo often ends up face-down on the mat.

Merriam-Webster has a track record of this kind of paternalistic behaviour. In 2019, “they” was added to the dictionary as a non-binary pronoun and was even judged to be “Word of the Year.” For all these efforts, the use of “they” as singular has not caught on with the general public; further evidence that most people are not the kind of malleable drones that the new puritans believe them to be. According to Oxford University Press, publisher of the Oxford English Dictionary, recent words of the year include “post-truth,” “climate emergency,” and “youthquake.” Note how these choices are conspicuously political. Apart from media circles, the term “youthquake” was hardly ever used in 2017—the year it was honoured—and has since all but disappeared.

In August 2020, Dictionary.com published a new entry for the acronym “TERF” (trans-exclusionary radical feminist), often used as a slur against gender-critical feminists or anyone who believes that there are biological differences between men and women. That Dictionary.com tweeted out a link to its definition, along with the phrase “Beware the TERF,” leaves us in no doubt as to where the company stands on this issue. Many feminists have expressed concerns that the more extreme trans activists are seeking what is tantamount to the erasure of womanhood, denying women’s rights to single-sex spaces and even attempting to shoehorn the neologism “womxn” into mainstream vocabulary, in spite of the fact that no one knows how to pronounce it. These feminists’ suspicions are certainly validated by Dictionary.com’s original choice of illustration for the TERF entry: the female symbol (a circle with a cross underneath) struck through with a red line. Nor was it particularly reassuring to see that one of Dictionary.com’s original example phrases, now deleted, was “punch a TERF.”

As the power and influence of the new puritans accelerate, we can expect to see more capitulation to these efforts at social engineering through the manipulation of language. Take the Encyclopedia Britannica’s transcript of Martin Luther King’s most famous speech: “I have a dream that … one day right there in Alabama, little Black boys and Black girls will be able to join hands with little white boys and white girls as sisters and brothers.” The attentive reader will note that the word “black” has been capitalised, but the word “white” has been left in lower case. This follows the patently ideological decision by the Associated Press to amend its style guide to reflect the view that “white people in general have much less shared history and culture, and don’t have the experience of being discriminated against because of skin colour.”

It sounds like the stuff of fantasy. Yet the proliferation of activists in libraries, dictionaries, schools, advertising, the arts, and the media makes complete sense when one considers that the devotees of the religion of Critical Social Justice have a vested interest in seizing control of outlets that influence how language is used. One thinks immediately of the science-fiction writer Philip K. Dick, who said that “the basic tool for the manipulation of reality is the manipulation of words. If you can control the meaning of words, you can control the people who must use the words.”

Excerpted, with permission, from The New Puritans: How The Religion of Social Justice Captured the Western World, by Andrew Doyle. Copyright © Andrew Doyle, 2022. Published by Constable, an imprint of Little, Brown Book Group.