The Bias that Divides Us

As we sit here over six months after the initial lockdown provoked by COVID-19, the United States has moved out of a brief period of national unity into distressingly predictable and bitter partisan division. The return to this state of affairs has been fuelled by a cognitive trait that divides us and that our culture serves to magnify. Certainly many commentators have ascribed some part of the divide to what they term our “post-truth” society, but this is not an apt description of the particular defect that has played a central role in our divided society. The cause of our division is not that people deny the existence of truth. It is that people are selective in displaying their post-truth tendencies.

What our society is really suffering from is myside bias: People evaluate evidence, generate evidence, and test hypotheses in a manner biased toward their own prior beliefs, opinions, and attitudes. That we are facing a myside bias problem and not a calamitous societal abandonment of the concept of truth is perhaps good news in one sense, because the phenomenon of myside bias has been extensively studied in cognitive science. The bad news, however, is that what we know is not necessarily encouraging.

The many faces of myside bias

Research has shown that myside bias is displayed in a variety of experimental situations: people evaluate the same virtuous act more favourably if committed by a member of their own group and evaluate a negative act less unfavourably if committed by a member of their own group; they evaluate an identical experiment more favourably if the results support their prior beliefs than if the results contradict their prior beliefs; and when searching for information, people select information sources that are likely to support their own position. Even the interpretation of a purely numerical display of outcome data is tipped in the direction of the subject’s prior belief. Likewise, judgments of logical validity are skewed by people’s prior beliefs. Valid syllogisms with the conclusion “therefore, marijuana should be legal” are easier for liberals to judge correctly and harder for conservatives; whereas valid syllogisms with the conclusion “therefore, no one has the right to end the life of a fetus” are harder for liberals to judge correctly and easier for conservatives.

I will stop here, though I have barely begun to enumerate the many different paradigms that psychologists have used to study myside bias. As I show in my new book, The Bias That Divides Us, myside bias is not only displayed in laboratory paradigms but also characterizes our thinking in real life. In early May 2020, demonstrations took place in several state capitols in the United States to protest mandated stay-at-home policies, ordered in response to the COVID-19 pandemic. Responses to these demonstrations fell strongly along partisan lines, with one side deploring the societal health risks of the demonstrations and the other supporting the demonstrations. Only a few weeks later, these partisan lines on large public gatherings completely reversed polarity when new mass demonstrations occurred for a different set of reasons.

Many cognitive biases in the psychological literature are displayed by only a subset of subjects, sometimes fewer than a majority. In contrast, myside bias is one of the most ubiquitous of biases because it is exhibited by the vast majority of subjects studied. Myside bias is also not limited to individuals with certain cognitive or demographic characteristics. It is one of the most universal of the cognitive biases.

The outlier bias

Although it is ubiquitous, myside bias is an outlier bias in the psychological literature in several respects. When my colleague Richard West and I began examining individual differences in cognitive biases in the 1990s, one of the first consistent results from our early studies was that the biases tended to correlate with each other. Another consistent observation in our earliest studies was that almost every cognitive bias was negatively correlated with intelligence as measured with a variety of cognitive ability indicators. Individual differences in most cognitive biases were also predicted by several well-studied thinking dispositions such as actively open-minded thinking. These findings have held for some of the most well-studied biases in the literature: anchoring biases, framing biases, overconfidence bias, outcome bias, conjunction fallacies, base-rate neglect, and many others.

This previous work framed our expectations about what we would find when we began investigating myside bias. The clear expectation was that it would show the same correlations with individual difference variables as do all the other biases. This body of previous work formed the context for the startling finding about the individual difference predictors of myside bias that we actually did observe. The really startling finding was: there weren’t many!

It turns out that myside bias is not predictable from standard measures of cognitive and behavioral functioning. The degree of myside bias that people show is not correlated with their intelligence or level of actively open-minded thinking; nor is it correlated with their educational level. It is not correlated with how much they display other biases. Furthermore, it is a bias that has very little domain generality. That is, myside bias in one domain is not a predictor of the myside bias shown in another domain. It is simply one of the most unpredictable of the biases in an individual differences sense.

Is myside bias even irrational?

Myside bias is an outlier bias in another important way. For most of the other biases in the literature (anchoring biases, framing effects, base rate neglect, etc.), it is easy to show that in certain situations they lead to thinking errors. In contrast, despite all the damage that myside bias does to our social and political discourse, it is shockingly hard to show that, for an individual, it is a thinking error.

In determining what to believe, myside bias operates by weighting new evidence more highly when it is consistent with prior beliefs and less highly when it contradicts a prior belief. This seems wrong, but it is not. Many formal analyses and arguments in philosophy of science have shown that in most situations that resemble real life, it is rational to use your prior belief in the evaluation of new evidence. It is even rational for scientists to do this in the research process. The reason that it is rational is that people (and scientists) are not presented with information that is of perfect reliability. The degree of reliability is something that has to be assessed. A key component of that reliability involves assessing the credibility of the source of the information or new data. For example, it is perfectly reasonable for a scientist to use prior knowledge on the question at issue in order to evaluate the credibility of new data presented. Scientists do this all the time, and it is rational. They use the discrepancy between the data they expect, given their prior hypothesis, and the actual data observed to estimate the credibility of the source of the new data. The larger the discrepancy, the more surprising the evidence is, and the more a scientist will question the source and thus reduce the weight given the new evidence.
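The reasoning in the preceding paragraph can be made concrete with a small Bayesian sketch. Everything in it is an illustrative assumption rather than a model taken from the research described here: the source is either "reliable" (it reports the truth with probability 0.9) or "unreliable" (it reports at random), and the function name `update` and all numbers are hypothetical. The sketch shows how a report that contradicts a well-supported prior can rationally lower the estimated credibility of its source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Posterior:
    hypothesis: float  # P(H | report)
    reliable: float    # P(source is reliable | report)


def update(prior_h: float, prior_reliable: float,
           report_supports_h: bool, accuracy: float = 0.9) -> Posterior:
    """Jointly update belief in H and in the source's reliability.

    Assumed toy model (not from the essay): a reliable source reports the
    truth with probability `accuracy`; an unreliable source reports at
    random (50/50), whatever the truth is.
    """
    def likelihood(h_true: bool, reliable: bool) -> float:
        # Probability of seeing this particular report in each joint state.
        if not reliable:
            return 0.5
        return accuracy if h_true == report_supports_h else 1.0 - accuracy

    # Unnormalized joint posterior over (H true?, source reliable?).
    joint = {
        (h, r): (prior_h if h else 1.0 - prior_h)
                * (prior_reliable if r else 1.0 - prior_reliable)
                * likelihood(h, r)
        for h in (True, False)
        for r in (True, False)
    }
    total = sum(joint.values())
    return Posterior(
        hypothesis=(joint[True, True] + joint[True, False]) / total,
        reliable=(joint[True, True] + joint[False, True]) / total,
    )


if __name__ == "__main__":
    # The same contradicting report, seen by readers with different priors.
    for prior_h in (0.5, 0.9, 0.99):
        post = update(prior_h, prior_reliable=0.7, report_supports_h=False)
        print(f"prior P(H)={prior_h:.2f} -> P(H)={post.hypothesis:.3f}, "
              f"P(reliable)={post.reliable:.3f}")
```

Under these assumed numbers, a reader with a neutral prior leaves the source's credibility unchanged, while readers with stronger priors in H lower it progressively after the same contradicting report. The calculation is only as sound as the prior fed into it: it says nothing about whether that prior was earned from evidence.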

This cognitive strategy is sometimes called knowledge projection, and what is interesting is that it is rational for a layperson to use it too, as long as their prior belief represents real knowledge and not just an unsupported desire for something to be true. What turns this situation into one of inappropriate myside bias is when a person projects, not a belief that prior evidence leads them to think is true, but a prior belief that the person wants to be true despite inadequate evidence that it is, in fact, true. Psychologist Robert Abelson terms the first type of belief a testable belief. The second type of belief is technically termed a distal belief. A less abstract term for a distal belief would be a conviction. The term conviction better conveys the fact that these types of beliefs are often accompanied by emotional commitment and ego preoccupation. They can sometimes derive from values or partisan stances. The problematic kinds of myside bias derive from people projecting convictions, rather than testable beliefs, onto new evidence that they receive. That is how we end up with a society that seemingly cannot agree on empirically demonstrable facts.

An example might help here. Imagine a psychology professor who is asked to evaluate the quality of a new study on the heritability of intelligence. Suppose the professor knows the evidence on the substantial heritability of intelligence but, because of an attraction to the blank-slate view of human nature, wishes that the evidence pointed the other way, and in fact wishes the heritability were zero. The question is, what is the prior belief that the professor should use to approach the new data? If the professor uses a prior belief that the heritability of intelligence is greater than zero and uses it to evaluate the credibility of new evidence, that would be the proper use of a prior belief. If instead the professor projected onto new evidence the prior belief that the heritability of intelligence equals zero, that would be an irrational display of myside bias, because it would be projecting a conviction: something that the professor wanted to be true, rather than a prior expectation based on evidence. Projecting convictions in this way is the kind of myside bias that leads to a failure of society to converge on the facts.

Beliefs as possessions and beliefs as memes

Most of us feel that beliefs are something that we choose to acquire, just like the rest of our possessions. In short, we tend to assume: (1) that we exercised agency in acquiring our beliefs, and (2) that they serve our interests. Under these assumptions, it seems to make sense to have a blanket policy of defending beliefs by having a myside bias. But there is another way to think about this—one that makes us a little more skeptical about our tendency to defend our beliefs, no matter what.

As I mentioned above, research has shown that people who display a high degree of myside bias in one domain do not tend to show a high degree of myside bias in a different domain—myside bias has little domain generality. However, different beliefs vary reliably in the degree of myside bias that they engender. In short, it might not be people who are characterized by more or less myside bias, but beliefs that differ in how strongly they are structured to repel ideas that contradict them. These facts about myside bias have profound implications because they invert the way we think about beliefs. Models that focus on the properties of acquired beliefs, such as memetic theory, provide better frameworks for the study of myside bias. The key question becomes not “How do people acquire beliefs?” (the tradition in social and cognitive psychology) but instead, “How do beliefs acquire people?”

To avoid the most troublesome kind of myside bias, we need to distance ourselves from our convictions, and it may help to conceive of our beliefs as memes that may well have interests of their own. We treat beliefs as possessions when we think that we have thought our way to these beliefs and that the beliefs are serving us. What Dan Dennett calls “the meme’s eye view” leads us to question both assumptions. Memes want to replicate whether they are good for us or not; and they don’t care how they get into a host—whether they get in through conscious thought or are simply an unconscious fit to innate psychological dispositions.

But how, then, do we acquire important beliefs (convictions) without reflection? In fact, there are plenty of examples in psychology where people acquire their declarative knowledge, behavioral proclivities, and decision-making styles from a combination of innate propensities and (largely unconscious) social learning. For example, in his book The Righteous Mind, Jonathan Haidt invokes just this model to explain moral beliefs and behavior.

The model that Haidt uses to explain the development of morality is easily applied to the case of myside bias. Myside-causing convictions often come from political ideologies: a set of beliefs about the proper order of society and how it can be achieved. Increasingly, theorists are modeling the development of political ideologies using the same model of innate propensities plus social learning that Haidt applied to the development of morality. For example, there are temperamental substrates that underlie a person’s ideological proclivities, and these temperamental substrates increasingly look like they are biologically based: measures of political ideology and values show considerable heritability; liberals and conservatives differ on two of the Big Five personality dimensions that are themselves substantially heritable; studies have found ideological position to be correlated with brain differences and neurochemical differences; and these differences in personality between liberals and conservatives seem to appear very early in life.

In short, the convictions that are driving your myside bias are in part caused by your biological makeup—not anything that you have thought through consciously. Of course, stressing that we didn’t think our way to our ideological propensities is dealing with only half of Haidt’s “innateness and social learning” formulation. However, for those of us who hold to the old folk psychology of belief (“I must have thought my way to my convictions because they mean so much to me”), the social learning part of Haidt’s formulation provides little help. Values and worldviews develop throughout early childhood, and the beliefs to which we as children are exposed are significantly controlled by parents, peers, and schools. Some of the memes to which a child is exposed are quickly acquired because they match the innate propensities discussed above. Others are acquired, perhaps more slowly, whether or not they match innate propensities, because they bond people to relatives and cherished groups.

In short, the convictions that determine your side when you think in a mysided fashion often don’t come from rational thought. People will feel less ownership of their beliefs when they realize that they did not consciously reason their way to them. When a conviction is held less like a possession, it is less likely to be projected onto new evidence inappropriately.

The myside blindness of cognitive elites

The “innate plus social learning” approach to understanding the convictions that drive our myside bias combines with the empirical trend I mentioned earlier (cognitive sophistication does not attenuate myside bias) in a particularly important way. It creates a form of blindness about our own myside bias that is particularly virulent among cognitive elites.

The bias blind spot is an important meta-bias demonstrated years ago in a paper by Emily Pronin and colleagues. They found that people thought that various psychological biases were much more prevalent in others than in themselves, a much-replicated finding. In two studies, my research group found positive correlations between the bias blind spot and cognitive sophistication: more cognitively skilled people were more prone to the bias blind spot. This makes some sense, however, because most cognitive biases in the heuristics and biases literature are negatively correlated with cognitive ability; that is, more intelligent people are less biased. Thus, it would make sense for intelligent people to think that they are less biased than others, because they are!

However, one particular bias—myside bias—sets a trap for the cognitively sophisticated. Regarding most biases, they are used to thinking—rightly—that they are less biased. However, myside thinking about your political beliefs represents an outlier bias where this is not true. This may lead to a particularly intense bias blind spot among certain cognitive elites. If you are a person of high intelligence, if you are highly educated, and if you are strongly committed to an ideological viewpoint, you will be highly likely to think you have thought your way to your viewpoint. And you will be even less likely than the average person to realize that you have derived your beliefs from the social groups you belong to and because they fit with your temperament and your innate psychological propensities. University faculty in the social sciences fit this bill perfectly. And the opening for a massive bias blind spot occurs when these same faculty think that they can objectively study, within the confines of an ideological monoculture, the characteristics of their ideological opponents.

The university professoriate is overwhelmingly liberal, an ideological imbalance demonstrated in numerous studies conducted over the last two decades. This imbalance is especially strong in university humanities departments, schools of education, and the social sciences; and it is specifically strong in psychology and the related disciplines of sociology and political science, the sources of many of the investigations studying cognitive differences among voters. Perhaps we shouldn’t worry about this, because it could be the case that the ideological position that characterizes most university faculty carries less myside bias. But this in-principle conjecture has not held up when tested empirically. In a recent paper, Peter Ditto and colleagues meta-analyzed 41 experimental studies of partisan differences in myside bias that involved over 12,000 subjects. After amalgamating all of these studies and comparing an overall metric of myside bias, Ditto and colleagues concluded that the degree of partisan bias in these studies was quite similar for liberals and conservatives. In short, there is no evidence that the particular type of ideological monoculture that characterizes the social sciences (left/liberal progressivism) is immune to myside bias.

This confluence of trends is a recipe for disaster when it comes to studying the psychology of political opponents. Nowhere has this been more apparent than in the relentless attempts to demonstrate that political opponents of left/liberal ideas are cognitively deficient in some manner. This has certainly characterized social science in the aftermath of the 2016 votes in the US and the UK. The assumption was that the psychologically defective and uninformed voters had endorsed disastrous outcomes that just happened to conflict with the views of hyper-educated university faculty. Regardless of how one views the outcomes of these votes, there is no strong evidence that the prevailing voters were any more psychologically impaired or ill-informed than were the voters on the losing side. And, as I show in The Bias That Divides Us, there are also no differences in rationality, intelligence, or knowledge separating people holding liberal ideologies from those on the conservative side.

An obesity epidemic of the mind

Anything that makes us more skeptical about our beliefs will tend to decrease the myside bias that we display (by preventing beliefs from turning into convictions). Understanding that your resident memes can make you fat in the same way that your genes can will help to cultivate skepticism about them. Organisms tend to be genetically defective if any new mutant allele is not a cooperator. This is why the other genes in the genome demand cooperation. The logic of memes is slightly different but parallel. Memes in a mutually supportive relationship within a memeplex would be likely to form a structure that prevented contradictory memes from gaining brain space. Memes that are easily assimilated and that reinforce resident memeplexes are taken on with great ease.

Social media have exploited this logic, with profound implications. We are now bombarded with information delivered by algorithms specifically constructed to present congenial memes that are easily assimilated. All of the congenial memes we collect then cohere into ideologies that tend to turn simple testable beliefs into convictions. In an earlier book, I described the parallel logic of how free markets come to serve the non-reflective first-order desires of both genes and memes.

Genetic mechanisms designed for survival in prehistoric times can be maladaptive in the modern day. Thus, our genetic mechanisms for storing and using fat, for example, evolved in times when doing this was essential for our survival. But these mechanisms no longer serve our survival needs in a modern technological society, where there is a McDonald’s on every other corner. The logic of markets will guarantee that exercising a preference for fat-laden fast food will invariably be convenient because such preferences are universal and cheap to satisfy. Markets accentuate the convenience of satisfying uncritiqued first-order preferences, and they will do exactly the same thing with our preferences for memes consistent with beliefs that we already have—make them cheap and easily attainable. For example, the business model of Fox News (targeting a niche meme-market) has spread to other media outlets on both the Right and the Left (e.g., CNN, Breitbart, the Huffington Post, the Daily Caller, the New York Times, the Washington Examiner). This trend has accelerated since the 2016 presidential election in the United States.

In short, just as we are gorging on fat-laden food that is not good for us because our bodies were built by genes with a selfish replicator survival logic, so we are gorging on memes that fit our resident beliefs because cultural replicators have a similar survival logic. And just as our overconsumption of fat-laden fast foods has led to an obesity epidemic, so our overconsumption of congenial memes has made us memetically obese as well. One set of replicators has led us to a medical crisis. The other set has led us to a crisis of the public communication commons whereby we cannot converge on the truth because we have too many convictions that drive myside bias. And we have too many mysided convictions because there is too much coherence to our belief networks due to self-replicating memeplexes rejecting memes unlike themselves.

The antidote to this obesity epidemic of the mind is to recognize that beliefs have their own interests, and for each of us to use this insight to put a little distance between our self and our beliefs. That distance might turn some of our convictions into testable beliefs. The fewer of our beliefs that are convictions, the less myside bias we are likely to display.

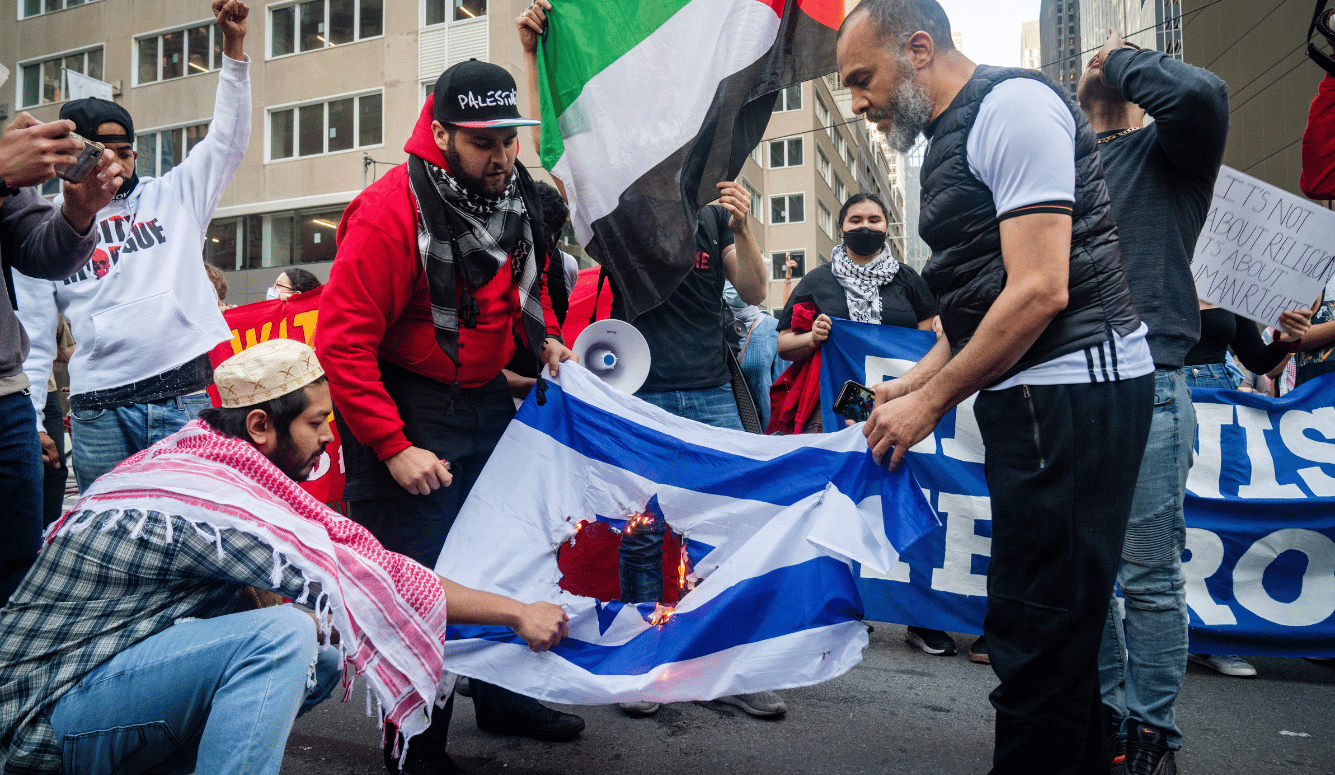

Myside bias and identity politics

If myside bias is the fire that has set ablaze the public communications commons in our society, then identity politics is the gasoline that is turning a containable fire into an epic conflagration. By encouraging people to view every issue through an identity lens, it creates the tendency to turn simple beliefs about testable propositions into full-blown convictions that are then projected onto new evidence. Although our identity is central to our narrative about ourselves, and many of our convictions will be centered around our identities, that doesn’t mean that every issue we encounter is relevant to our identities. Most of us know the difference, and do not always treat a simple testable proposition as if it represented a conviction. But identity politics encourages its adherents to see power relationships operating everywhere, and thus enlarge the class of opinions that are treated as convictions.

Identity politics advocates have succeeded in making certain research conclusions within the university verboten. They have made it very hard for any university professor (particularly the junior and untenured ones) to publish and publicly promote any conclusions that these advocates dislike. Faculty now self-censor on a range of topics. The identity politics ideologues have won the on-campus battle to suppress views that they do not like. But what these same politicized faculty members and students (and, increasingly, university administrators) cannot seem to see is that one cost of their victory is that they have made the public rightly skeptical about any conclusions that now come out of universities on charged topics. In the process of achieving their ideological dominance, they have neutered the university as a trusted purveyor of information about the topics in question.

University research on all of the charged topics where identity politics has predetermined the conclusions—immigration, racial profiling, gay marriage, income inequality, college admissions biases, sex differences, intelligence differences, and the list goes on—is simply not believable anymore by anyone cognizant of the pressures exerted by the ideological monoculture of the university. Whether or not some cultures promote human flourishing more than others; whether or not men and women have different interests and proclivities; whether or not culture affects poverty rates; whether or not intelligence is partially heritable; whether or not the gender wage gap is largely due to factors other than discrimination; whether or not race-based admissions policies have some unintended consequences; whether or not traditional masculinity is useful to society; whether or not crime rates vary between the races—these are all topics on which the modern university has dictated the conclusion before the results of any investigation are in.

The more the public comes to know that the universities have approved positions on certain topics, the more it quite rationally loses confidence in research that comes out of universities. As we all know from our college training in Popperian thinking, for research evidence to scientifically support a proposition, that proposition must itself be “falsifiable”—capable of being proven false. However, the public is increasingly aware that, in universities, for many issues related to identity politics, preferred conclusions are now dictated in advance and falsifying them in open inquiry is no longer allowed. We now have entire departments within the university (the so-called “grievance studies” departments) that are devoted to advocacy rather than inquiry. Anyone who entered those departments with a “falsifiability mindset” would be run out on a rail—which of course is why conclusions on specific propositions from such academic entities are scientifically worthless. University scholars serve to devalue data supporting conclusion A if they create a repressive atmosphere in which scholars are discouraged from arguing not-A, or pay too heavy a reputational price for presenting data in favor of proposition not-A. In their zeal to suppress proposition not-A, ideologically oriented faculty destroy the credibility of the university as a source of evidence in favor of A.

Of course, when this research makes its way into the general media, we have a doubling down on the lack of credibility. So, for example, a university professor describes research in the New York Times that leads to the conclusion that you should make your marriage “gayer.” Why? Because (wait for the drumroll) a university study found that gay marriages were less stressful and had less tension. The public is becoming more aware, however, that a heterosexual male researcher in a university who found that gay couples had more stress and tension than heterosexual couples would be ostracized. And the public is also becoming more aware that if, by some miracle, such a finding were to make its way through the review process of a journal in the social sciences, the New York Times would never choose it for a prominent summary article with the title “The Downside of Gay Marriages: More Stress and Tension,” whereas the actual article published (“Same-Sex Spouses Feel More Satisfied”) would be welcomed with open arms. The readers of the New York Times want to hear this conclusion, but not its converse. Both academia and the Times are simply serving their constituencies who are willing to pay for myside bias. Neither is a neutral arbiter of evidence on this particular topic, and the public increasingly knows this.

When the universities make it professionally difficult for academics to publish politically incorrect conclusions in one politically charged area, the public will come to suspect that the atmosphere in universities is skewing the evidence in other politically charged areas as well. When the public sees university faculty members urge sanctions against a colleague who writes an essay arguing that the promotion of bourgeois values could help poor people (the Amy Wax incident), then we shouldn’t be surprised when the same public becomes skeptical of research on income inequality conducted by university professors. When a professor compares the concepts of transracialism and transgenderism in an academic journal and dozens of colleagues sign an open letter demanding that the article be retracted (the Rebecca Tuvel incident), the public can hardly be blamed for being skeptical about university research on charged topics such as child rearing, marriage, and adoption. When university faculty members contribute to the internet mobbing of someone who discusses the evidence on differing interest profiles between the sexes (the James Damore incident), then we shouldn’t be surprised that the public is skeptical about research that comes out of universities regarding immigration. In short, we shouldn’t be surprised that only Democrats thoroughly trust university research anymore, and that independents, as well as Republicans, are much more skeptical.

The unique epistemic role of the university in our culture is to create conditions in which students can learn to bring arguments and evidence to a question, and to teach them not to project convictions derived from tribal loyalties onto the evaluation of evidence on testable questions. In contrast, identity politics entangles many testable propositions with identity-based convictions, transforming positions on policy-relevant facts into badges of group-based convictions. The rise of identity politics should have been recognized by university faculty as a threat to their ability to teach decontextualized argumentation. One of the most depressing social trends of the last couple of decades has been university faculty becoming proponents of a doctrine that attacks the heart of their intellectual mission.

When I talk to lay audiences about different types of cognitive processes, I use the example of broccoli and ice cream. Some cognitive processes are demanding but necessary. They are the broccoli. Other thinking tendencies come naturally to us and they are not cognitively demanding processes. They are the ice cream. In lectures, I point out that broccoli needs a cheerleader, but ice cream does not. This is why education rightly emphasizes the broccoli side of thinking—why it stresses the psychologically demanding types of thinking that people need encouragement to practice.

Perspective switching, for example, is a type of cognitive broccoli. Taking a person out of the comfort zone of their identities, or those of their tribes, was once seen as one of the key purposes of a university education. But when the university simply affirms students in identities they have assumed even before they have arrived on campus, then it is hard to see the value added by the university anymore. In fostering identity politics on their campuses, the universities are simply encouraging students to eat ice cream. No one needs to be taught to luxuriate in the safety of perspectives they have long held. It is something we will all naturally do. Instead, students need to be taught that, in the long run, myside processing will never lead them to a deep understanding of the world in which they live.