Stifling Free Speech Online: Australia’s Misinformation Bill

Every censorship regime in history has claimed to be protecting the public. But no regime can have prior knowledge of what is true or good. It can only know what the approved narratives are.


Keep reading

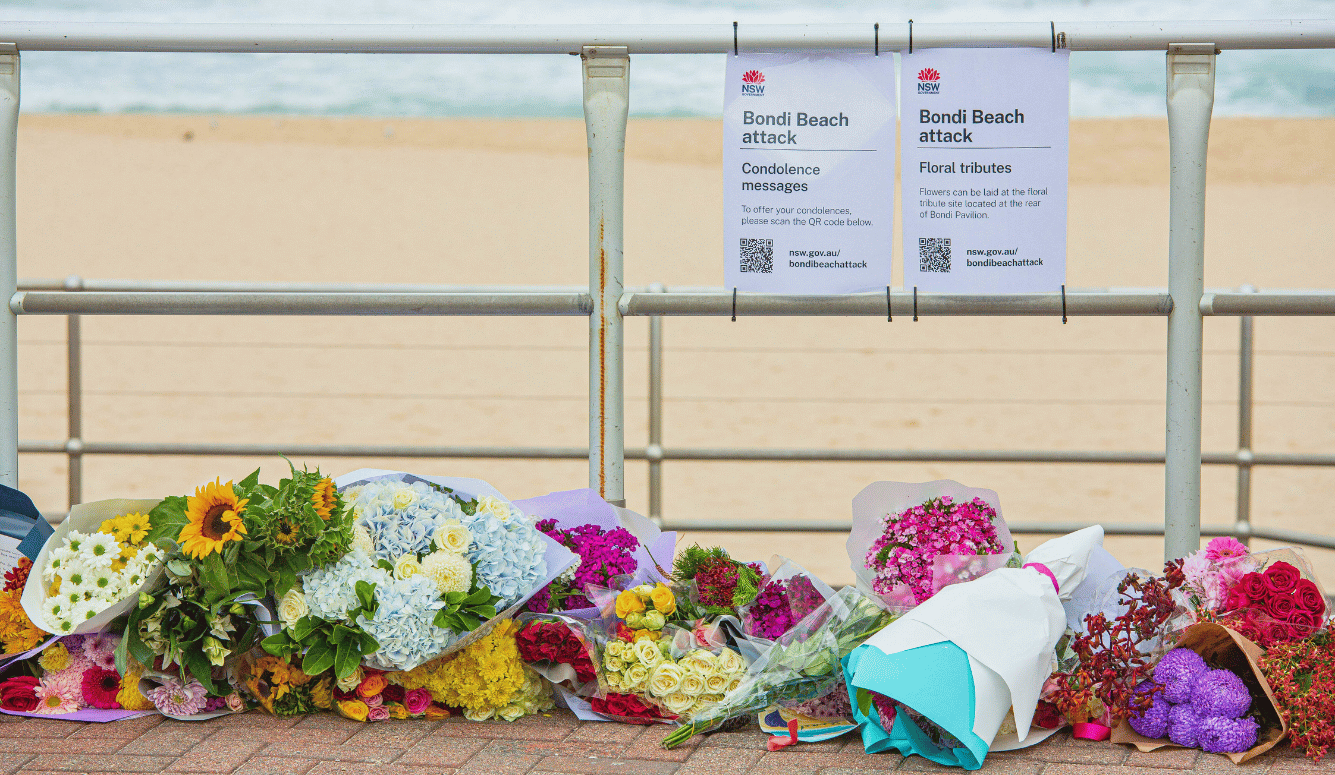

It’s My Party and I’ll Die If I Want To

Rosalind Arden

· 14 min read

Antifragility in Ukraine

Sergey Maidukov

· 7 min read

Richard Dawkins and the Claude Delusion

Sean Welsh

· 10 min read

Open Hiding

Izabella Tabarovsky

· 7 min read