
Who Should Fund Science?

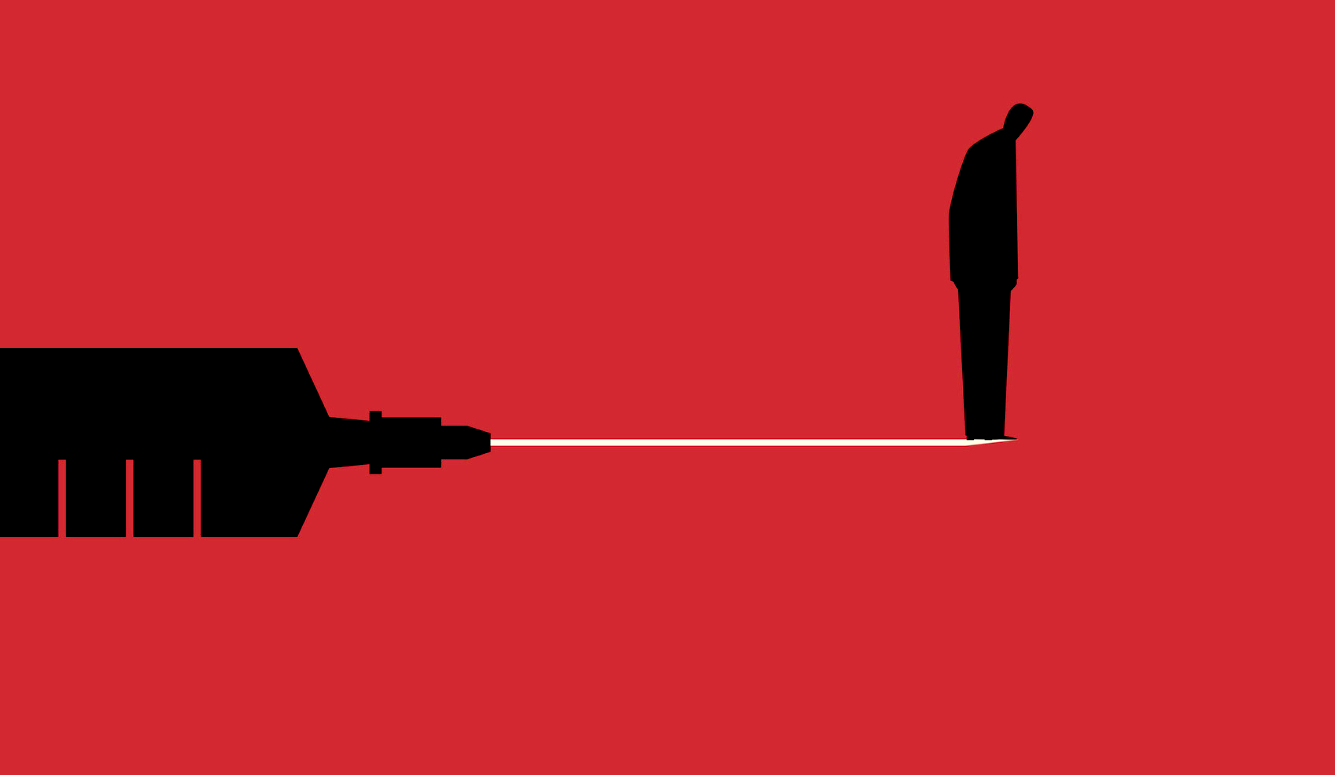

The notion that governments should fund science is built on falsehoods.

There appears to be near-unanimity of opinion that innovative discoveries require public funding of scientific research. A quote from the Lancet neatly summarizes this view: “When governments cut research funding, they are quite literally cutting their future discovery path and hence their future economic capabilities.” The Biden administration has followed suit by asking for a large increase in science and technology funding, which it describes as “the largest ever investment for federal R&D.” This is on top of previous budgetary requests for billions more dollars to fund the National Institutes of Health (NIH).

Cheerleaders for such an approach abound. Writers at Nature believe that it will keep the US “competitive.” A statement from the National Science Foundation (NSF) reads, “The budget makes critical, targeted investments in the American people that will promote greater prosperity and economic growth for decades to come.” Science magazine also welcomed the proposal; its only real criticism was that the proposal would struggle to gain bipartisan support.

State investment in science is certainly nothing new, but what is fascinating is how easily it is now accepted as the de facto means of spurring scientific innovation. Pew polling confirms that, while government funding does not necessarily inspire trust in science, the public is far less likely to trust scientific findings when funded through an industry source. Additionally, 82 percent of Americans believe government investment in scientific research is usually worthwhile.

Many Western governments failed to respond adequately to the COVID-19 pandemic, so it is understandable that policymakers want to invest heavily in scientific research. After all, should we not ask our state leaders to invest more than ever in innovation in case a worse threat arises? But when considered on the merits of its economic, philosophical, and historical arguments, the claim that governments should be responsible for funding scientific research turns out to be a counterproductive myth.

Investments, Growth, and Two Models

For much of the last century, two canonical writings have underpinned the argument for the state funding of science. The first is Science: The Endless Frontier, written by Vannevar Bush (then the head of the Office of Scientific Research and Development) at the close of the Second World War. Bush, who had watched vast amounts of government money flow into defense research during the war, did not wish to see these funds suddenly dry up. To preserve peacetime subsidies, he argued that basic research was the precursor to applied science, which in turn would produce economic growth. Assuming the market would fail to fund basic science on its own, Bush urged the state to continue doing so. The second work was Kenneth Arrow’s 1962 essay, “Economic Welfare and the Allocation of Resources for Invention.” Fearful that the launch of Sputnik meant that the Soviet Union was about to overtake the West in technological capability, Arrow proposed a theorem that supported the federal funding of scientific progress.

Bush’s work is described on its Princeton University Press page as the “landmark argument for the essential role of science in society and government’s responsibility to support scientific endeavors.” Arrow’s paper, meanwhile, has been cited thousands of times in support of state funding for science. Their arguments rest on two key assumptions: (1) the market will be insufficient for funding basic research because new ideas are too risky, and therefore, (2) the government must fund basic research because it is the precursor to economic growth. These assumptions rely on what Terence Kealey has described as the “Linear Model” of growth: Francis Bacon’s 17th-century idea that pure science leads to applied science, which in turn produces a stronger economy. But do such assumptions hold up under scrutiny?

In a 1989 paper titled “Why do firms do basic research (with their own money)?,” Nathan Rosenberg suggested that both large corporations and small biotechnology firms actually invest a fair amount in basic science. These contributions were often incidental, arising because firms were trying to solve industry-related problems. Rosenberg offered Bell Labs as an example: it funded basic research in astrophysics merely to improve satellite communication. Two scientists Bell Labs employed, Arno Penzias and Robert Wilson, happened to stumble upon the cosmic microwave background radiation as one source of interference with satellite signals, research that earned them a Nobel Prize. “Their finding,” Rosenberg remarks, “was about as basic as basic science can get, and it is in no way diminished by observing that the firm that employed them did so because they hoped to improve the quality of satellite transmission.” Rosenberg also suggests that basic research acts as a “ticket of admission” for engaging with other experts within the same scientific community. This might explain why the US business sector, which performed a growing majority of US R&D in 2017, was still spending an estimated 17 percent on basic research.

Of course, even if the private sector is incidentally performing basic science, one could still maintain that there is no clear economic incentive to perform this research and thus that public funding is still necessary for growth. In an earlier study titled “Basic Research and Productivity Increase in Manufacturing,” Edwin Mansfield showed why this argument is unpersuasive: the more companies invested in basic research, he found, the greater their profits were. Decades later, further research confirmed that, in more technology-focused industries, basic research makes a real contribution to productivity. Clearly, firms (especially those focused on technological innovation) are conducting plenty of basic research, and there are economic and social incentives to continue doing so.

At the national scale, the linear model and an emphasis on pure, basic science prove inadequate as a recipe for economic superiority. The most extreme example comes from the Soviet Union. Ironically, Arrow was commissioned to devise a theorem that would promote the government funding of science because of fears about advancing Soviet technology. Yet the following decades revealed the economic ruin and eventual collapse that central planning had brought. As a Stanford University paper notes, by the 1980s the Soviet Union had more scientists relative to its population than any other country and was highly competitive in math, physics, and computer science, and yet economic collapse followed.

Even if the Soviet example is excluded, the linear model for economic growth is still full of holes. Consider Britain’s commitment to the government funding of science under the Labour Party in the 1960s. Harold Wilson, who became Labour prime minister in 1964, famously stated that a “new Britain” would need to be created using the “white heat” of the “scientific revolution.” In setting up the Department of Economic Affairs and the Ministry of Technology, Wilson believed that government planning of scientific research would be the backbone of progress. However, Britain faced economic instability under Labour’s direction, and in 1976 it had to apply for a loan of nearly $4 billion from the International Monetary Fund. So much for the linear model.

A few extra nails can be hammered into the public-funding and linear-model coffins. The Department of Defense, one of the largest sources of R&D investment in the US, issued a report titled “Project Hindsight,” which sought to evaluate the effectiveness of post-World War II projects. Of the 700-odd “events” studied, only two were found to have been the result of basic science. Decades later, in 2003, the Organisation for Economic Co-operation and Development (OECD) issued a report on economic growth in OECD countries. Its conclusions noted that there were “high returns to growth from business sector R&D activities, in contrast to a lack of any positive effect from government R&D.” A 2007 report from the Bureau of Labor Statistics merely recapitulated what the OECD had already found: that returns to publicly funded R&D were next to nothing.

In summary, the linear model, as well as the notion that governments must fund basic research because private entities will not, is simply a falsehood. Arrow and Bush were wrong.

Who Selects Success and Failure?

If the argument for public funding fails on economic grounds, perhaps major leaps in innovation are the result of government investments not properly captured in economic models. If this were true, the historical record would vindicate major figures and discoveries operating through public-funding avenues. But once again, history tells a different tale.

In fact, the government often subsidizes failures and acts as a barrier to scientific innovation. Consider the story of Charles Babbage. His Encyclopaedia Britannica entry suggests that he was some sort of conceptual genius for inventing the “Difference Engine,” the forerunner of the calculator and the modern computer. The full tale, recounted by Terence Kealey in his 1995 book The Economic Laws of Scientific Research, is far more revealing about how smartly governments invest in technology. After Babbage first proposed the Difference Engine to the British government in 1823, the government went on to invest a generous £17,000 in his project. About a decade later, with only a small fraction of the device completed, he had already begun drafting the design of a new project, the “Analytical Engine.” Rightly concerned, the British government had withdrawn Babbage’s funding by 1842. Yet in 1830, while his government-funded engine sat unfinished, Babbage found time to publish a book under the not-especially-self-aware title Reflections on the Decline of Science in England, and on Some of Its Causes. Kealey notes that the book came out a year before one of the most consequential scientific discoveries in Britain: Michael Faraday’s discovery of electromagnetic induction.

Babbage, far from being an exception, is the rule when it comes to governments’ foresight in innovation. In How Innovation Works, Matt Ridley notes multiple instances of governments funding lemons while private funders or hobby scientists created the next true technological improvement. Aeronautics provides an obvious example. The Wright brothers are correctly credited with having first achieved sustained flight in a motorized airplane. The US government did not fund the Wrights; instead, it provided large funds to Samuel Langley, whose attempt at a motor-powered airplane was an utter failure. The British government did much the same when trying to build airships that could cross oceans in the early 20th century. While the privately built R100 airship made its flight to Canada and back without problems, Ridley describes the publicly funded R101 as having “got as far as northern France and crashed, killing forty-eight of the fifty-four people on board, including the [air] minister.”

The track record of failed state investments is not purely a Western phenomenon. Japan’s former Ministry of International Trade and Industry (MITI) followed the same pattern. A review of MITI’s role in industry notes that it “did not want Mitsubishi and Honda to build cars, and did not want Sony to purchase U.S. transistor technology.” Had business leaders heeded that advice, entire industries could have stalled, but Honda’s managers were not fond of listening to bureaucrats. Having been told by MITI that they could build only motorcycles and that they must avoid using the color red, Honda produced the S360 automobile (in red) for the 9th Tokyo Motor Show in 1962 as a “middle finger” to MITI. Such a blot would be bad enough on the organization’s record were it the only one. However, a multi-decade analysis of MITI’s industrial policies demonstrated that it disproportionately chose to invest in areas of the economy with low returns. It is telling that Japan, so often cited as a technological powerhouse, has one of the highest shares of R&D investment coming from the private sector in the world.

We find the same trend in India’s seed industry, now a highly profitable and renowned sector of agriculture. A historical review of seed production credits its growth to the “holy alliance between policy makers/administrators and the hard working farmers.” In reality, the private sector was responsible for massive growth after the seed market was liberalized in the 1980s. Publicly funded seed research focused on open-pollinated crop varieties, while the private sector invested in hybrid seeds and vegetable crops known to be more commercially viable, with higher yields. While it is true that both the private and public sectors contributed to the industry’s research and growth, this framing understates the importance of private research and production to its success. As the authors of a paper titled “Monopoly and Monoculture: Trends in Indian Seed Industry” point out, by the 1990s the state was buying 25 percent of its seeds from the private sector, so ongoing contributions from state-funded seed production cannot easily be disentangled from the commercial end. Another analysis clearly shows that while seed production grew linearly during the 25 years the public sector dominated, growth accelerated as private seed companies became more involved. The profit motive can, incidentally, lead to an altruistic result because it meets real, pragmatic demands from the citizenry.

These examples notwithstanding, concerns may still arise that, without some planned areas of funding, many scientific aspirations will be left out in the cold. Corporations may invest quite heavily in R&D, but what about the lone hobby scientist or academic without the means to test their ideas? It turns out that a rich history of philanthropy and private investment lies at the heart of many scientific breakthroughs. The Human Genome Project is often cited as a prime example of public funding in practice, but the technology that enabled it, Leroy Hood’s automatic DNA sequencer, was funded by Sol Price, the father of warehouse retail stores. Thomas Edison also received private investment for his research from the likes of J.P. Morgan after he had earned a reputation as a fiercely competitive entrepreneur. Philanthropic institutions such as the Rockefeller Foundation, the Wellcome Trust, the Carnegie Foundation, and the Bill and Melinda Gates Foundation have also contributed greatly to scientific funding. Despite common denunciations of Rockefeller as a “robber baron,” his foundation’s investments led to an understanding of how DNA functions as well as the ability to test and mass-produce penicillin.

It should go without saying that many investments simply do not work out, and this is not specific to the public sector. In 2020, before Ibram X. Kendi’s recent controversy, his Center for Antiracism Research was gifted $10 million by Jack Dorsey with no strings attached. Clearly, private investors can be moronic with their money. The difference is that when private investors act in this way, they may lose a massive sum with nothing to show for it but public embarrassment. When governments do it, they are wasting taxpayers’ money (the results of which are often hidden behind a science journal paywall).

So-Called “Vested Interests”

The inevitable fallback argument for public funding goes like this: whatever the flaws of government funding, from the slow pace of progress to the inordinate amount of time spent on grant proposals, these downsides are necessary to protect us from the conflicts of interest that arise with industry relations. But while it is well documented that business leaders will engage in foul play to maximize profits, public entities are also rife with vested interests, either to the detriment of technological innovation or, paradoxically, to the benefit of industry leaders looking to gain a monopoly.

Take the relationship between the US government and the agricultural industry. Agriculture was a major area of employment in the early 20th century, and the introduction of Checkoff programs allowed farmers to pool funds for research and information on agricultural commodities to boost sales. The programs began as a voluntary means of product promotion but grew into a form of income support for farmers in times of economic hardship. Over time, interest groups consolidated, and the Checkoff programs came to serve only a handful of the largest bodies in the farming industry. This hurt the smaller farmers who had paid into the system and made it harder for the market to follow basic supply and demand. In this manner, publicly funded research efforts can move far beyond their original purpose when governments inevitably mix with special interests.

In medicine, it is an understatement to say that the attitude toward industry research funding is hostile. Medical-journal editorials often take a puritanical view of how science should be performed and cite the alleged harm that private funding does to the process. This creates a bad-faith research environment in which any financial tie to industry is automatically viewed with suspicion. An analysis of over a hundred articles on physician-industry relationships found that nearly 90 percent were significantly biased toward the negative side of these relationships. Much of the evidence presented to support negative conclusions was speculative, and in some cases it was completely absent. The holier-than-thou approach to funding is puzzling when contrasted with the empirical evidence on the sources of drug innovation. A survey of some of the most transformational drug therapies of the past 25 years found that industry-funded research once again delivered much better returns than publicly funded inputs. The authors conclude:

Our analysis indicates that industry’s contributions to the R&D of innovative drugs go beyond development and marketing and include basic and applied science, discovery technologies, and manufacturing protocols, and that without private investment in the applied sciences there would be no return on public investment in basic science.

This has not stopped policymakers from cracking down on potential industry conflicts of interest for researchers and physicians. As the conflict-of-interest movement gained traction in the early 2000s, the NIH banned its employees from acting as industry consultants or holding significant stock in such companies. In 2010, the US passed the Physician Payments Sunshine Act, which required all industry payments to physicians and teaching hospitals to be disclosed to the Centers for Medicare and Medicaid Services. The benefits of these regulations are difficult to discern. A survey of NIH employees indicated that the regulations had hurt their perceived ability to carry out their mission, and a review of 106 research-misconduct cases found that 105 had occurred not in industry but in nonprofit research settings.

The narrow focus of policymakers on financial conflicts of interest is likely a result of these relationships being easier to point out and litigate than other motives, but that does not mean that other incentives do not exist. Vested interests that affect the type and amount of scientific output can be personal or ideological as well, perhaps more so when coupled with the aspirations of political figures. For instance, the Biden administration, clearly pandering to the left wing of its voter base, has committed a portion of its scientific budget to gender and racial equity in STEM fields. This initiative comes at a time when US women hold more graduate degrees than men overall, and major corporations are already tripping over themselves to promote any racial minority they can find into a prominent position.

Politicians love to appear as though they are making historic scientific investments because it grabs positive headlines in the short term, while the vested interests created in the long term go ignored. The Nixon administration invested $1.6 billion in cancer research through its National Cancer Act and was praised for it at the time. However, editorials now debate how beneficial the returns have been on such a massive investment in basic cancer research, even as the budget keeps growing. The George W. Bush administration later introduced the “American Competitiveness Initiative,” which called for $137 million in federal research and development, and even that was not enough to satisfy the Nature Genetics editorial board, which praised the funding but could not help asking for a more permanent investment. The Trump administration attempted to cut federal science budgets and earned the ongoing ire of a community used to seeing these funds spiral upward. (The melodrama was completely unfounded: even Vox conceded that NIH budgets grew under Trump.)

Advocating for publicly funded science is, in a way, asking for resources to be increasingly allocated to people who must prioritize their political goals over scientific innovation. Why this is not a central concern in the extensive literature on conflicts of interest is baffling, but if there is still suspicion that industry’s financial motives lead to the corruption of evidence, consider the following: one of the most consequential medical findings for reducing the risk of heart attack and stroke, the importance of lowering high blood pressure, was discovered by the life insurance industry.

Who Performs Science and Why

In A Theory of Justice, John Rawls introduced what he called the “Aristotelian Principle,” defined as “other things equal, human beings enjoy the exercise of their realized capacities (their innate or trained abilities), and this enjoyment increases the more the capacity is realized, or the greater its complexity.” Rawls believed that, in addition to inspiring others to realize their abilities, such an innate desire could contribute to societal stability.

The pervasiveness of the public-funding myth is a testament to how much people undervalue our innate Aristotelian curiosity as a key scientific ingredient. No contemporary figure exemplifies this better than Mariana Mazzucato. In her book The Entrepreneurial State, Mazzucato argues that economic and technological success in the US is the result of state investments (which she dubs “mission-oriented directionality”) rather than the “myth” of market-based innovation. Building on this thesis, Mazzucato’s follow-up book, The Value of Everything, argues for heavy taxation of businesses because they are capitalizing on products that are the result of previous investments (in her view, from the state) without reinvesting in society. Through state “extraction,” Mazzucato believes, we can then experience the true value of businesses and innovators.

Mazzucato’s arguments are wholly unconvincing in light of the major scientific advancements that arose without “directionality.” James Watt required no state direction to apply established practices he had learned to circumvent the inefficiencies of the steam engine (and although he worked in Glasgow, he was not an academic but a private contractor). Penzias and Wilson were trying to solve an industry problem for Bell Labs when they happened to discover the cosmic microwave background radiation. Alexander Fleming discovered penicillin by chance. The main breakthrough in gene editing came from the yogurt industry. The list could go on. Even Mazzucato’s examples of successful state intervention are highly misleading. She cites railways as a good example of a major innovation through public investment, but as Ridley points out, “the British and global railway boom of the 1840s was an entirely private-sector phenomenon, notoriously so: fortunes were made and lost in bubbles and crashes.”

More often, it is people trying to solve practical problems, rather than directions given by a prophetic group of bureaucrats, who drive scientific innovation. When observing factory floor workers, Adam Smith understood how important such first-hand experience was to technological progress. Noting the “ingenuity of the makers of the machines,” Smith was impressed by the adjustments that the people operating machinery made to improve its efficiency. He also saw the clear difference between the market-based (Glasgow) and public (Oxford) universities where he spent time. Whereas Smith was impressed by the scholarship he encountered at Glasgow, he was disappointed by the idleness of Oxford professors who enjoyed good salaries but seemed to have no incentive to teach their students. Public investments, even those made to presumably altruistic scientists, should be viewed with suspicion according to Smith: “I have never known much good done by those who affected to trade for the public good.” He would no doubt be confused by the various publications that read as if scientific funding were some moral responsibility to be adjudicated by the state.

Smith might also be confused by the constant calls to address underfunded PhD pursuits. Contrary to popular belief, the West has a surplus of scientists, and government funding distorts the market for their talent. A Boston University article notes that most postdoctoral researchers are supported by NIH grants, and when these funds contracted, the “scarcity of federal resources had left too many bright, highly trained postdocs competing for too few faculty positions.” This should come as no surprise to anyone who applies basic economics to scientific funding. Saturating the market with PhDs may fill universities with underpaid workers doing basic science, but it does little to spur Aristotelian curiosity. A 2015 survey of scientists found that they generally agreed that the “publish or perish” university model, along with the constant need to secure and compete for funding, was not only undermining innovative research but also likely encouraging less trustworthy research methods. Unsurprisingly, some have proposed cutting PhD program enrollment as a solution.

The emergence of history’s most notable scientific achievers can be predicted not by the state’s commitment to funding PhDs, but by how much ambient freedom is present to allow their efforts. Charles Murray compiled a vast compendium of significant figures in the arts and sciences worldwide for his book Human Accomplishment. He found that a political framework of de facto freedom and a city that operated as a political or financial center were among the strongest predictors of the emergence of renowned figures. Unsurprisingly, the number of figures with significant scientific accomplishments in totalitarian states was dwarfed by the number in the US and Europe. The democratic framework and the open exchange of goods and ideas were what allowed such historical giants to realize and explore their innate potential. Consistent with Murray’s analysis, Thales of Miletus, considered by some to be the first scientist, lived in Miletus at a time when it was a thriving commercial hub. Long before the myth of public funding took hold, scientific thinkers were thriving and better served by an open market.

Conclusions

The vision of scientists freed by a generous state from any worry about funding may be an alluring picture, but like any other utopian vision, it is simply not a good reflection of the actual road to discovery. The historical record is riddled with episodes of happenstance, philanthropy, and private investment all contributing to scientific progress regardless of any so-called conflicts of interest. Contrary to the claims made by people like Arrow and Bush, the empirical evidence points not to a linear model of growth driven by basic research, but to growth driven by applied science and the private entities that support it.

Nevertheless, Western governments appear to have been rattled into renewing public funding for science. This is understandable given that many public-health agencies failed to respond effectively to a highly transmissible virus. But in some ways, the wrong lessons have been learned. While governments can, of course, make some amazing scientific advancements (a man walking on the moon comes to mind), the pragmatic concerns of any nation are better served by industry. At a time when we need innovative technologies to combat whatever future problems may arise, it is the liberalization of the scientific market that is needed most to allow the West to embrace the Aristotelian Principle.