Six Great Ideas from Adam Grant's 'Think Again'

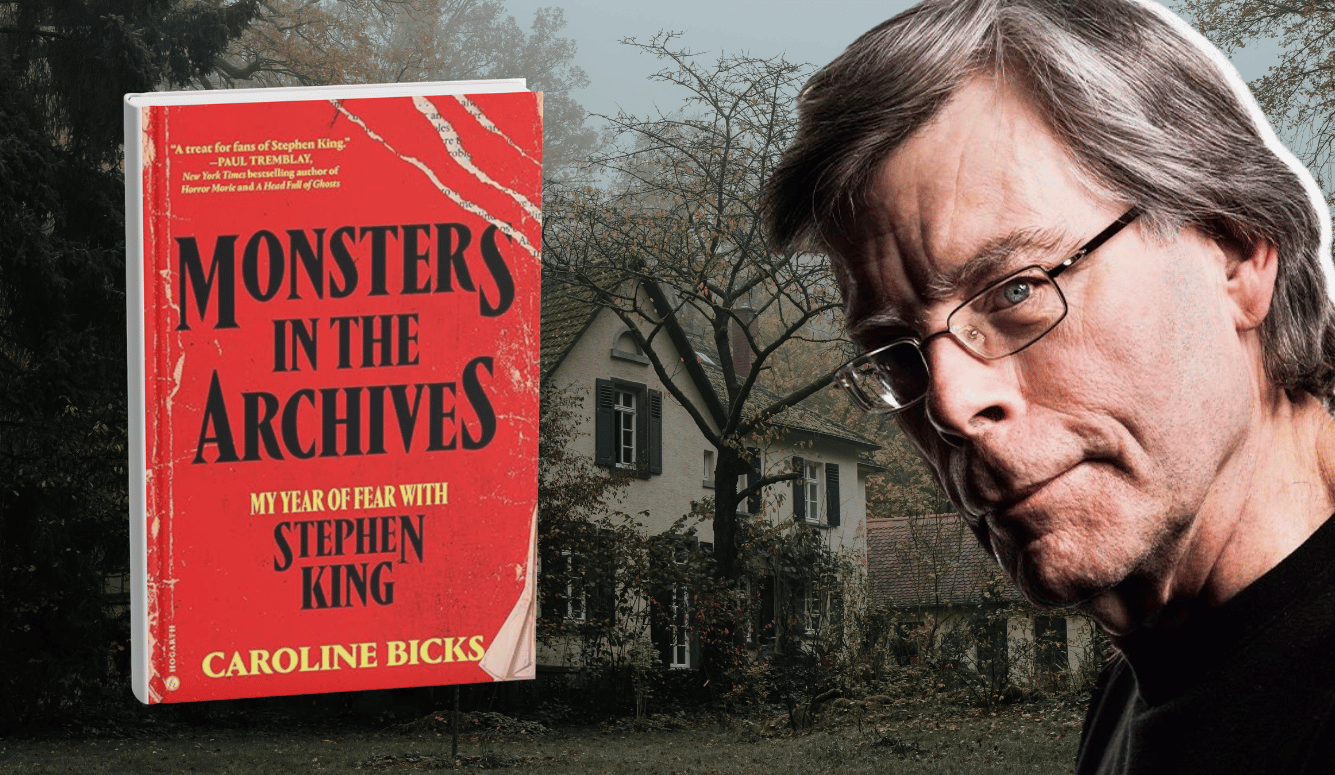

A review of Think Again: The Power of Knowing What You Don’t Know by Adam Grant. Viking Press, 320 pages (February 2021).

Of everything a person must maintain, his mind is most important, and king of all is his connection to reality. Yet, our tether to the world can be tenuous. Our ideas, particularly our most fundamental ones, help us make sense of the world. But if they’re wrong, they completely shift the image, like a turn of the kaleidoscope.

Nature is no child’s toy, though, and to be commanded, it must be obeyed. So, as much as we hate to feel the ground move beneath our feet, we have real incentives to get things right, even if that means upending ideas we’ve held for decades. And, according to Wharton professor Adam Grant, the trait of regularly rethinking one’s beliefs, big and small, is what puts the best thinkers a cut above the rest. In fact, a propensity toward frequent and flexible rethinking in the face of new evidence may even outstrip IQ as an indicator of one’s intellectual trajectory. That’s fortunate, because rethinking and recognizing what we don’t know are skills we can cultivate. Here, from Grant’s Think Again, are some great ideas on how and why to do so.

Recognize the problem

“You must not fool yourself—and you are the easiest person to fool,” said Richard Feynman, Nobel-winning physicist. Laureates of another kind—David Dunning and Justin Kruger, winners of the 2000 Ig Nobel prize—backed this truism with data, showing that we are particularly prone to fooling ourselves when we know just enough to be dangerous, which partly explains why mortality rates spike every summer as fresh residents take up the mantle of medicine.

Grant echoes Phil Tetlock’s claim that overconfidence manifests in our tendency to emulate the behavior of preachers, prosecutors, and politicians: We preach the virtues of our ideas without seeking meaningfully to address conflicting views; we prosecute opposing views with a greater focus on winning than truth; and we politick for support among our constituents instead of engaging in thoughtful dialogue. We might even fall for the “I’m-not-biased” bias, giving ourselves more credit for objectivity than our thinking habits deserve. Realizing, in our cooler moments, that we’re more fallible than we sometimes think opens the growth loop, propelling us from doubt, to curiosity, to investigation, to new learning.

Think more like a scientist

I can’t tell you how much I admire Jason Crawford, creator of the “Roots of Progress” project. When he set out to tell “the story of human progress—and how we can keep it going,” he set aside his prior beliefs to completely re-examine the evidence. He recently posted a quote from a hero of medicine, Louis Pasteur, that captures that scientific spirit:

Keep your early enthusiasm, dear collaborators, but let it ever be regulated by rigorous examinations and tests. Never advance anything which cannot be proved in a simple and decisive fashion.

Worship the spirit of criticism. If reduced to itself, it is not an awakener of ideas or a stimulant to great things, but, without it, everything is fallible; it always has the last word. What I am now asking you, and you will ask of your pupils later on, is what is most difficult to an inventor.

It is indeed a hard task, when you believe you have found an important scientific fact and are feverishly anxious to publish it, to constrain yourself for days, weeks, years sometimes to fight with yourself, to try and ruin your own experiments and only to proclaim your discovery after having exhausted all contrary hypotheses.

But when, after so many efforts, you have at last arrived at a certainty, your joy is one of the greatest which can be felt by a human soul, and the thought that you will have contributed to the honor of your country renders that joy still deeper.1

We can think less like preachers, prosecutors, and politicians, and more like scientists, says Grant, by regularly asking ourselves of any meaty conclusion: What evidence would convince me otherwise? This focuses our own inner debates on causal connections instead of prior commitments, on facts instead of feelings.

If there is a feeling with which we should concern ourselves, it’s what Grant calls “the joy of being wrong”: the recognition that every false belief we overturn makes our mental maps of the world that much more accurate. We turn what Leonard Peikoff, discussing proper thinking, called “red lights,” which won’t sit squarely with new evidence, into “green lights,” clearing the road for new learning.

We can set ourselves up for more such joys by developing the trait of confident humility: confidence in our abilities alongside active questioning about whether we’ve adopted the right strategy or are even tackling the right projects. Those oh-so-wise Greeks called hubris a sin, and perhaps we can better understand why by grasping, as Grant points out, that humility and its cognates derive from a root meaning “close to the ground.” Humility, he says, is not about chucking our self-confidence, but about ensuring that our thinking is well grounded.

Persuade by listening

Is there anyone who

Ever remembers changing their mind from

The paint on a sign

Is there anyone who really recalls

Ever breaking rank at all

For something someone yelled real loud one time

~“Belief,” John Mayer

If Think Again were a song, it would probably be “Belief” by John Mayer, not just because both are punchy and poignant, but because both reflect on the destructive nature of strongly held but bad ideas and the flat-footed ways in which people often attempt to persuade others. We should take a lesson, says Grant, from people such as Daryl Davis, the black musician who has helped hundreds leave the Ku Klux Klan, and Arnaud Gagneur, the “vaccine whisperer” whose motivational interviewing methods have been used to help thousands of parents see that the MMR and other common vaccines are safe for their kids.

Davis has said that he’s never persuaded anyone. This might be a touch too modest, but his point is that he doesn’t do what Grant admits to having long done; he doesn’t “logic bully” others into corners like a modern Socrates or George Berkeley. He approaches fraught conversations not like a war, but like a dance, emphasizing common ground, listening reflectively, and encouraging others to pause on their own doubts. After all, logic bullying doesn’t work. This was the conclusion, anyway, of the greatest and most consequential ambassador in American history, Benjamin Franklin:

[A]s the chief ends of conversation are to inform or to be informed, to please or to persuade, I wish well-meaning, sensible men would not lessen their power of doing good by a positive, assuming manner, that seldom fails to disgust, tends to create opposition, and to defeat every one of those purposes for which speech was given to us.2

We can be more effective by helping others see the cracks in their own overconfidence and pointing them to the sledgehammer than by attempting the demolition ourselves. An eloquent demonstration of this (though Grant doesn’t discuss it) is the difference between Davis and the vigilantes within the BLM movement, a difference Davis captured in his 2016 documentary, Accidental Courtesy (highly recommended). As with the story of the vaccine whisperer, it shows that you cannot help people change their own minds by trying to deny their autonomy.

Research also suggests that trying to “put yourself in someone else’s shoes” by simply imagining what life is like for others doesn’t break down prejudices or change minds but can actually increase resistance. “Across twenty-five experiments,” Grant writes, “imagining other people’s perspectives failed to elicit more accurate insights—and occasionally made participants more confident of their own inaccurate judgments.” What a shocker—we can’t imagine what we don’t know. If we want to persuade others, we have to understand them, and if we want to understand them, we have to talk to them.

The greater the distance between us and an adversary, the more likely we are to oversimplify their actual motives and invent explanations that stray far from their reality. What works is not perspective-taking but perspective-seeking: actually talking to people to gain insight into the nuances of their views. That’s what good scientists do: instead of drawing conclusions about people based on minimal clues, they test their hypotheses by striking up conversations.

So, too, thought the great Enlightenment thinker and student of John Locke, Shaftesbury, who was fond of pointing out that the truly liberal state (or polis) leaves men free to “polish” one another through conversation and trade, giving rise to polite, civil society.

All Politeness is owing to Liberty. We polish one another, and rub off our Corners and rough Sides by a sort of amicable Collision. To restrain this, is inevitably to bring a Rust upon Mens Understandings. ’Tis a destroying of Civility, Good Breeding and even Charity it-self, under pretence of maintaining it.3

Acknowledge complexity

Westerners have been reading the works of Aristotle for more than 2,000 years, and his ideas even animated the Islamic Golden Age. Despite the fact that what we have are but dry lecture notes, not works Aristotle actually published, they remain a staple of the persuasive arts, and for good reason. Not only did Aristotle’s book on persuasive speaking, Rhetoric, anticipate many of the lessons on persuasion in Think Again, his corpus seems implicitly to commend another of the book’s ideas.

Read a modern political platform or newspaper op-ed and you will likely see what psychologists call “binary bias,” the tendency to abruptly reduce complex debates to two positions. Pick up the Nicomachean Ethics, on the other hand, and you will see Aristotle laying out a whole range of views on various topics. Aristotle acknowledged complexity, and that signals to readers that he was paying attention, that he was looking not just for ideas confirming his own views or convenient foils to them, but for all evidence and contrary opinions. He was in touch with the full range of views—not a mere cherry-picked subset—and he often explained those views clearly alongside his own.

Far from reducing one’s credibility or persuasiveness, acknowledging complexity can attract readers, especially when it’s clear you’ve considered the ideas they actually hold and not some straw-man version of them. “A dose of complexity can disrupt overconfidence cycles and spur rethinking cycles,” says Grant. “It gives us more humility about our knowledge and more doubts about our opinions, and it can make us curious enough to discover information we were lacking.” As John Stuart Mill put it, he who seeks “objections and difficulties, instead of avoiding them, and has shut out no light which can be thrown upon the subject … has a right to think his judgment better than that of any person, or any multitude, who have not gone through a similar process.”4 Could there be a better proof of this than the lasting legacy of Aristotle, known for centuries simply as “the philosopher”?

Find challengers, not followers

Orville and Wilbur Wright were so close they said they “thought together,” but as Grant points out, you could as aptly say they fought together. The brothers enjoyed “scrapping” about ideas, but as heated as their debates got, they never came to blows. That’s because, although they challenged each other to think long and hard about their respective ideas, they didn’t make things personal. They engaged in what psychologists call “task conflict,” not relationship conflict. They prodded one another to think and work smarter and harder, and their results speak for themselves.

Although we’re not all fortunate enough to be born into what Grant calls a “challenge network,” we can and ought to build our own if we want to do extraordinary things. This worked for Brad Bird when he was spearheading projects at Pixar, where even some of the world’s most farsighted and creative executives balked at one of his first proposals. To prove them wrong and get the project done, Bird didn’t go looking for yes-men. He recruited Pixar’s misfits, the disagreeable people he knew would call him on his bad ideas. Their passionate sparring led to The Incredibles, which went on to win the Oscar for best animated feature, grossing more than $631 million. “We learn more from people who challenge our thought process than those who affirm our conclusions,” writes Grant. “Strong leaders engage their critics and make themselves stronger. Weak leaders silence their critics and make themselves weaker.”

It’s easy and comfortable to team up with those who will follow us off any cliff. But to do big things, we need to assemble a challenge network of independent-minded value-creators who will push us to rethink our pet projects and ideas—without undermining morale and cooperation by flinging personal slights.

Lead by transparency

Zooming out, how do we build agile organizations where the rethinking habit and the scientific mindset are baked into the DNA? How, especially, can those leading massive companies do this when they can’t possibly interact with every contributor?

When asked to consult on this topic by the Gates Foundation, Grant ran an experiment to test the standard advice: getting managers to model behavior and ask their teams for feedback on how to improve. Afterward, employees reported greater psychological safety, a marker of willingness to take risks and try new things, but this didn’t last. In another group in the experiment, Grant advised managers “to tell their teams about a time when they benefited from constructive criticism and to identify the areas that they were working to improve now.” A year later, these teams were still reaping benefits.

By admitting some of their imperfections out loud, managers demonstrated that they could take it—and made a public commitment to remain open to feedback. They normalized vulnerability, making their teams more comfortable opening up about their own struggles. Their employees gave more useful feedback because they knew their managers were working to grow. That motivated managers to create practices to keep the door open: they started holding “ask me anything” coffee chats, opening weekly one-on-one meetings by asking for constructive criticism, and setting up monthly team sessions where everyone shared their development goals and progress.

Grant also counsels leaders to throw out “best practices,” which demand conformity. Instead, hold teams accountable, not just for outcomes, but for the quality of thinking that produces them. “Along with outcome accountability, we can create process accountability by evaluating how carefully different options are considered,” he writes. “Research shows that when we have to explain the procedures behind our decisions in real time, we think more critically and process the possibilities more thoroughly.”

Telling the story of NASA’s safety woes and its responses to them, he shows why a positive outcome, such as a successful launch, is mere luck when it is not the product of thorough vetting, where everyone on the team is free (and encouraged) to raise red flags and initiate the process of rethinking and investigation. On the other hand, when a carefully considered approach doesn’t lead to the desired outcome, it’s not a failure but a thoughtful experiment. “I have not failed,” said Thomas Edison. “I’ve just found 10,000 ways that won’t work.”

Although the product of much data, Grant’s book is so chock-full of stories that its 257 pages fly by like an intellectual roller coaster. Those wanting to nerd out on the details of this or that study may consult the notes at the back of the book; such analysis doesn’t weigh down the main text.

Grant himself acknowledges his book’s biggest flaw. It provides few clues about where rethinking a given issue ought to end. Certainly, we need not endlessly rethink such things as how to evaluate Nazis or the institution of slavery. No doubt, rethinking often is valuable, but without a contrasting discussion on the natures of certainty and arbitrary doubt, we can’t say on principle when to leave the jury out or call for a verdict.

Certainty, of course, is complex, contextual, and hotly debated—of that we can be certain. Although it might be a topic more appropriate for philosophers of science than organizational psychologists, Grant’s contributions to that discussion likely would be interesting and fruitful. And such work might help him think again—and more deeply—about thinking again.

Nonetheless, calls for confident humility and scientific methodology are of perennial importance, and Grant’s book makes for fascinating, thought-provoking reading. Pick it up, and begin leveraging the power of knowing what you don’t know.

References

1 William Safire, Lend Me Your Ears: Great Speeches in History, updated and expanded (New York: W. W. Norton & Company, 2004), 536.

2 Benjamin Franklin, Writings (New York: Library of America, 1987), 1322.

3 Michael B. Gill, “Lord Shaftesbury,” Stanford Encyclopedia of Philosophy, updated September 9th, 2016, https://plato.stanford.edu/entries/shaftesbury/.

4 John Stuart Mill, On Liberty, chapter two, “Of the Liberty of Thought and Discussion,” h struct/phl201/modules/Philosophers/Mill/mill_on_liberty.html (accessed May 21st, 2021).