
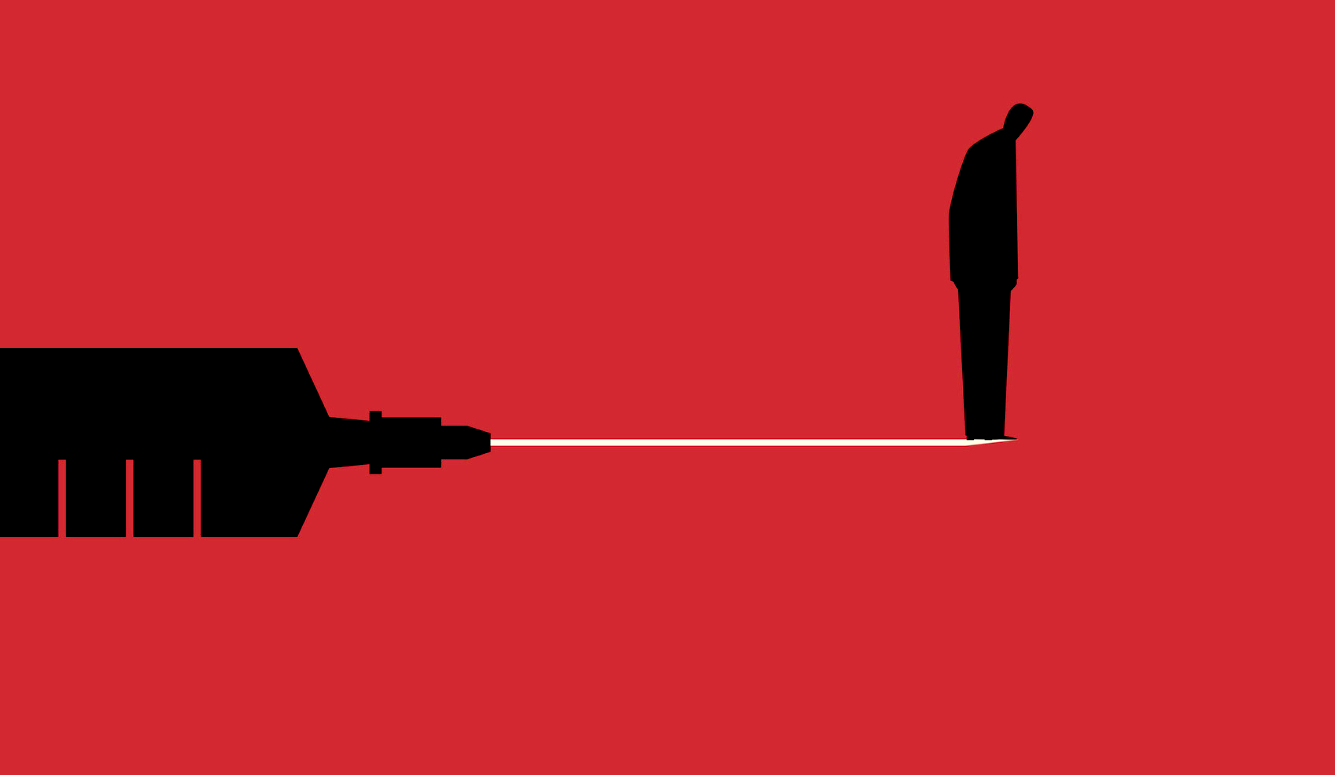

The Moral Panic Behind Internet Regulation


This is a contribution to “Who Controls the Platform?”—a multi-part Quillette series. Submissions related to this series may be directed to [email protected].

In the present era of growing polarization, one thing that people from across the political spectrum now agree on is their dislike of Big Tech. The political Left complains that Facebook, Google, Twitter, and Amazon have become “monopolies.” It also blames global technology platforms for Brexit, the rise of Donald Trump, and white nationalism. It is much easier, after all, to blame online manipulation for the downfall of the center-left than to acknowledge the disconnect between the intelligentsia and the working-class voters the Left once represented.

Meanwhile, critics on the Right blame Big Tech for a comparable shopping list of evils, including being biased against conservatives, giving a platform to terrorists, enabling pedophiles to groom children and distribute indecent images, and boosting populist figures on the Left and Right who threaten the center-right’s own electoral base. Particularly in the U.K., this is mixed with the traditional conservative refrain of “Please, won’t someone think of the children?”, children who are now supposedly endangered by excessive screen time and addiction to “likes” and multiplayer games.

A Moral Panic

We are squarely in the middle of a moral panic. Moral panics, according to social scientist Stanley Cohen’s classic formulation, proceed in five stages. In the first stage, a phenomenon is defined as a “threat to societal values and interests.” Alcohol, satanic cults, and youth culture have all been objects of popular opprobrium. In the second stage, the issue is “presented in a stylized and stereotypical fashion by the mass media,” and then in the third stage, “the moral barricades are manned by editors, bishops, politicians and other right-thinking people.” The fourth stage, which is where we are now with the internet and social media, is when “socially accredited experts pronounce their diagnoses and solutions.” In the final stage the issue “disappears, submerges or deteriorates.”

The fourth stage is the most dangerous, because that is when the power of the state is most likely to be used to cure the alleged social malady, often in a shortsighted, extreme and illiberal manner. It is the stage when alcohol is banned, jail sentences are increased, and innocent people are victimized. It is difficult to make good public policy in the midst of a moral panic.

Many Quillette contributors have criticized Big Tech for becoming censorious toward legal but controversial speech, and for being more likely to ban or shadow ban users, including some feminists, who challenge the orthodoxies of the intersectional Left. This clampdown, however, does not exist in a vacuum. In recent years, technology companies have faced the threat of state regulation, and in some cases its implementation, requiring them to enforce tough “terms of service,” with the state able to impose harsh penalties if they fail to comply. In recent months, the Canadian Government has called for “digital platform companies” to “redouble their efforts to combat… social harms,” and said these companies “should expect public regulation…if they fail to protect the public interest.”

This rhetoric helps explain why, for example, Facebook chose in recent weeks to remove all white nationalist content, a move that culminated in the banning of accounts associated with the British National Party, the English Defence League, and the National Front, all of which have been fixtures on the fringes of British politics for years. Twitter has banned several right-wing candidates standing in the U.K. in the forthcoming European Parliament elections, including Carl Benjamin and Tommy Robinson.

The EU, and in particular the U.K., has the dubious distinction of leading the democratic world in internet censorship. In 2016, the EU presented the Code of Conduct on Countering Illegal Hate Speech Online to Facebook, Microsoft, Twitter, and YouTube. This code, developed by officials employed by the European Commission, an unelected body, came with the threat of legislation if the companies didn’t “voluntarily” sign up. It requires them to review and remove so-called “illegal hate speech” within 24 hours, which helps explain why these companies have become more aggressive at policing their own users.

This was swiftly followed by Germany’s Netzwerkdurchsetzungsgesetz (NetzDG) law, introduced in 2017, which requires large social media platforms to remove illegal content within 24 hours or face fines of up to €50 million. Inevitably, such laws rely on inconsistent and subjective definitions of what constitutes “hateful” or “offensive” speech, and social media companies face considerable difficulty in understanding which content they’re expected to moderate out of existence and which is permitted. Not surprisingly, given the potential fines involved, they have erred on the side of caution. The German law has led to right-wing politicians having their accounts removed from Twitter and to the suspension of an anti-racist German satirical magazine. A broad left-right coalition, including the Free Democratic Party (FDP), the Greens, and the Left Party, has called for the law to be replaced.

In September of last year, the European Commission introduced the Code of Practice on Disinformation, another “self-regulatory” regime that puts pressure on tech companies to remove content ahead of the EU’s upcoming elections. Given the difficulty of distinguishing “fake news” from genuine political commentary, this has the potential to undermine free speech. Ironically, the EU has now complained that Facebook responded to the code by banning cross-border advertising, which is perfectly legal in EU elections.

The European Parliament has also passed the updated Copyright Directive, which could lead to the blocking of swaths of content of unknown origin for European users, and is currently considering new regulations designed to ban terrorist content. In recent months, Australia has rushed through laws to compel tech companies to provide access to encrypted messages and, following the Christchurch terrorist attack, to jail tech company executives who fail to remove terrorist content or depictions of murder or rape.

However, the harshest and broadest online speech regulations in any Western democracy are set to be introduced by the Government of the U.K.

The U.K. Leading the Democratic World in Online Censorship

“Because, as a society, one of our greatest responsibilities lies in keeping our children safe,” declared the British Home Secretary, Sajid Javid, earlier this month, announcing the launch of the Online Harms white paper. (In the U.K., a white paper is a formal statement of government policy prior to legislation.) Stanley Cohen would have struggled to find a more perfect exemplar of the rhetoric of a moral panic. Javid claims that the internet is a “hunting ground for monsters” who want to victimize children, groom terrorists, and display “depraved” material. Where some people see technologies that build communities of shared interests, democratize access to information, and create millions of jobs, Javid sees danger everywhere—“in games, apps, chat rooms and more.” He announced that tech companies have “failed to take responsibility” and declared: “I warned you. And you did not do enough. So it’s no longer a matter of choice.”

While the increasing clamor for the regulation of digital platform companies may be an international phenomenon, it has been amplified by Britain’s peculiar political context. The U.K. Conservative Government, led by Theresa May, is currently failing to deliver on the central plank of the Tory manifesto, Brexit, and lacks any other meaningful domestic policy agenda. Meanwhile, the British political media is obsessed with the jostling within the Conservative Party over who will become the next prime minister when May finally resigns, which is expected to happen later this year. Notably, Javid only responded to criticism of the proposals when it was put in the context of his well-known leadership ambitions. With a poll last year finding that four in five members of the British public have concerns about going online, Big Tech has become a politically convenient punching bag for these ambitious Tory politicians.

The press release announcing the Online Harms white paper is titled: “U.K. to introduce world first online safety laws.” Apparently, the British Government wants to lead the democratic world when it comes to online censorship, proudly trying to outdo China, Saudi Arabia, Turkey, and Russia. This is all coming from a center-right Government that supposedly believes in free speech (but has a questionable track record in this regard). The white paper seeks to make the U.K. the “safest” place in the world to use the internet by imposing a “duty of care” on technology companies to remove “harmful” content, yet it fails to provide precise definitions of these terms. This “duty” is to be enforced by a powerful new regulator. If company executives fail to uphold these as-yet-undefined standards, they could face hefty fines or jail time, and their companies could lose access to web hosting and payment services or, worse, be blocked. In sum, the U.K. Government wants technology companies to be responsible for enforcing an expansive state censorship regime.

In Scope: the Whole Internet

The “duty of care” will apply not just to the likes of YouTube, Facebook, and Twitter but to any organization “that allows users to share or discover user-generated content or interact with each other online.” This includes file hosting sites, public discussion forums, retailers with customer reviews, non-profit organizations with comments on their blogs, messaging services, and search engines. It will even include private group conversations of a yet-to-be-defined size. Notably, the white paper says nothing about how law enforcement will be properly resourced to fight the criminals engaging in illegal activity online. The focus is squarely on legal sites, as if they are morally culpable for the unlawful actions of others. This is unlikely to cause Facebook any problems, since it has the resources to hire moderators, develop algorithms, and build relationships with regulators to meet these demands. It could, however, be catastrophic for smaller companies, particularly start-ups, which could end up crippled by the compliance costs. The result could be to entrench the market dominance of the very technology giants the policy primarily targets, which is perhaps one reason why Mark Zuckerberg has given regulation the thumbs up.

The proposed regime initially appeared to extend to news websites, which have user-generated content in the form of comments sections below articles. Within days, however, Culture Secretary Jeremy Wright announced that “journalistic or editorial content would not be affected.” This statement raises more questions than it answers. What counts as “journalistic or editorial content”? Does it include online news sites such as Breitbart? What about personal blogs? Would only publications with a print edition be exempt? What happens when newspaper articles are shared on social media? If Twitter or Facebook flags them as containing “harmful” content, will they be removed or hidden by search engines? And what will the removal of content from websites mean for journalists researching articles? None of these questions has been answered.

What “Harms”?

The range of “harms” this new regulator is expected to eliminate is extraordinarily large. Companies will be expected not only to deal with “illegal” material, such as terrorist activity, child sexual exploitation, incitement to violence, and hate crimes, but also to prohibit “unacceptable content,” which the white paper defines as “harms with a less clear legal definition.” As examples of these “harms,” it gives “trolling,” “cyberbullying,” and “disinformation”: vague concepts that aren’t defined in English law and would require subjective boundary-setting. After all, one person’s fake news is another’s fact, and one person’s trolling is another’s biting political commentary. Will it still be possible to criticize politicians in a forthright manner, or will this be “trolling”? Is a trenchant attack on Muslim immigration permissible commentary or “cyberbullying”? Is a meme making fun of Hillary Clinton “disinformation,” or is it content reflecting an honestly held political viewpoint shared by a large segment of the American public?

The overreach is breathtaking. The U.K. Government is explicitly seeking to outlaw swaths of currently legal speech online. This will make the internet a much less free place to speak than Speakers’ Corner in Hyde Park, the place that is supposed to represent Britain’s commitment to free speech. For example, when discussing what constitutes a “hate crime,” the white paper states that the Government expects the regulator to go well beyond the forms of speech prohibited by English law and to prohibit legal speech as well. The regulator will be expected to provide

Guidance to companies to outline what activity and material constitutes hateful content, including that which is a hate crime, or where not necessarily illegal, content that may directly or indirectly cause harm to other users—for example, in some cases of bullying, or offensive material.

This invites the question: offensive to whom, how, and when? Offense, by its very nature, is in the ear of the listener; it is taken, not given. That is a very low bar for censoring speech, made even lower by the qualifier that content “may directly or indirectly” cause harm, which covers more or less anything. Such a standard would clearly be unconstitutional in the U.S.

It’s not as if the U.K. authorities have a good track record when it comes to tolerating “offensive” speech. There have been reports of police arresting up to nine people a day for offensive messages online. Earlier this year, police threatened to arrest a Catholic journalist for using the wrong gender pronoun on Twitter, and the authorities in Scotland successfully brought a case against a YouTuber for teaching a pug to do a Nazi salute. Under the proposed new regime, internet censorship would get worse. The combination of an extremely broad and unclear definition of “harm” with the threat of swingeing fines and criminal prosecution will lead online companies to err on the side of extreme caution and remove reams of content.