The Case for Moral Doubt

I don’t know if there is any truth in morality that is comparable to other truths. But I do know that if moral truth exists, establishing it at the most fundamental level is hard to do.

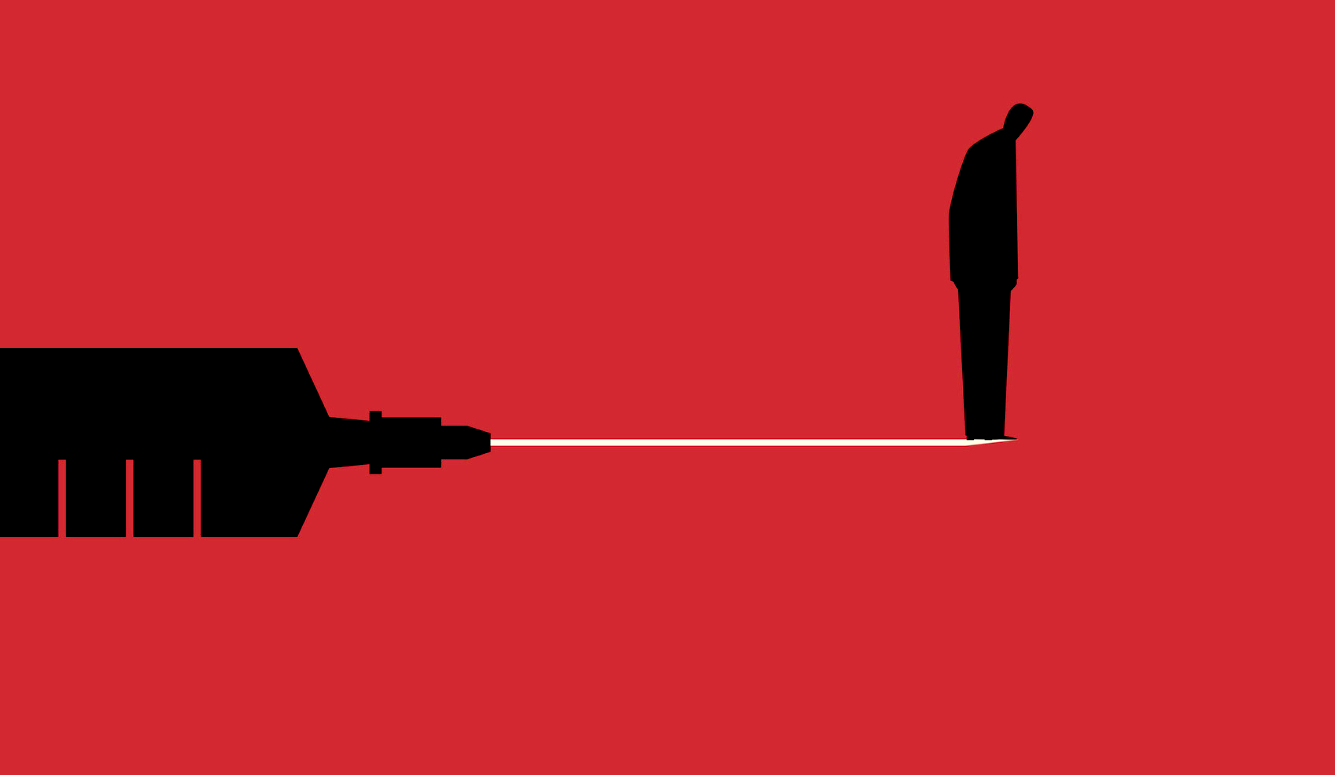

Especially in the context of passionate moral disagreement, it’s difficult to tell whose basic moral values are the right ones. It’s an even greater challenge to verify that you yourself are the more virtuous. When you find yourself in a moral stalemate, where appeals to rationality and empirical reality have been exhausted, you have nothing left to stand on but your deeply ingrained values and a profound sense of your own righteousness.

You have a few options at this point. You can defiantly insist that you’re the one with the truth – that you’re right and the other person is stupid, morally perverse, or even evil. Or you can retreat to some form of nihilism or relativism because the apparent insolubility of the conflict must mean moral truth can’t exist. This wouldn’t be psychologically satisfying, though. If your moral conviction is strong enough to lead you into an impassioned conflict, you probably wouldn’t be so willing to send it off into the ether. Why would you be comfortable slipping from intense moral certainty to meta-level amorality?

You’d be better off acknowledging the impasse for what it is – a conflict over competing values that you’re not likely to settle. You can agree to disagree. No animosity. No judgment. You both have dug your heels in, but you’re no longer trying to drive each other back. You can’t convince him that you’re right, and he can’t convince you, but you can at least be cordial.

But I think there is an even better approach. You can loosen your heels’ grip in the dirt and allow yourself to be pushed back. Doubt yourself, at least a little. Take a lesson from the physicist Richard Feynman and embrace uncertainty:

“You see, one thing is, I can live with doubt, and uncertainty, and not knowing. I think it’s much more interesting to live not knowing than to have answers which might be wrong. I have approximate answers and possible beliefs and different degrees of certainty about different things. But I’m not absolutely sure of anything, and there are many things I don’t know anything about, such as whether it means anything to ask why we’re here, and what the question might mean. I might think about it a little bit; if I can’t figure it out, then I go onto something else. But I don’t have to know an answer. I don’t feel frightened by not knowing things, by being lost in the mysterious universe without having any purpose, which is the way it really is, as far as I can tell – possibly. It doesn’t frighten me.”

If Feynman can be so open to doubt about empirical matters, then why is it so hard to doubt our moral beliefs? Or, to put it another way, why does uncertainty about how the world is come easier than uncertainty about how the world, from an objective stance, ought to be?

Sure, the nature of moral belief is such that its contents seem self-evident to the believer. But when you think about why you hold certain beliefs, and when you consider what it would take to prove them objectively correct, they don’t seem so obvious.

Morality is complex and moral truth is elusive, so our moral beliefs are, to use Feynman’s phrase, just the “approximate answers” to moral questions. We hold them with varying degrees of certainty. We’re ambivalent about some, pretty sure about others, and very confident about still others. About none of them are we absolutely certain – or at least we shouldn’t be.

While I’m trying only to persuade you to be more skeptical about your own moral beliefs, you might be tempted by the more global forms of moral skepticism that deny the existence of moral truth or the possibility of moral knowledge. Fair enough, but what I’ve written so far isn’t enough to validate such a stance. The inability to reach moral agreement doesn’t imply that there is no moral truth. Scientists disagree about many things, but they don’t throw their hands up and infer that no one is correct, that there is no truth. Rather, they’re confident truth exists. And they leverage their localized skepticism – their doubt about their own beliefs and those of others – to get closer to it.

The moral sphere is different to the scientific sphere, and doubting one’s moral beliefs isn’t necessarily valuable because it eventually leads one closer to the truth – at least to the extent that truth is construed as being in accordance with facts. This type of truth is, in principle, more easily discoverable in science than it is in morality. But there is a more basic reason that grounds the importance of doubt in both the moral and scientific spheres: it fosters an openness to alternatives.

Embrace this notion. It doesn’t have to mean acknowledging that you don’t know and then moving on to something else. And it doesn’t mean abandoning your moral convictions outright or being paralyzed by self-doubt. It means abandoning your absolute certainty and treating your convictions as tentative. It means making your case but recognizing that you have no greater access to the ultimate moral truth than anyone else. Be open to the possibility that you’re wrong and that your beliefs are the ones requiring modification. Allow yourself to feel the intuitive pull of the competing moral value.

There’s a view out there that clinging unwaveringly to one’s moral values is courageous and, therefore, virtuous. There’s some truth to this idea. Sticking up for what one believes in is usually worthy of respect. But moral rigidity – the refusal to budge at all – is where public moral discourse breaks down. It is the root of the fragmentation and polarization that define contemporary public life.

Some people have been looking for ways to improve the ecosystem of moral discourse. In a study, Robb Willer and Matthew Feinberg¹ demonstrated that when you reframe arguments to appeal to political opponents’ own moral values, you’re more likely to persuade them than if you make arguments based on your own values. “Even if the arguments that you wind up making aren’t those that you would find most appealing,” they wrote in The New York Times, “you will have dignified the morality of your political rivals with your attention, which, if you think about it, is the least that we owe our fellow citizens.”