
Inside the Superforecasters


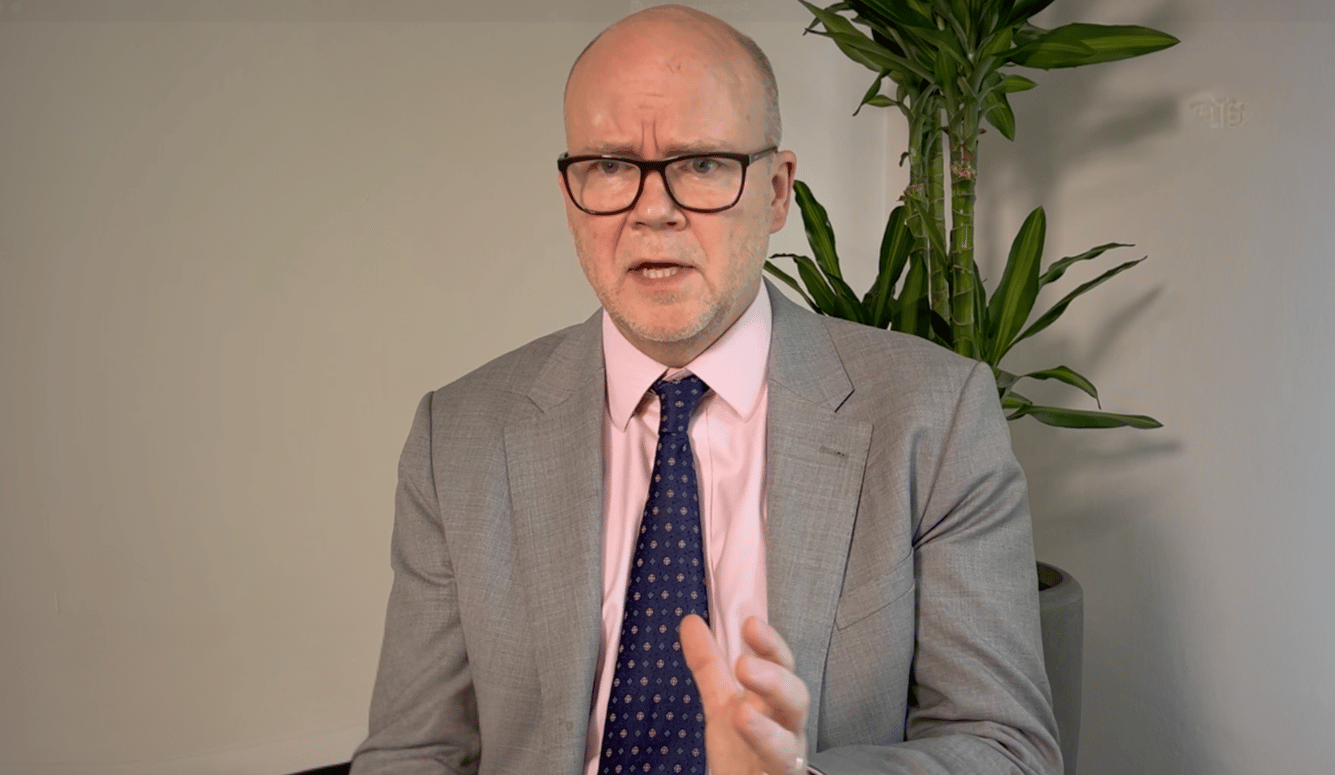

How did you find out about superforecasting?

Four years ago an email entitled “Philip Tetlock requests your help” appeared in my inbox: “If you’re willing to experiment with ways to improve your forecasting ability and if being part of cutting edge scientific research appeals to you, then we want you to help us generate forecasts of importance to national security.”

Who is Philip Tetlock?

Phil Tetlock is a political scientist and psychologist. He is the author of a major 20-year study of geopolitical forecasting, published in 2005 as ‘Expert Political Judgment’.

He set out to find a definitive answer to whether pundits and experts, despite their acknowledged command of their subjects, were any good at predicting how their specialist areas would develop.

What happened?

Tetlock recruited 284 pundits and had them make 82,000 individual predictions from 1983 to 2003. For example, pundits were asked ‘will economic growth stay on trend, increase or go into recession?’, to which they had to answer ‘increase’, ‘decrease’ or ‘status quo’. This simple three-option format made comparing their performances rather easy.

How did the pundits perform?

Stripped of their weasel words and Barnum-statement equivocation, they performed very poorly. On average, they were no better than ‘dart-throwing monkeys’.

It wasn’t all bad for them, however: some did manage to beat the average. But the pundits who had received the most media and government attention over their careers actually performed the worst.

They turned out to be predictably, humanly fallible and poorly incentivised. They fell victim to the whims of producers demanding bold, confident and precise scenarios of the future, and were beholden to their own human irrationality (which, of course, Tversky and Kahneman were investigating at the same time).

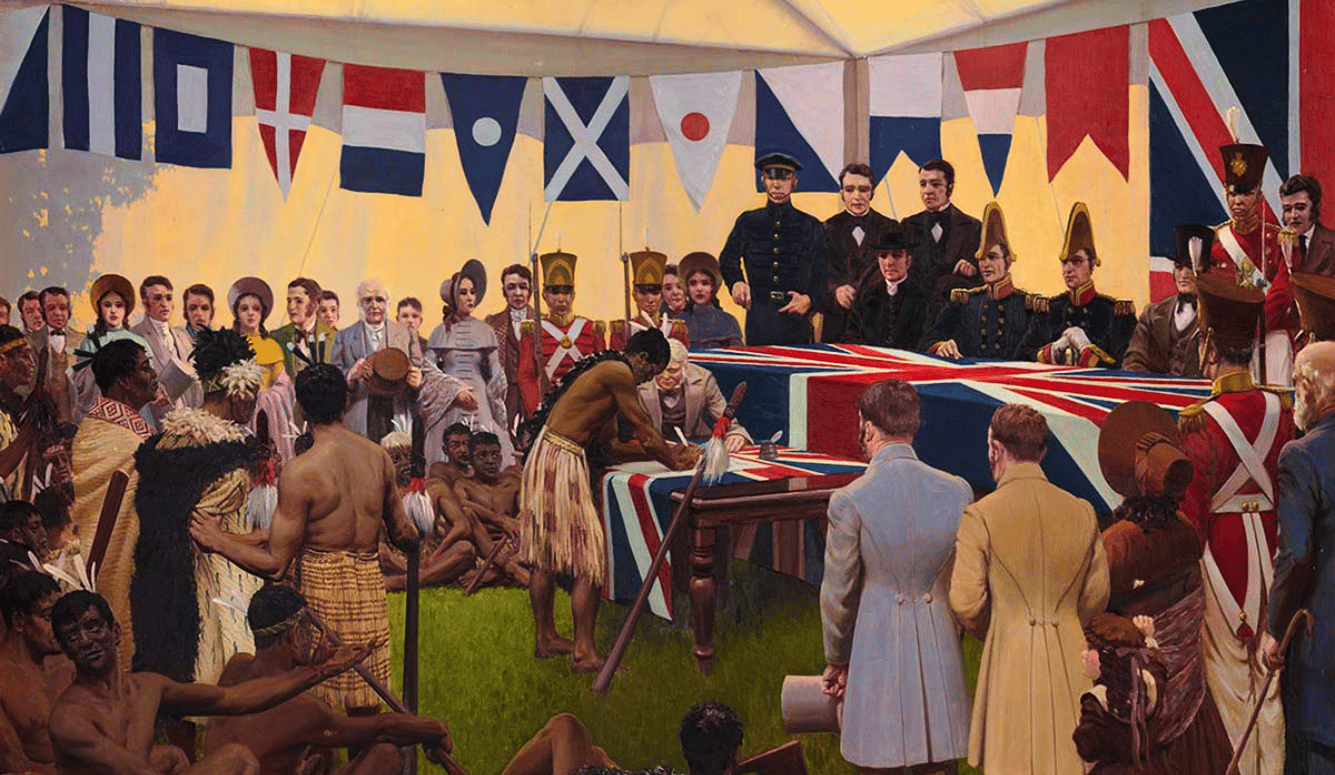

What are some tests of forecasting ability?

One is the conjunction fallacy, given a geopolitical twist. (The conjunction fallacy is best known from the ‘Linda problem’, in which people judge Linda more likely to be a feminist bank teller than simply a bank teller.) Tversky and Kahneman instead asked a 1982 sample of political scientists to estimate either:

- the chance of ‘a Russian invasion of Poland and a complete suspension of diplomatic relations between the US and the USSR, sometime in 1983’, or

- the chance of ‘a complete suspension of diplomatic relations between the US and the USSR, sometime in 1983, for any reason’.

The average estimate for the Poland-invasion scenario was 4%. Yet the average for a suspension of relations for any reason, which includes an invasion of Poland as one possible cause and is therefore necessarily at least as likely, was only 1%.

In sum, a rather poor showing.
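The rule the respondents broke is that a conjunction can never be more probable than either of its parts: P(A and B) ≤ P(B). A minimal sketch of a coherence check on a pair of estimates; the function name is hypothetical and nothing here comes from Tversky and Kahneman’s study itself:

```python
def violates_conjunction_rule(p_conjunction: float, p_single: float) -> bool:
    """Return True if 'A and B' was judged more likely than 'B' alone.

    A coherent forecaster must satisfy P(A and B) <= P(B), because every
    world in which A and B both happen is also a world in which B happens.
    """
    for p in (p_conjunction, p_single):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return p_conjunction > p_single


# Average estimates quoted above: 4% for invasion plus suspension,
# 1% for suspension for any reason.
print(violates_conjunction_rule(0.04, 0.01))  # True: the conjunction was rated higher
```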

How does one improve?

Let’s say we wanted to get the best possible forecasts instead of demonstrating how bad the field currently is. Taking the lessons from Expert Political Judgment, Tetlock identified three pillars of potential progress in futurology:

- Incentivise accuracy

- Help people catch their biases

- Identify forecasters’ track records and weight their forecasts accordingly

These ideas did not find a ready audience until 2006, a year after the publication of Expert Political Judgment, when the US government shook up its intelligence services.

Along with the creation of a Director of National Intelligence came a new intelligence research agency, IARPA (Intelligence Advanced Research Projects Activity), known colloquially as ‘DARPA for spies’. It was tasked with conducting ‘high-risk/high-payoff research programs that have the potential to provide our nation with an overwhelming intelligence advantage over future adversaries’.

Why is good forecasting so important?

The Intelligence Community faced criticism for the false negative of 9/11 and the false positive of Iraq’s missing WMDs. The Intelligence Community needed (and still needs) to improve.

So how did you – a university student – get involved?

IARPA’s ‘Office of Anticipating Surprise’ (led by the superbly titled Director of Anticipating Surprise) set up the largest-ever tournament for forecasters to see what could be improved. Several university teams entered, including Tetlock’s group at the University of Pennsylvania and Berkeley. They set about recruiting forecasters by reaching out to blogs and personal networks, and by asking friends and colleagues to pass on requests for volunteers.

Which is how I came by that email.

How did the forecasters come to be known as super-forecasters?

For forecasting around 100 questions a year, we were given $250 in Amazon vouchers for our inconvenience, plus the promise of vindication should our forecasts come true. Given training in rationality and geopolitics, and incentivised only by their Brier scores (a simple measure of forecasting accuracy), thousands of ordinary people submitted their best estimates on Russian troop movements, North Korean missile tests and the spread of global diseases.

The results were very good. Although the only requirements for entry were an undergraduate degree and a willingness to give it a go, the weighted average forecasts of the main sample beat the four-year programme’s final projected goals in year one. The project has also produced novel findings for psychological science, the most important being that some people, independent of their knowledge of a particular region or subfield, are simply very good at forecasting.
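The Brier score is the squared difference between the probabilities a forecaster assigned and what actually happened (1 for the outcome that occurred, 0 for the rest), averaged over questions, so lower is better. The sketch below assumes the original multi-category form, which runs from 0 (perfect) to 2 (certain and wrong) and fits three-way questions like ‘increase, decrease or status quo’; the function names and numbers are hypothetical, and the tournament’s exact scoring rules may have differed in detail.

```python
from typing import Sequence


def brier_score(probs: Sequence[float], outcome: int) -> float:
    """Multi-category Brier score for a single question.

    probs   -- probability assigned to each possible answer (must sum to 1)
    outcome -- index of the answer that actually happened
    """
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum((p - (1.0 if i == outcome else 0.0)) ** 2 for i, p in enumerate(probs))


def mean_brier(forecasts: Sequence[tuple[Sequence[float], int]]) -> float:
    """Average Brier score across many questions (lower is better)."""
    return sum(brier_score(p, o) for p, o in forecasts) / len(forecasts)


# Hypothetical three-way question: increase / decrease / status quo.
# The forecaster said 60% / 10% / 30%, and growth increased (index 0).
print(brier_score([0.6, 0.1, 0.3], 0))  # about 0.26
```

For comparison, a ‘dart-throwing’ forecaster who always spreads 1/3 across the three answers scores about 0.67 on every such question, whatever happens.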
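The article does not say how the project weighted its forecasts, so the following is only a minimal sketch of the general idea of weighting forecasters by track record. It assumes each forecaster’s weight is the inverse of their past mean Brier score, so better (lower) scores count for more; the weighting rule, the `floor` parameter and the numbers are all hypothetical, and the Good Judgment Project’s actual aggregation method is not reproduced here.

```python
def weighted_forecast(
    forecasts: list[float], past_brier: list[float], floor: float = 0.01
) -> float:
    """Combine probability forecasts for one yes/no question.

    forecasts  -- each forecaster's probability that the event happens
    past_brier -- each forecaster's mean Brier score on earlier questions
    floor      -- hypothetical minimum score, avoiding division by zero
                  for a forecaster with a perfect record
    """
    if not forecasts or len(forecasts) != len(past_brier):
        raise ValueError("need one past score per forecast")
    # Lower Brier score means a better record, hence a larger weight.
    weights = [1.0 / max(score, floor) for score in past_brier]
    return sum(w * p for w, p in zip(weights, forecasts)) / sum(weights)


# A strong forecaster (past score 0.1) says 80%; a weak one (0.4) says 20%.
print(weighted_forecast([0.8, 0.2], [0.1, 0.4]))  # about 0.68, versus 0.5 unweighted
```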

The idea that forecasting is a skill independent of domain-specific expertise seems rather novel. What impact do you think it will have?

The idea that forecasting is an independent skill is absolutely revolutionary for organisations involved in gathering or disseminating intelligence. Nearly all of them recruit for regional expertise and use subject-matter knowledge as a proxy for predictive power. But the team that Tetlock and his wife, fellow psychology professor Barbara Mellers, created and named ‘the Good Judgment Project’ discovered that this isn’t nearly as important as one might think. An amateur with a good forecasting record will generally outperform an expert without one.

For example, putting the top 2% of performers, the ‘Superforecasters’, into teams widened their lead over the already high-performing sample to 65%. These teams also beat the algorithms of competitor teams by 35 to 60%, the open prediction markets by 20 to 35%, and (according to unconfirmed leaks from the Intelligence Community) the forecasts of professional government intelligence analysts by 30%. Some people are just very good at forecasting indeed.

What makes someone a superforecaster?

Part of it is personality, part of it the environment and incentives in which the forecasts take place, and there’s a role for training too.

Compared with the general population of forecasting volunteers, superforecasters were more actively open-minded (actively trying to disprove their own hypotheses), with high fluid intelligence, a high need for cognition and a comfort with numbers.

But even the best forecasters need the right incentives: people who are rewarded for being ‘yes men’ will never give you their best work, whatever their potential.

Can superforecasting be trained?

Given three hours of rationality and source-discovery training, nearly everyone (even relatively poor performers) got better. Being encouraged to take the ‘outside view’ of a situation, looking for comparisons and base rates, and to apply a healthy dose of Bayesian thinking works wonders for one’s Brier score.
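Taking the outside view means anchoring on a base rate (how often events of this kind happened in comparable cases) before adjusting for the specifics. In Bayesian terms the base rate is the prior, and each piece of evidence shifts it by a likelihood ratio: how much more likely that evidence is if the event is coming than if it is not. A minimal sketch in odds form; the base rate and likelihood ratios below are invented for illustration.

```python
def bayes_update(prior: float, likelihood_ratio: float) -> float:
    """Update a probability with one piece of evidence, using odds.

    prior            -- starting probability, e.g. a base rate (0 < prior < 1)
    likelihood_ratio -- P(evidence | event) / P(evidence | no event)
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    if likelihood_ratio <= 0.0:
        raise ValueError("likelihood ratio must be positive")
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# Outside view: suppose 10% of comparable situations led to the event.
estimate = 0.10
# Inside view: hypothetical evidence three times likelier if the event is coming...
estimate = bayes_update(estimate, 3.0)  # about 0.25
# ...then weaker evidence pointing the other way.
estimate = bayes_update(estimate, 0.5)  # about 0.14
print(round(estimate, 2))  # 0.14
```

Starting from the base rate keeps a vivid inside-view story from dragging the estimate far from what usually happens; the evidence then moves it in measured steps.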

What has your experience been like?

My personal experience of fellow superforecasters was one of similarity. Despite our great variety of national and educational backgrounds, the first time we all gathered in one place, at Berkeley in California, the chap sitting next to me (now a colleague and pal) whispered that he ‘felt like that scene at the end of E.T. where he returns to his home planet and meets all the other E.T.s’. The personality factors certainly seemed dominant in the room. It’s amazing where an email can take you.

Is the Good Judgment Project still recruiting?

Yes. The search for new superforecasters is ongoing; if you’d like to try your hand, we are accepting all comers at GJOpen.com.