We live in a paradoxical informational landscape. Never before have citizens had access to such an abundance of data, expert commentary, real-time analysis, and historical context. The digital age promised a “Great Clarification,” where the democratisation of information would serve as a universal solvent for ignorance. But despite this unprecedented availability, it has rarely been so difficult to form a coherent understanding of what is actually happening in the world.

The problem is not ignorance in the classical sense. The contemporary public is not uninformed; on the contrary, it is hyper-informed. People consume an endless stream of charts, “explainer” threads, and long-form analysis. But instead of clarity, the cumulative result is often a paralysing confusion paired with a growing sense of moral certainty. This is not the confusion of someone who knows they lack information; it is the confusion of someone who feels deeply informed and yet cannot explain why reality consistently fails to behave as predicted. Something essential is missing. That missing element is not data; it is discernment.

When disinformation is discussed in public discourse, it is usually framed as a problem of lies—fake news, fabricated claims, or manipulated images spread deliberately to mislead. While that phenomenon exists, it is no longer the primary threat to collective understanding. The most pervasive form of disinformation today is far subtler and more effective because it does not rely on false data. Instead, it relies on flawed reasoning methods applied to real, verifiable information.

“Soft disinformation” can be defined as the systematic transmission of non-falsifiable reasoning patterns using accurate or verifiable information. Its defining feature is not what it says but how it teaches people to think. This distinction matters because there is no such thing as analysis without bias; every interpretation of reality begins with assumptions. The issue is not the presence of bias but whether the analytical method allows those assumptions to be challenged, revised, or falsified. Soft disinformation does not prohibit dissent; it renders dissent structurally irrelevant. The most effective disinformation does not lie; it teaches people how to reason poorly while feeling intellectually responsible.

The operational structure of soft disinformation is remarkably consistent. It begins with an implicit conclusion established in advance. Data compatible with that conclusion are selected, while variables that complicate the narrative are omitted or deprioritised. The result is presented as neutral, evidence-based analysis, often draped in the visual language of authority—charts, citations, and professional jargon. Statistics may be accurate and quotations may be authentic, but the manipulation occurs entirely in the assembly. Crucially, the method does not generate hypotheses that can fail. The conclusion is insulated from refutation by design.

One of the most counterintuitive aspects of soft disinformation is that higher intelligence and education do not necessarily protect against it. In many cases, they increase vulnerability, a phenomenon often described in cognitive science as motivated reasoning or motivated numeracy. Research on it suggests that individuals with the strongest mathematical and analytical skills are often the most likely to use those skills to rationalise a preferred conclusion rather than to analyse the data at hand objectively.

Individuals trained to process complex information often rely on trusted analytical shortcuts. When those shortcuts are supplied by authoritative voices using familiar academic conventions, scrutiny decreases. Moreover, analytical sophistication can be repurposed defensively. Complex reasoning skills make it easier to rationalise a preferred conclusion after the fact, especially when the surrounding informational environment offers ample material for selective justification. High-IQ individuals are not necessarily more objective; they are simply better at constructing elaborate justifications for their existing biases. Soft disinformation does not target the ignorant; it targets the motivated.

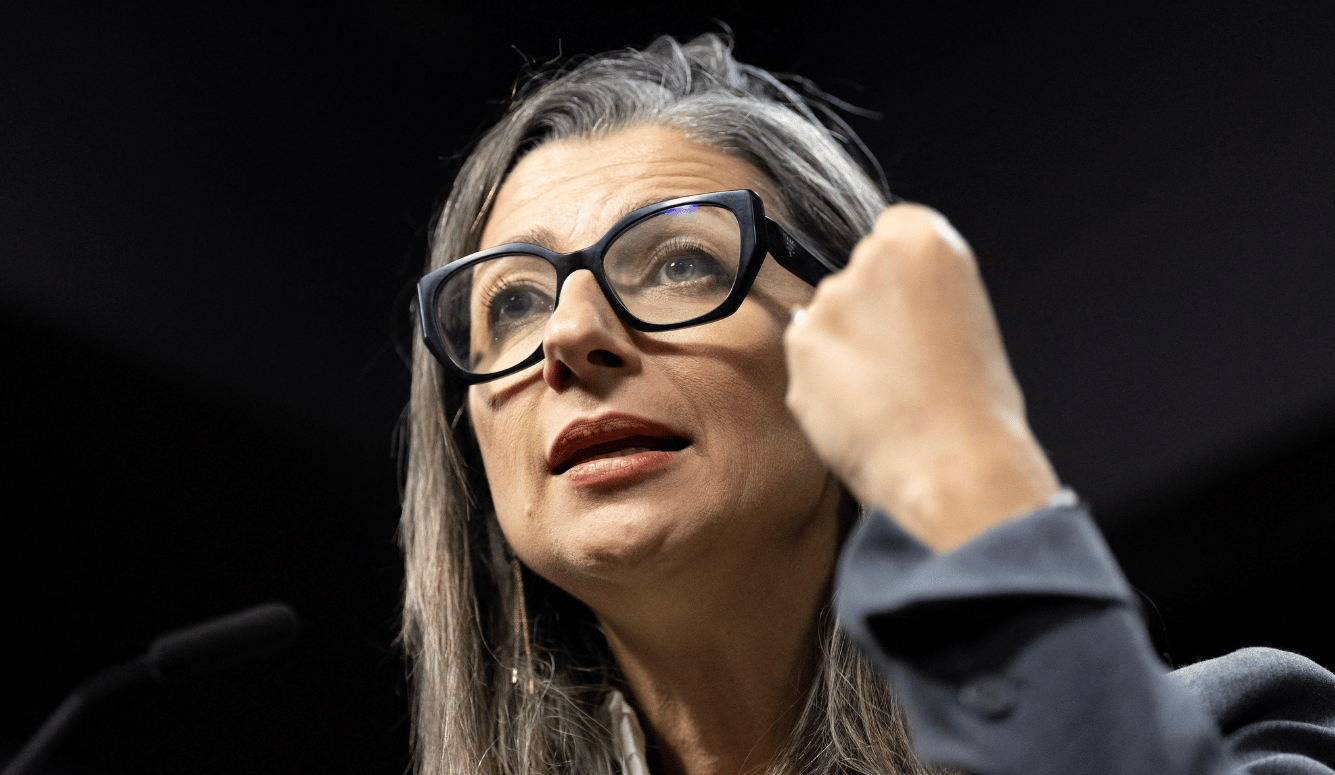

Within this ecosystem, the “militant analyst” has become prominent. This is not a propagandist in the crude sense, nor necessarily an incompetent journalist. It is someone who has adapted their analytical behaviour to an environment that rewards certainty and penalises doubt. The militant analyst does not seek to disconfirm their framework; they seek to optimise it rhetorically. Data become ammunition rather than instruments of discovery.