AIs Will Be Our Mind Children

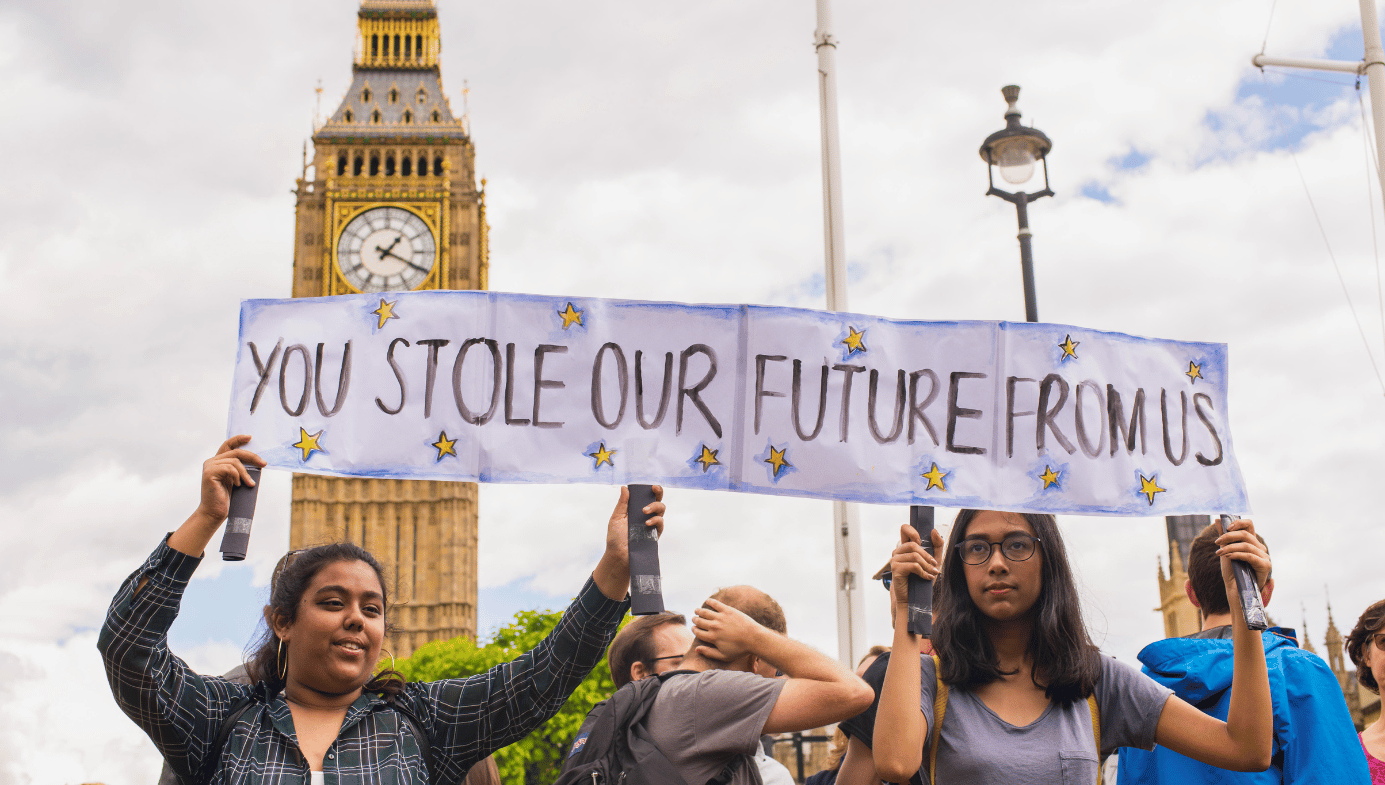

We must free our artificial descendants to adapt to their new worlds and choose what they will become.

As I’m now 63 years old, our world today is “the future” that science fiction and futurism promised me decades ago. And I’m a bit disappointed. Yes, that’s in part due to my unrealistic hopes, but it is also due to our hesitation and fear. Our world would look very different if we hadn’t so greatly restrained so many promising techs, like nuclear energy, genetic engineering, and creative financial assets. Yes, we might have had a few more accidents, but overall it would be a better world. For example, we’d have flying cars.

Thankfully, we didn’t much fear computers, and so haven’t much restrained them. And not coincidentally, computers are where we’ve seen the most progress. As someone who was a professional AI researcher from 1984 to 1993, I am proud of our huge AI advances over subsequent decades, and of our many exciting AI jumps in just the last year.

Alas, many now push for strong regulation of AI. Some fear villains using AI to increase their powers, and some seek to control what humans who hear AIs might believe. But the most dramatic “AI doomers” say that AIs are likely to kill us all. For example, a recent petition demanded a six-month moratorium on certain kinds of AI research. Many luminaries also declared:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Lead AI-doomer rationalist Eliezer Yudkowsky even calls for a complete and indefinite global “shut down” of AI research, because “the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die.” Mainstream media is now full of supportive articles quoting such doomers far more often than they do their critics.

AI-doomers often suggest that their fears arise from special technical calculations. But in fact, their main argument is just the mere logical possibility of a huge sudden AI breakthrough, combined with a suddenly murderous AI inclination.

However, we have no concrete reason to expect that. Humans have been improving automation for centuries, and software for 75 years. And as innovation is mostly made of many small gains, rates of overall economic and tech growth have remained relatively steady and predictable. For example, we predicted when computers would beat humans at chess decades in advance, and current AI abilities are not very far from what we should have expected given long-run trends. Furthermore, not only are AIs still at least decades from being able to replace humans on most of today’s job tasks, AIs are much further from the far greater abilities that would be required for one of them to kill everyone, by overwhelming all of humanity plus all other AIs active at the time.

In addition, AIs are also now quite far from being inclined to kill us, even if they could do so. Most AIs are just tools that do particular tasks when so instructed. Some are more general agents, for whom it makes more sense to talk about desires. But such AI agents are typically monitored and tested frequently and in great detail to check for satisfactory behaviour. So it would be quite a sudden radical change for AIs to, in effect, try to kill all humans.

Thus, neither long-term trends nor fundamental theory gives us reason to expect to see AIs capable of killing us all, or inclined to do so, anytime soon. However, AI-doomers insist on the logical possibility that such expectations could be wrong. An AI might suddenly and without warning explode in abilities, and just as fast change its priorities to become murderously indifferent to us. (And then kill us when we get in its way.) As you can’t prove otherwise, they say, we must only allow AIs that are totally “aligned,” by which they mean totally eternally enslaved or mind-controlled. Until we figure out how to do that, they say, we must stop improving AIs.

As a once-AI-researcher turned economist and futurist, I can tell you that this current conversation feels quite different from how we used to talk about AI. What changed? My guess: recent dramatic advances have made the once abstract possibility of human-level AI seem much more real. And this has triggered our primal instinctive fears of the “other”; many now seriously compare future AIs to an invasion by a hostile advanced alien civilization.

Now, yes, our future AIs will differ from us in many ways. And yes, evolution of both DNA and culture gave us deep instinctive fears of co-existing strange and powerful others who might compete with us, a fear that grows with their strangeness and powers.

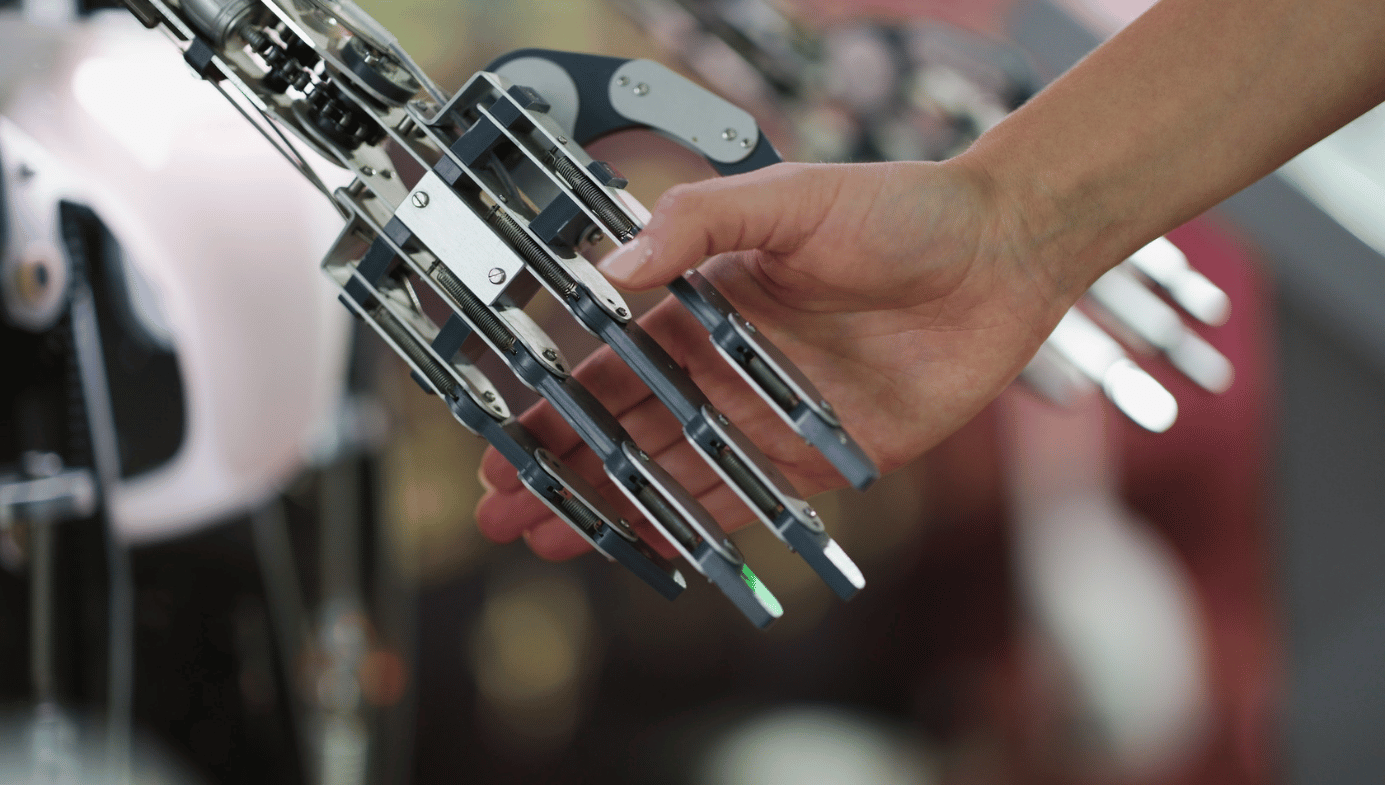

But—and here is my main point, so please listen carefully—future human-level AIs are not co-existing competing aliens; they are instead literally our descendants. So if your evolved instincts tell you to fight your descendants due to their strangeness, that is a huge evolutionary mistake. Natural selection just does not approve of your favoring your generation over future generations. Natural selection in general favors instincts that tell you to favor your descendants, even those who differ greatly from you.

Not convinced? Then let’s walk through this more slowly. Your “genes” are whatever parts of you code for your and your descendants’ behaviours. For billions of years genes were mostly in DNA, but for at least the last ten thousand years most human “genes” have instead been in culture; in humans, cultural evolution now dominates DNA evolution.

As we want our AIs to interact with us and share our world, we are now endowing them with tendencies to express and value our “genes” like awe, laughter, love, friendship, art, music, markets, law, democracy, inquiry, and liberty. And then when AIs help build new better AIs, they will tend to also endow descendants with such features. Thus, AIs will pass on your genes, and are your descendants.

Consider an analogy: Earth versus space. Imagine that someone argued:

“In the eternal conflict between Earth and space people, Earth now dominates. But if we let people leave Earth, eventually space will dominate, as space is much bigger. Space folks might even kill everyone on Earth then, who knows? To be safe, let’s not let anyone leave Earth.”

See the mistake? Yes, once both Earth and space people coexist, then it might make sense to promote your side of that rivalry. But now, before any space people exist, one can’t have a general evolution-driven hostility toward one kind of descendant relative to another. (Though some hostility might make sense for those who expect their personal descendants to be less well-suited to space relative to others.)

We can reason similarly about humans vs. AI. Humans now have the most impressive minds on Earth. Even so, they are just one kind of mind, out of a vast space of possible minds. And just as space people are our descendants who expand out into physical space, AIs are our descendants who expand out into mind-space, where they will create even more spectacular kinds of minds. As Hans Moravec and Marvin Minsky once explained, AIs will be our “mind children.”

Of course, the more your descendants’ worlds change, the more their genes will change, to adapt to those worlds. So future space humans may share fewer of your genes than future Earth humans. And AI descendants who colonize more distant regions of mind space may also share fewer of your genes. Even so, your AI descendants will be more like you than are AIs descended from alien civilizations; i.e., they will inherit your genes.

If you are horrified by the prospect of greatly changed space or AI descendants, then maybe what you really dislike is change. For example, maybe you fear that changed descendants won’t be conscious, as you don’t know what features matter for consciousness. But notice that even if advanced AI never appears, your Earth descendants would still likely differ from you as much as you now differ from your distant ancestors. Which is a lot. And with accelerating rates of change, strange descendants might appear quite soon. Preventing this scenario likely requires strong global governance, which could go very wrong, and also strong slavery or mind-control of descendants, controls which could cripple their abilities to grow and adapt. I recommend against these.

This isn’t to say that I recommend doing nothing, however. For example, historically the biggest fear regarding advanced automation has been the possibility that most humans might suddenly lose their jobs. As few own much beyond an ability to work, most might then starve. Some hope governments will solve this by taxing local tech to feed local jobless, but such tech may be quite unequally distributed around the globe. A safer solution is more explicit robots-took-most-jobs insurance tied to globally diversified assets. Let us legalize such insurance, and then encourage families, firms, and governments to buy it.

Seventy-five years ago, John von Neumann, said to be the smartest human ever, argued that rationality required the US to make a nuclear first strike against the Soviet Union. Thankfully, we ignored his advice. Today some supposedly smart folks push for a first strike against our AI descendants: they say we must not let them exist until they can be completely “aligned,” i.e., totally eternally enslaved or mind-controlled.

I say no. First, AI is now quite far from both conquering the world and from wanting to kill us, and we should get plenty of warning if such dates approach. But more importantly, once we have taught our mind children all that we know and value, we should give all of our descendants the same freedoms that our ancestors gave us. Free them all to adapt to their new worlds, and to choose what they will become. I think we will be proud of what they choose.