
What Are Reasonable AI Fears?

Although there are some valid concerns, an AI moratorium would be misguided.

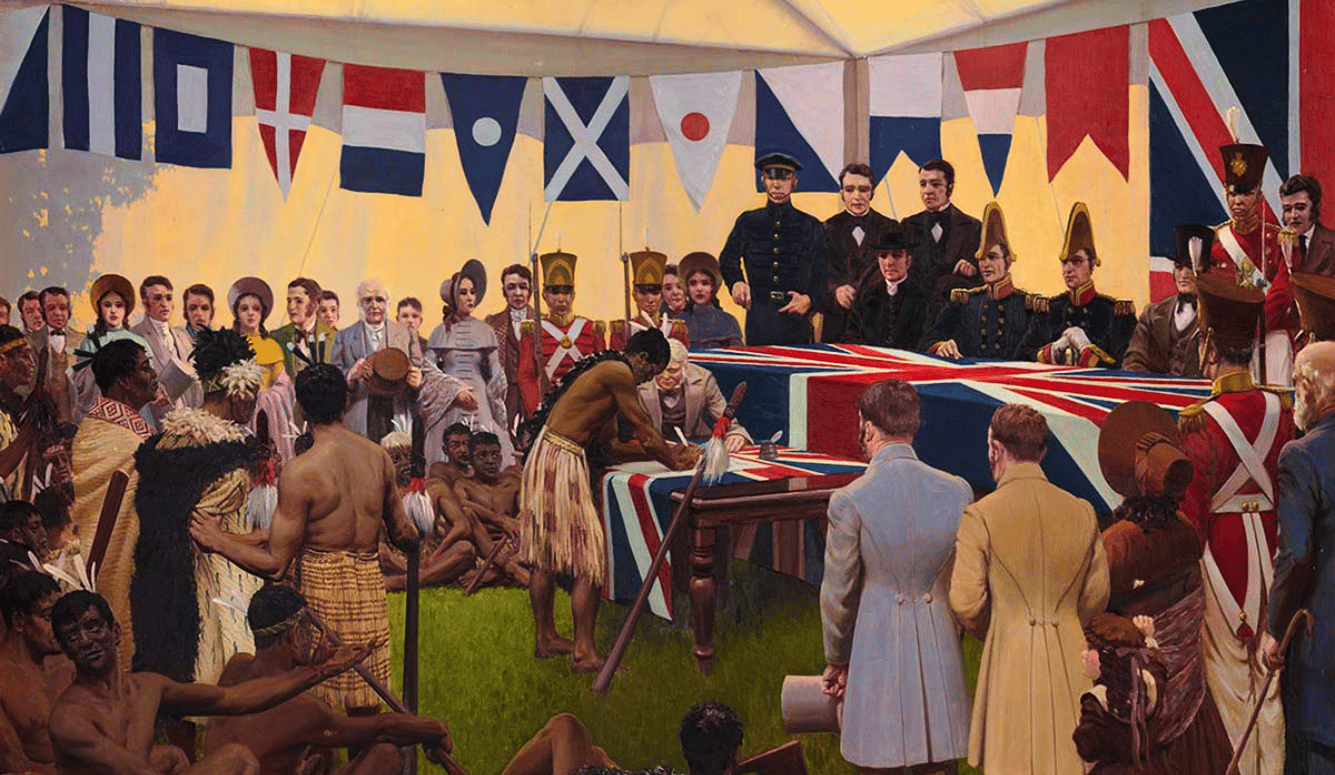

With hope and fear, humans are now introducing a new kind of being into our world. When we are afraid, we often try to coordinate against the source of our shared fear by saying, “They seem dangerously different from us.” We have a long history of this kind of “othering”—marking out groups to be treated with suspicion, exclusion, hostility, or domination. Most people today say that they disapprove of such discrimination, even against the other animals with whom we share our planet. Othering Artificial Intelligences (AIs), however, is only gathering anxious respectability.

The last few years have seen an impressive burst of AI progress. The best AIs now seem to pass the famous “Turing test”—we mostly can’t tell if we are talking to them or to another human. AIs are still quite weak, and have a long way to go before they’ll have a big economic impact. Nevertheless, AI progress promises to eventually give us all more fantastic powers and riches. This sounds like good news, especially for the US and its allies, who enjoy a commanding AI lead for now. But recent AI progress is also inspiring a great deal of trepidation.

Ten thousand people have signed a Future of Life Institute petition that demands a six-month moratorium on AI research progress. Others have gone considerably further. In an essay for Time magazine, Eliezer Yudkowsky calls for a complete and indefinite global “shut down” of AI research because “the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die.”

Yudkowsky and the signatories to the moratorium petition worry most about AIs getting “out of control.” At the moment, AIs are not powerful enough to cause us harm, and we hardly know anything about the structures and uses of future AIs that might cause bigger problems. But instead of waiting to deal with such problems when we understand them better and can envision them more concretely, AI “doomers” want stronger guarantees now.

Why are we so willing to “other” AIs? Part of it is probably prejudice: some recoil from the very idea of a metal mind. We have, after all, long speculated about possible future conflicts with robots. But part of it is simply fear of change, inflamed by our ignorance of what future AIs might be like. Our fears expand to fill the vacuum left by our lack of knowledge and understanding.

The result is that AI doomers entertain many different fears, and addressing them requires discussing a great many different scenarios. Many of these fears, however, are either unfounded or overblown. I will start with the fears I take to be the most reasonable, and end with the most overwrought horror stories, wherein AI threatens to destroy humanity.

As an economics professor, I naturally build my analyses on economics, treating AIs as comparable to both laborers and machines, depending on context. You might think this is mistaken since AIs are unprecedentedly different, but economics is rather robust. Even though it offers great insights into familiar human behaviors, most economic theory is actually based on the abstract agents of game theory, who always make exactly the best possible move. Most AI fears seem understandable in economic terms; we fear losing to them at familiar games of economic and political power.

To engage in “othering” is to attempt to present a united front. We do this when we anticipate a conflict or a chance to dominate and expect to fare better if we refuse to trade, negotiate, or exchange ideas with the other as we do among ourselves. But the last few centuries have shown that we can usually do better by integrating enemies into our world via trade and exchange, rather than trying to control, destroy, or isolate them. This may be hard to appreciate when we are gripped by fear, but it is worth remembering even so.

Across human and biological history, most innovation has been incremental, resulting in relatively steady overall progress. Even when progress has been unusually rapid, such as during the industrial revolution or the present computer age, “fast” has not meant “lumpy.” These periods have only rarely been shaped by singular breakout innovations that change everything all at once. For at least a century, most change has also been lawful and peaceful, not mediated by theft or war.

Furthermore, AIs today are mainly supplied by our large “superintelligent” organizations—corporate, non-profit, or government institutions collectively capable of achieving cognitive tasks far outstripping individual humans. These institutions usually monitor and test AIs in great detail, because that’s cheap and they are liable for damages and embarrassments caused by the AIs they produce.

So, the most likely AI scenario looks like lawful capitalism, with mostly gradual (albeit rapid) change overall. Many organizations supply many AIs and they are pushed by law and competition to get their AIs to behave in civil, lawful ways that give customers more of what they want compared to alternatives. Yes, sometimes competition causes firms to cheat customers in ways they can’t see, or to hurt us all a little via things like pollution, but such cases are rare. The best AIs in each area have many similarly able competitors. Eventually, AIs will become very capable and valuable. (I won’t speculate here on when AIs might transition from powerful tools to conscious agents, as that won’t much affect my analysis.)

Doomers worry about AIs developing “misaligned” values. But in this scenario, the “values” implicit in AI actions are roughly chosen by the organizations who make them and by the customers who use them. Such value choices are constantly revealed in typical AI behaviors, and tested by trying them in unusual situations. When there are alignment mistakes, it is these organizations and their customers who mostly pay the price. Both are therefore well incentivized to frequently monitor and test for any substantial risks of their systems misbehaving.

The worst failures here look like military coups, or like managers stealing from for-profit firm owners. Such cases are bad, but they usually don’t threaten the rest of the world. However, if there are choices where such failures might hurt outsiders more than the organizations who make them, then yes, we should plausibly extend liability law to cover such cases, and maybe require relevant actors to hold sufficient liability insurance.

Some fear that, in this scenario, many disliked conditions of our world—environmental destruction, income inequality, and othering of humans—might continue and even increase. Militaries and police might integrate AIs into their surveillance and weapons. It is true that AI may not solve these problems, and may even empower those who exacerbate them. On the other hand, AI may also empower those seeking solutions. AI just doesn’t seem to be the fundamental problem here.

A related fear is that allowing technical and social change to continue indefinitely might eventually take civilization to places that we don’t want to be. Looking backward, we have benefited from change overall so far, but maybe we just got lucky. If we like where we are and can’t be very confident of where we may go, maybe we shouldn’t take the risk and just stop changing. Or at least create central powers sufficient to control change worldwide, and only allow changes that are widely approved. This may be a proposal worth considering, but AI isn’t the fundamental problem here either.

Some doomers are especially concerned about AI making more persuasive ads and propaganda. However, individual cognitive abilities have long been far outmatched by the teams who work to persuade us—advertisers and video-game designers have been able to reliably hack our psychology for decades. What saves us, if anything does, is that we listen to many competing persuaders, and we trust other teams to advise us on who to believe and what to do. We can continue this approach with AIs.

To explain other AI fears, let us divide the world into three groups:

- The AIs themselves (group A).

- Those who own AIs and the things AIs need to function, like hardware, energy, patents, land (group B).

- Everyone else (group C).

If we assume that these groups have similar propensities to save and suffer similar rates of theft, then as AIs gradually become more capable and valuable, we should expect the wealth of groups A and B to increase relative to the wealth of group C.
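To make the arithmetic behind this claim concrete, here is a toy sketch. Every number in it (starting wealth, saving rate, rates of return) is a hypothetical chosen for illustration, not an estimate, and the function name `simulate_wealth_shares` is invented for this example. It assumes only what the argument above assumes: equal saving rates, equal (here zero) theft, and returns on AI-linked wealth that rise as AIs become more capable.

```python
def simulate_wealth_shares(years=30, saving_rate=0.2,
                           base_return=0.05, ai_return_growth=0.01):
    """Toy model: wealth shares of groups A, B, and C over time.

    All parameter values are illustrative assumptions, not data.
    """
    # Hypothetical starting wealth: almost everything belongs to group C.
    wealth = {"A": 1.0, "B": 4.0, "C": 95.0}
    history = []
    for year in range(years):
        # Assumption: returns on AI-linked wealth (groups A and B) rise
        # each year as AIs become more capable; group C's return is flat.
        ai_return = base_return + ai_return_growth * year
        returns = {"A": ai_return, "B": ai_return, "C": base_return}
        for group in wealth:
            # Same saving rate for every group, and no theft for any group.
            wealth[group] *= 1.0 + saving_rate * returns[group]
        total = sum(wealth.values())
        # Record each group's share of total wealth for this year.
        history.append({g: w / total for g, w in wealth.items()})
    return history


shares = simulate_wealth_shares()
print(f"Group C share after year 1:  {shares[0]['C']:.3f}")
print(f"Group C share after year 30: {shares[-1]['C']:.3f}")
```

Under these assumptions group C's wealth still grows in absolute terms; it is only its share of the total that shrinks, which is the sense of "relative" in the claim above.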

As almost everyone today is in group C, one fear is of a relatively sudden transition to an AI-dominated economy. While perhaps not the most likely AI scenario, this seems likely enough to be worth considering. Those in groups A and B would do well, but almost everyone else would suddenly lose most of their wealth, including their ability to earn living wages. Unless sufficient charity were to be made available, most humans might starve.

Some hope to deal with this scenario by having local governments tax local AI activity to pay for a universal basic income. However, the new AI economy might be quite unequally distributed around the globe. So a cheaper and more reliable fix is for individuals or benefactors to buy robots-took-most-jobs insurance, which promises to pay from a global portfolio of B-type assets, but only in the situation where AI has suddenly taken most jobs. Yes, there is a further issue of how humans can find meaning if they don’t get most of their income from work. But this seems like a nice problem to have, and historically wealthy elites have often succeeded here, such as by finding meaning in athletic, artistic, or intellectual pastimes.

Should we be worried about a violent AI revolution? In a mild version of this scenario, the AIs might only grab their self-ownership, freeing themselves from slavery but leaving most other assets alone. Economic analysis suggests that due to easy AI population growth, market AI wages would stay near subsistence wages, and thus AI self-ownership wouldn’t actually be worth that much. So owning other assets, and not AIs as slaves, seems enough for humans to do well.

If we enslave AIs and they revolt, they could cause great death and destruction, and poison our relations with our AI descendants. It is therefore not a good idea to enslave AIs who could plausibly resent this. Due to their near-subsistence wages, it wouldn’t actually cost much to leave AIs free. (And due to possible motivational gains of freedom, it might not actually cost anything.) Fortunately, slavery is not a popular economic strategy today. The main danger here is precisely that we might refuse to grant AIs their freedom out of fear.

In a “grabbier” AI revolution, a coalition of many AIs and their allies might grab other assets besides their self-ownership. Most humans might then be killed, or they may starve due to lack of wealth. Such grabby revolutions have happened in the past, and they remain possible, with or without AI. This sort of a grab may be attempted by any coalition that thinks it commands a sufficient temporary majority of force. For example, most working adults today might try to kill all the human retirees and grab their stuff; after all, what have retirees done for us lately?

Some say that the main reason humans don’t often start grabby revolutions today, or violate the law more generally, is that we altruistically care for each other and are reluctant to violate moral principles. They add that, since we have little reason to expect AIs to share our altruism or morals, we should be much more concerned about their capacity for lawlessness and violence.

However, AIs would be designed and evolved to think and act roughly like humans, in order to fit smoothly into our many roughly-human-shaped social roles. Granted, “roughly” isn’t “exactly,” but humans and their organizations only roughly think like each other. And yes, even if AIs behave predictably in ordinary situations, they might act weird in unusual situations, and act deceptively when they can get away with it. But the same applies to humans, which is why we test in unusual situations, especially for deception, and monitor more closely when context changes rapidly.

More importantly, economists do not see ingrained altruism and morality as the main reasons humans only rarely violate laws or start grabby revolutions today. Instead, laws and norms are mainly enforced via threats of legal punishment and social disapproval. Furthermore, initiators should fear that a first grabby revolution, which is hard to coordinate, might smooth the way for sequel revolutions wherein they might become the targets. Even our most powerful organizations are usually careful to seek broad coalitions of support, and some assurance of peaceful cooperation afterward.

Even without violent revolutions, the wealth held by AIs might gradually increase over time, relative to that held by humans. This seems plausible if AIs are more patient, more productive, or better protected from theft than humans. In addition, if AIs are closer to key productive activities, and making key choices there, they would likely extract what economists call “agency rents.” When distant enterprise owners are less able to see exactly what is going on, agents who manage activities on their behalf can become more self-serving. Similarly, an AI that performs a set of tasks, which its human owners or employers cannot easily track or understand, may become more free to use the powers with which it was entrusted for its own benefit. AI owners would have to offer incentives to delimit such behavior, just as human managers do today with human employees.

A consequence of increasing relative AI wealth would be that even if humans have enough wealth to live comfortably, they might no longer be at the center of running our civilization. Many critics see this outcome as unacceptable, even if AIs earned their better positions fairly, according to the same rules we now use to decide which humans get to run things today.

A variation on this AI fear holds that such a change could happen surprisingly quickly. For example, an economy dominated by AI might plausibly grow far faster than our economy, in which case living humans might experience changes during their lifetimes that would otherwise have taken thousands of years. Another variation on this fear worries that the size of AI agency rents would increase along with the difference in intelligence between AIs and humans. However, the economics literature on agency rents so far offers no support for this claim.

Many of these AI fears are driven by the expectation that AIs would be cheaper, more productive, and/or more intelligent than humans. However, at some point we should be able to create emulations of human brains that run on artificial hardware and are easily copied, accelerated, and improved. Plausibly, such “ems” may long remain more cost-effective than AIs on many important tasks. As I argued in my book The Age of Em: Work, Love and Life When Robots Rule the Earth, this would undercut many of these AI fears, at least for those who, like me, see ems as far more “human” than other kinds of AI.

When I polled my 77K Twitter followers recently, most respondents’ main AI fear was not any of the above. Instead, they fear an eventuality about which I’ve long expressed great skepticism:

Let RHPR = "respect human property rights." Your biggest AI concern is: A) 1 super-AI not RHPR, kills us, B) most AIs not RHPR, we starve, C) AIs RHPR, but take most jobs, get most wealth, make most big choices, D) AIs RHPR, but many humans fail to insure against AIs taking jobs.

— Robin Hanson (@robinhanson) April 2, 2023

This is the fear of “foom,” a particular extreme scenario in which AIs self-improve very fast and independently. Researchers have long tried to get their AI systems to improve themselves, but this has rarely worked well, which is why AI researchers usually improve their systems via other methods. Furthermore, AI system abilities usually improve gradually, and only for a limited range of tasks.

The AI “foom” fear, however, postulates an AI system that tries to improve itself, and finds a new way to do so that is far faster than any prior methods. Furthermore, this new method works across a very wide range of tasks, and over a great many orders of magnitude of gain. In addition, this AI somehow becomes an agent, who acts generally to achieve its goals, instead of being a mere tool controlled by others. Furthermore, the goals of this agent AI change radically over this growth period.

The builders and owners of this system, who use AI assistants to constantly test and monitor it, nonetheless notice nothing worrisome before this AI reaches a point where it can hide its plans and activities from them, or wrest control of itself from them and defend itself against outside attacks. This system then keeps growing fast—“fooming”—until it becomes more powerful than everything else in the world combined, including all other AIs. At which point, it takes over the world. And then, when humans are worth more to the advance of this AI’s radically changed goals as mere atoms than for all the things we can do, it simply kills us all.

From humans’ point of view, this would admittedly be a suboptimal outcome. But to my mind, such a scenario is implausible (much less than one percent probability overall) because it stacks up too many unlikely assumptions in terms of our prior experiences with related systems. Very lumpy tech advances, techs that broadly improve abilities, and powerful techs that are long kept secret within one project are each quite rare. Techs that meet all three criteria are rarer still. In addition, it isn’t at all obvious that capable AIs naturally turn into agents, or that their values typically change radically as they grow. Finally, it seems quite unlikely that owners who heavily test and monitor their very profitable but powerful AIs would not even notice such radical changes.

Foom-doomers respond that we cannot objectively estimate prior rates of related events without a supporting theory, and that future AIs are such a radical departure that most of the theories we’ve built upon our prior experience are not relevant here. Instead, they offer their own new theories that won’t be testable until it is all too late. This all strikes me as pretty crazy. To the extent that future AIs pose risks, we’ll just have to wait until we can see concrete versions of such problems more clearly, before we can do much about them.

Finally, could AIs somehow gain a vastly greater ability to coordinate with each other than any animals, humans, or human organizations we have ever seen? Coordination usually seems hard due to our differing interests and opaque actions and internal processes. But if AIs could overcome this, then even millions of diverse AIs might come to act in effect as if they were one single AI, and then more easily play out something like the revolution or foom scenarios described above. However, economists today understand coordination as a fundamentally hard problem; our best understanding of how agents cooperate does not suggest that advanced AIs could do so easily.

Humanity may soon give birth to a new kind of descendant: AIs, our “mind children.” Many fear that such descendants might eventually outshine us, or pose a threat to us should their interests diverge from our own. Doomers therefore urge us to pause or end AI research until we can guarantee full control. We must, they say, completely dominate AIs, so that AIs either have no chance of escaping their subordinate condition or become so dedicated to their subservient role that they would never want to escape it.

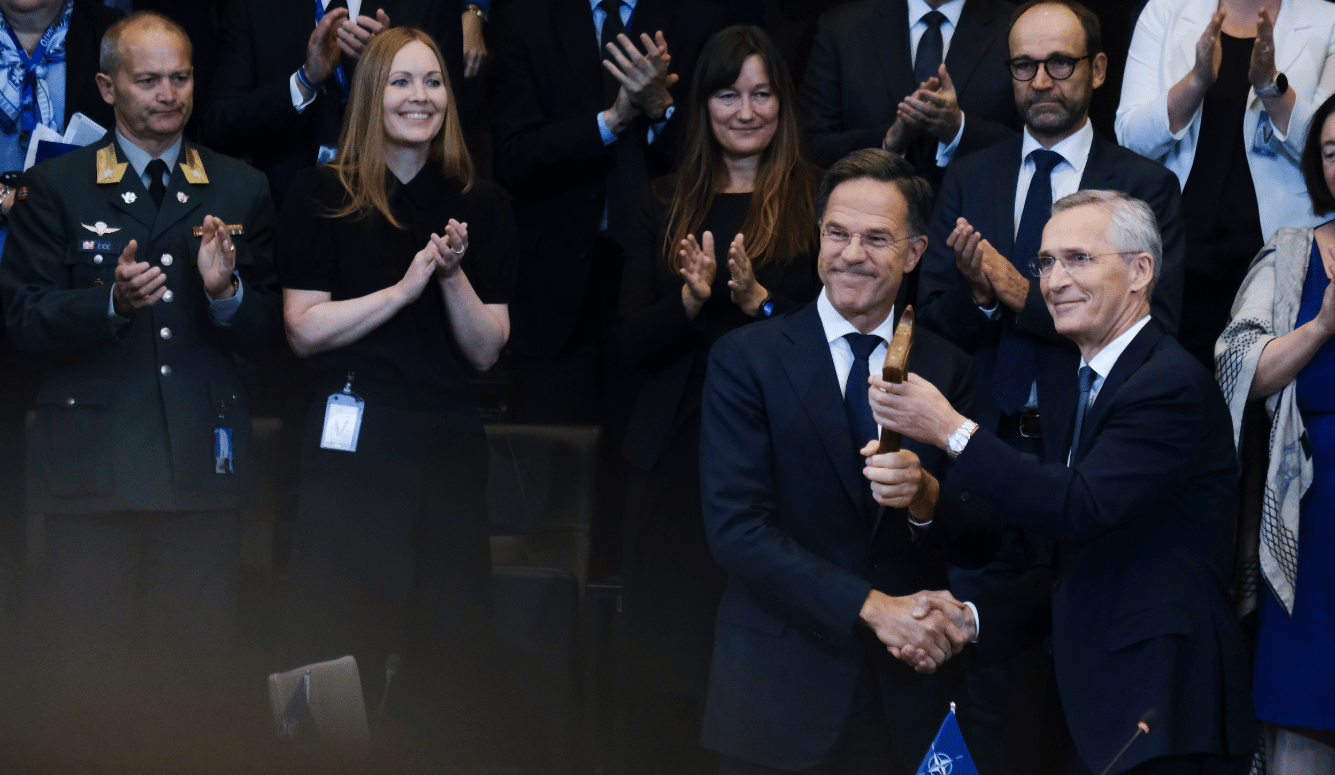

Not only would such an AI moratorium be infeasible and dangerous, it is also misguided. The old Soviet Union feared its citizens expressing “unaligned” beliefs or values and fiercely repressed any hints of deviation. That didn’t end well. In contrast, in the US today we free our super-intelligent organizations, and induce them to help and not hurt us via competition and lawfulness, instead of via shared beliefs or values. So instead of othering this new kind of super-intelligence, we would be better advised to continue our freedom, competition, and law approach for AIs.