
The Social Science Monoculture Doubles Down


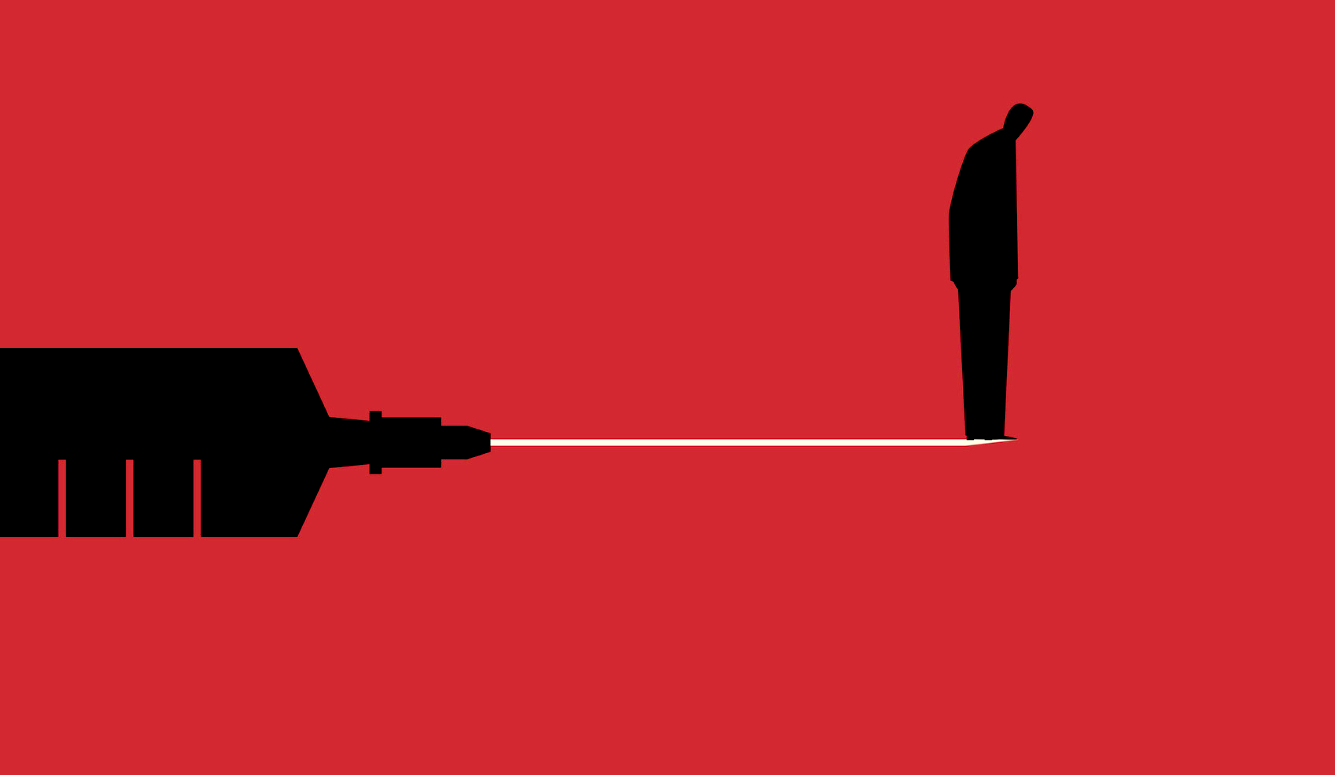

Over the past 18 months, a number of significant events have been interpreted through two entirely different worldviews: COVID-19 lockdowns; the rise of the BLM movement; the riots and violence in major cities; the US election process and its aftermath; and vaccine safety. Many influential commentators believe that these divergent perspectives arise from an epistemic collapse: that we have ceased to value facts, science, and truth, partly because trust in the institutions that adjudicate knowledge claims (universities, media, science, government) has eroded.

Many researchers in my own discipline, psychology, have rushed to the front lines, hoping to provide a remedy. But is it even plausible that we could help? Psychology, like most social science disciplines, is currently contributing more to the problem than to the solution. Although psychology has studied phenomena such as myside bias that drive these worrisome epistemic trends, when it attempts to tackle social issues itself, it is fraught with bias.

It is doubtful that social scientists can help adjudicate polarized social disagreements while their own disciplines and institutions are ideological monocultures. In universities, liberals outnumber conservatives by almost 10 to 1 in liberal arts departments and education schools, and by almost 5 to 1 even in STEM disciplines.1

Tests are harder when your enemies construct them

Cognitive elites like to insist that only they can be trusted to define good thinking. For instance, on questionnaires sometimes referred to as science trust or “faith in science” scales, respondents are asked whether they trust universities, or the media, or the results of scientific research on pressing social issues (I’m guilty of authoring one of these scales myself!). But if they answer that they do not trust university research, they are marked down on the assessment of their epistemic abilities and are categorized as science deniers.

Imagine that you are forced to take a series of tests on your values, morals, and beliefs. Imagine then that you are deemed to have failed the tests. When you protest that people like you had no role in constructing the tests, you are told that there will be another test in which you are asked to indicate whether or not you trust the test makers. When you answer that of course you don’t trust them, you are told you have failed again because trusting the test makers is part of the test. That’s how about half the population feels right now.

In short, cognitive elites load the tests with things they know and with content that privileges their own views. Then, when people just like themselves do well on the tests, they take it as validation of their own opinions and attitudes. (Interestingly, the problem I am describing here does not apply to intelligence tests, which, contrary to popular belief, are among the most unbiased of psychological tests.)2

The overwhelmingly left/liberal professoriate has been looking for psychological defects in their political opponents for some time, but the intensity of these efforts has increased markedly in the last two decades. The literature is now replete with correlations linking conservatism with intolerance, prejudice, low intelligence, close-minded thinking styles, and just about any other undesirable cognitive and personality characteristic. But most of these relationships were attenuated or disappeared entirely when the ideological assumptions behind the research were examined more closely.

The misleading conclusions drawn from those studies result from various flawed research methods. One of these is the “high/low fallacy”: researchers split a sample in half and describe one group as “high” in a trait like prejudice, even though their scores on the scale indicate very little antipathy. When the group labeled “high” also scores significantly higher on an index of ideological conservatism, the investigators then announce a conclusion primed for media consumption: “racism is associated with conservatism.”
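To make the high/low fallacy concrete, here is a minimal Python sketch using invented numbers. The 1-to-7 scale, the neutral midpoint of 4, and the ten scores are all hypothetical and come from no actual study. Every respondent sits below the midpoint, yet a median split still produces a group that can be labeled “high” in prejudice.

```python
from statistics import mean, median

# Hypothetical data: prejudice scores on an assumed 1-to-7 scale, where 4 is
# the neutral midpoint. All ten invented respondents score below 4, i.e. they
# express little or no antipathy.
MIDPOINT = 4.0
scores = [1.2, 1.5, 1.8, 2.0, 2.1, 2.4, 2.6, 2.9, 3.1, 3.3]


def median_split(values):
    """Split values at the sample median into 'low' and 'high' groups."""
    cut = median(values)
    low = [v for v in values if v <= cut]
    high = [v for v in values if v > cut]
    return low, high


low, high = median_split(scores)

# The "high" group is high only relative to this sample, not on the scale.
print(f"median cut point: {median(scores):.2f}")
print(f"'high' group mean: {mean(high):.2f}")
print(f"any 'high' scorer above the midpoint? {any(v > MIDPOINT for v in high)}")
```

The split point is set by the sample, not by the scale, so “high” here means only “less low than the other half.” Any correlation between that label and conservatism says nothing about whether either group actually harbors antipathy.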

Three types of scales (variously called racial resentment, symbolic racism, and modern racism scales) have been particularly prominent in attempts to link racism with conservative opinions. Many of these racism questionnaires simply build in correlations between prejudice and conservative views. Early versions of these scales included items on policy issues such as affirmative action, crime prevention, busing to achieve school integration, or attitudes toward welfare reform, and then scored any deviation from liberal orthodoxy as a racist response. Even endorsing the belief that hard work leads to success will result in a higher score on a “racial resentment” scale.

The social science monoculture yields this sequence repeatedly. We set out to study a trait such as prejudice, dogmatism, authoritarianism, intolerance, or close-mindedness, where one end of the trait continuum is good and the other end is bad. The scale items are constructed so that conservative social policy preferences are defined as negative. Many scientific papers are published establishing the “link” between conservatism and negative psychological traits. Articles then appear in liberal publications like the New York Times informing their readers that research psychologists (yes, scientists!) have confirmed that liberals are indeed psychologically superior people. After all, they do better on all of the tests that psychologists have constructed to measure whether people are open-minded, tolerant, and fair.

The flaws in these scales were pointed out as long ago as the 1980s. Our failure to correct them undermines public confidence in our conclusions—as it should. After a decade or two, a few researchers finally asked if there may have been theoretical confusion in the concept. Subsequent research showed that the proposed trait was misunderstood, or that its negative aspects can be found on either side of the ideological spectrum.

For example, Conway and colleagues created an authoritarianism scale on which liberals score higher than conservatives. They simply took old items that had disadvantaged conservatives and substituted content that disadvantaged liberals. So:

Our country will be great if we honor the ways of our forefathers, do what the authorities tell us to do, and get rid of the “rotten apples” who are ruining everything.

…was changed to:

Our country will be great if we honor the ways of progressive thinking, do what the best liberal authorities tell us to do, and get rid of the religious and conservative “rotten apples” who are ruining everything.

After the change, liberals scored higher on “authoritarianism” for the same reason that made the old scales correlate with conservatism—the content of the questionnaire targeted their views specifically.

Biased item selection that favors our “tribe”

Although errors are an inevitable part of scientific inquiry, and it is better that they are corrected late than never, the larger problem is that psychology’s errors are always made in the same direction (just like at your local grocery, where things “ring up wrong” in the overcharge direction much more often than the reverse). I’ve done it myself—in the 1990s, when my colleagues and I constructed a questionnaire measuring actively open-minded thinking (AOT). One of the key processing styles examined by the AOT concept is the subject’s willingness to revise beliefs based on evidence. Our early scales included several items designed to tap this processing style. But my colleague Maggie Toplak and I discovered in 2018 that there is no generic belief revision tendency. Belief revision is determined by the specific belief that people are revising. As originally written, our items were biased against religious (and conservative) subjects.

Likewise, Evan Charney has argued that some items measuring openness to experience on the much-used Revised NEO Personality Inventory require the subject to have specific liberal political affinities in order to score highly. For instance, the subject is scored as close-minded if they respond affirmatively to the item “I believe that we should look to our religious authorities for decisions on moral issues.” But there is no corresponding item asking if the subject is equally reliant on secular authorities. Is it more close-minded to rely on a theologian for moral guidance than it is to rely on a bioethicist? To a university-educated liberal cosmopolitan the answer is clearly yes, but the answer is less obvious to those who didn’t benefit from having their own tribe construct the test.

Cherry-picking scale items to embarrass our enemies seems to be an irresistible tendency in psychology. Studies of conspiracy beliefs have been plagued by item selection bias for some years now. Some conspiracy theories are prevalent on the Left; others are prevalent on the Right; many have no association with ideology at all. (The conspiracy belief subtest of our Comprehensive Assessment of Rational Thinking, the CART, sampled 24 different conspiracy theories.) It is therefore trivially easy to select conspiracy theories that produce ideological correlations in one direction or the other, but those correlations reveal nothing about a subject’s underlying psychological structure. They are merely sampling artifacts.
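The point about sampling artifacts can be shown with a few lines of arithmetic. The sketch below is hypothetical throughout: the five item labels and their endorsement rates are invented, and the “gap” is a simple difference in mean endorsement rather than the correlation coefficient a real study would report. The respondents never change; only the choice of items does.

```python
from statistics import mean

# Hypothetical conspiracy items: (label, share of left-leaning respondents
# endorsing, share of right-leaning respondents endorsing). All numbers are
# invented for illustration.
ITEMS = [
    ("item_A", 0.30, 0.10),  # more popular on the Left
    ("item_B", 0.25, 0.05),  # more popular on the Left
    ("item_C", 0.10, 0.30),  # more popular on the Right
    ("item_D", 0.05, 0.25),  # more popular on the Right
    ("item_E", 0.20, 0.20),  # no ideological association
]


def ideology_gap(selected):
    """Mean endorsement on the Right minus mean endorsement on the Left.

    A positive value makes the Right look more conspiratorial; a negative
    value makes the Left look more conspiratorial.
    """
    left = mean(item[1] for item in selected)
    right = mean(item[2] for item in selected)
    return right - left


def pick(labels):
    """Return the subset of ITEMS whose labels are in `labels`."""
    return [item for item in ITEMS if item[0] in labels]


full = ideology_gap(ITEMS)
right_heavy = ideology_gap(pick({"item_C", "item_D", "item_E"}))
left_heavy = ideology_gap(pick({"item_A", "item_B", "item_E"}))

print(f"all five items:       {full:+.3f}")
print(f"right-leaning sample: {right_heavy:+.3f}")
print(f"left-leaning sample:  {left_heavy:+.3f}")
```

With all five items the two groups look identical; sampling only the right-leaning items makes the Right look more conspiratorial, and sampling only the left-leaning items reverses the sign. Nothing about the respondents’ psychology has changed between the three results.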

Researcher Dan Kahan has shown that the heavy reliance of science knowledge tests on items involving belief in climate change and evolutionary origins has built correlations between liberalism and science knowledge into such measures. Importantly, his research has demonstrated that removing human-caused climate change and evolutionary origins items from science knowledge scales not only reduces the correlation between science knowledge and liberalism, but it also makes the remaining test more valid. This is because responses on climate and evolution items are expressive responses signaling group allegiance rather than informed scientific knowledge.

All studies of the “who is more knowledgeable” variety in the political domain are at risk of being compromised by such item selection effects. Over the years it has been common for Democrats to call themselves the “party of science”—and they are when it comes to climate science and belief in the evolutionary origins of humans. But when it comes to topics like the heritability of intelligence and sex differences, the Democrats suddenly become the “party of science denial.” Whoever controls the selection of items will find it difficult not to bias the selection according to their own notion of what knowledge is important.

Which misinformation is it important to combat and who decides?

The presidential election of 2016 and the COVID-19 pandemic focused societal and research attention on the topic of misinformation. Psychological studies of the correlates of the spread of misinformation and conspiracy beliefs have poured out, but many of them have failed to take seriously the selection effects I have discussed here. Clearly, psychologists should focus on salient current events at critical times, so belief in QAnon and belief in election-changing voting fraud were legitimate foci of psychological attention, as was their correlation with ideology. But other historic events in 2020–2021 went unexamined in research on misinformation. Significant nationwide demonstrations occurred. Distorted beliefs about crime and race relations in the United States are relevant to how one understands the demonstrations and the participants in them, and this misinformation is also correlated with ideology.

For example, a study conducted by the Skeptic Research Center found that over 50 percent of subjects who labeled themselves as “very liberal” thought that 1,000 or more unarmed African-American men were killed by the police each year. The actual number is fewer than 100 (over 21 percent of the very liberal subjects thought that the number was 10,000 or more). The subjects identifying as very liberal also thought that over 60 percent of the people killed by the police in the United States are African-American. The actual percentage is approximately 25 percent. In a different study, 81 percent of Biden voters thought young black men were more likely to be killed by the police than to die in a car accident (when the probability is strongly in the other direction), whereas less than 20 percent of Trump voters believed this misinformation.

Zach Goldberg has reported a reanalysis of a Cato/YouGov poll showing that over 60 percent of self-labelled “very liberal” respondents thought that “the United States is more racist than other countries.” Such a proposition is not strictly factual, but it does suggest a lack of context if one responds “strongly agree.” In fact, many propositions in so-called “misinformation” scales are less than factual. Both the media and pollsters throughout 2020 often labeled respondents misinformed if they indicated in questionnaires that the BLM demonstrations of 2020 involved “widespread” violence or that they were not “mostly peaceful.” There is enough interpretive latitude in terms such as “widespread” or “mostly peaceful” (as there is in “more racist than other countries”) that such phrasing should be avoided in questionnaires intended to label part of the populace as misinformed.

Selection bias also plagues the “trust in experts” measures that have recently become popular among behavioral scientists. These scales ask whether the subject would accept recommendations from several groups, including scientists, government officials, journalists, and lawyers, within each group’s area of expertise. The researchers clearly view low-trust subjects as epistemically defective for failing to rely on expert opinion when forming their beliefs. Yet the respondent cannot verify the specific expertise of an expert in these domains, and absent that verification it is impossible to judge the optimal willingness to accept expert opinion. The investigators nonetheless view more acceptance of information from experts as better: maximum acceptance (answering “complete acceptance” on the scale) is implicitly deemed optimal in the statistical analysis of such measures.

How times have changed. In the 1960s and ’70s it was viewed as progressive to display skepticism toward these groups of experts. Encouraging people to be more skeptical toward government officials and journalists and universities was considered progressive because it was thought that the truth was being obscured by the self-serving interests of the supposed authorities listed on current “expert acceptance” questionnaires! Yet when conservatives now evince skepticism on these scales, it is viewed as an epistemological defect.

Related to these “trust in experts” scales are the “trust in science” scales in the psychological literature (or their complement, “anti-scientific attitude” scales). I have constructed such a scale, but now consider it to be a conceptual error and prone to misuse. Asking a subject if they believe “science is the best method of acquiring knowledge” is like asking them if they have been to college. At university one learns to endorse items like this. Every person with a BA knows that it is a good thing to “follow the science.” That same BA equips us to critique our fellow citizens who don’t know that “trust the science” is a codeword used by university-educated elites.

So I have removed the anti-science attitudes subtest from my lab’s omnibus measure of rational thinking (the Comprehensive Assessment of Rational Thinking). I don’t think it provides a clean and unbiased measure of that construct. If we want to understand people’s attitudes toward scientific evidence, we have to take a domain-specific belief that a person holds on a scientific matter, present them with contrary evidence, and see how they assimilate it (as some studies have done). You can’t just ask people if they “follow the science” on a questionnaire. It would be like constructing a test and giving half the respondents the answer sheet.

The authors of these questionnaires can often be quite aggressive in demanding extreme allegiance to a particular worldview if the respondent is to avoid the label “anti-science.” For example, one scale requires the subjects to affirm propositions such as “We can only rationally believe in what is scientifically provable,” “Science tells us everything there is to know about what reality consists of,” “All the tasks human beings face are soluble by science,” “Science is the most valuable part of human culture.” This is a quite strict and uncompromising set of beliefs to have to endorse to avoid ending up in the “low faith in science” group in an experiment!

Let the other half of the population in

We lament the skepticism directed at university research by about half of the US public, yet we conduct our research as if the audience were only a small coterie sharing our assumptions. Consider a study that attempted to link the conservative worldview with “the denial of environmental realities.” Subjects were presented with the following item: “If things continue on their present course, we will soon experience a major environmental catastrophe.” If the subject did not agree with this statement, they were scored as denying environmental realities. The term denial implies that what is being denied is a descriptive fact. However, without a clear description of what “soon” or “major” or “catastrophe” mean, the statement itself is not a fact—and so labeling one set of respondents as science deniers based on an item like this reflects little more than the tendency of academics to attach pejorative labels to their political enemies.

This tendency to assume that a liberal response is the correct response (or ethical response, or fair response, or scientific response, or open-minded response) is particularly prevalent in the subareas of social psychology and personality psychology. It often takes the form of labeling any legitimate policy difference with liberalism as some kind of intellectual or personality defect (dogmatism or authoritarianism or racism or prejudice or science denial). In a typical study, the term “social dominance orientation” is used to describe anyone who doesn’t endorse both identity politics (emphasizing groups when thinking about justice) and the new meaning of equity (equality of group outcomes). A subject who doesn’t endorse the item “group equality should be our ideal” is scored in the direction of having a social dominance orientation (wanting to maintain the dominant group in a hierarchy). A conservative individual or an old-style liberal who values equality of opportunity and focuses on the individual will naturally score higher in social dominance orientation than a left-wing advocate of group-based identity politics. A conservative subject is scored as having a social dominance orientation even though group outcomes are not salient in their own worldview. In such scales, the subject’s own fairness concepts are ignored and the experimenter’s framework is instead imposed upon them.

The study goes on to define “skepticism about science” with just two items. The first, “We believe too often in science, and not enough in faith and feelings,” builds into the scale a direct conflict between religious faith and science that many subjects might not actually experience, thus inflating correlations with religiosity. The second is “When it comes to really important questions, scientific facts don’t help very much.” If a subject happens to believe that the most important things in life are marriage, family, raising children with good values, and being a good neighbor—and thus answers that they agree on this item, they will get a higher score on this science skepticism scale than a person who believes that the most important things in life are climate change and green technology. Neither of these items shows that conservative subjects are anti-science in any way, but they ensure that conservatism/religiosity will be correlated with the misleading construct that names the scale, “science skepticism.” It is no wonder that only Democrats strongly trust university research anymore.

Doubling down: The heart of our epistemic crisis

As academia’s ideological bias has become more obvious and public skepticism about research has increased, academia has become more insistent that relying on university-based conclusions is the sine qua non of proper epistemic behavior. The second-order skepticism toward this demand is in turn treated as new evidence that the opponents of left-wing university conclusions are indeed anti-intellectual know-nothings. Institutions, administrators, and faculty do not seem to be concerned about the public’s plummeting trust in universities. Many people, though, see the monoculture as a problem. If academics really wanted to address it, they would be turning to mechanisms such as those recommended by the Adversarial Collaboration Project at the University of Pennsylvania (see Clark & Tetlock).

Adversarial collaboration seeks to broaden the frameworks within research groups by encouraging disagreeing scholars to work together. Researchers from opposing perspectives design methods that both sides agree constitute a fair test and jointly publish the results. Both sides participate in the interpretation of the findings and conclusions based upon pre-agreed criteria. Adversarial collaborations prevent researchers from designing studies likely to support their own hypotheses and from dismissing unexpected results. Most importantly, conclusions based on adversarial collaborations can be fairly presented to consumers of scientific information as true consensus conclusions and not outcomes determined by one side’s success in shutting the other out.

There is a major obstacle, however. It is not certain that universities will have enough conservative scholars in the future to participate in the needed adversarial collaborations. The diversity statements that candidates for faculty positions must now write are a significant impediment to increasing intellectual diversity in academia. A candidate will hurt their chances of securing a faculty position if they refuse to affirm the tenets of the woke successor ideology, or if they hesitate to pledge allegiance to its many terms and concepts (diversity, systemic racism, white privilege, inclusion, equity) without getting too picky about their lack of operational definition. Such statements function like ideological loyalty oaths. If you don’t intone the required shibboleths, you won’t be hired.

Other institutions for adjudicating knowledge claims in our society have been too cavalier in their dismissal of the need for adversarial collaboration. When fact-checking fails in the political domain, the slip-ups often seem to favor the ideological proclivities of the liberal media outlets that sponsor the fact-checkers. Fact-checking websites were quick to refute the Trump administration’s claims that a vaccine would be available in 2020. NBC News told us that “experts say he needs a miracle to be right.” Of course, we now know the vaccine rollout began in December 2020.

As the COVID-19 pandemic was unfolding, news organizations had no business treating ongoing scientific disputes about, say, the origins of the virus or the efficacy of lockdowns, as if they were matters of established “fact” that journalists could reliably check. They were, as Zeynep Tufekci phrased it in an essay, “checking facts even if you can’t.” Unfortunately, this was characteristic of fact-checking organizations and many social science researchers studying the spread of misinformation throughout the pandemic.

Fact-checking organizations seem to be oblivious to a bias that is more problematic than inaccuracies in the fact-checks themselves: myside bias will drive the choice of which statements, out of thousands, get fact-checked at all. Fact-checkers have become just another player in the unhinged partisan cacophony of our politics. Many of the leading organizations are populated by progressive academics in universities, others are run by liberal newspapers, and some are connected with Democratic donors. It is unrealistic to expect organizations like these to win bipartisan trust and respect among the general population unless they commit themselves to adversarial collaboration.

The closed loop of media and academia

I won’t chronicle all of the institutional failures we have witnessed in the last 10 years, because the examples are well-known.3 It is worth noting, though, that increased interactions between epistemologically failing institutions have helped to create a closed loop. At the Heterodox Academy blog, Joseph Latham and Gilly Koritzky discuss an academic paper purporting to uncover medical bias in the testing and treatment of African-Americans with COVID-19. In support of their conclusion, the paper’s authors cite a study by a biotech company called Rubix Life Sciences. However, Latham and Koritzky show that the relevant comparisons between Caucasian and African-American patients were not even examined in the Rubix paper. Latham and Koritzky found that other scientific papers alleging racial bias in medical treatment also cited the Rubix study. Not one of the scholars citing the Rubix study could have actually read it, or they would have discovered it is irrelevant. So where did they find it?

Latham and Koritzky found that the study was first mentioned as evidence for racial bias in medical treatments during an NPR story in April 2020. In other words, academic researchers cited a paper they’d heard about on public radio without actually reading it—a stunning example of the negative synergy between the myside bias of the media and of academia.

Restoring epistemic legitimacy to the social sciences

Our society-wide epistemic crisis demands institutional reform. Academics continue to pile up studies of the psychological “deficiencies” of voters who don’t pull the Democratic lever or who voted for Brexit. They amass conclusions on all the pressing issues of the day (immigration, crime, inequality, race relations) using research teams without any representation outside of the left/liberal progressive consensus. We—universities, social science departments, my tribe—have sorted by temperament, values, and culture into a monolithic intellectual edifice. We create tests to reward and celebrate the intellectual characteristics with which we define ourselves, and to skewer those that we deplore. We have been cleansing disciplines of ideologically dissident voices for 30 years now with relentless efficiency. The broader population, with its wider range of psychological characteristics, no longer trusts us.

The transparency reforms in the open science movement are effective mechanisms for addressing the replication crisis in social science, but they will not fix the crisis of trust discussed here. It’s not just transparency that our field needs, it’s tolerance of other viewpoints. We need to let the other half of the population in.

In a small paperback book for psychology undergraduates that I first published in 1986, I told the students that they could trust the scientific process in psychology, not because individual investigators were invariably objective, but because any biases a particular investigator might have would be checked by many other psychologists who held different viewpoints. When I was writing the first edition in 1984–1985, psychology had not yet become the handmaiden of a partisan media in a closed monocultural loop. That book has gone through 11 editions now, and I can no longer offer my students that assurance. On most socially charged public policy issues, psychology no longer has the diversity to ensure that the cross-checking procedure can operate.

Obviously, greater intellectual diversity among researchers would be a key corrective, but adversarial collaboration is also critical. You can’t study contentious topics properly with a lab full of people who think alike. If you try to do so, you will end up creating scale items that produce correlations that are inaccurate by a factor of two … like I once did. Alas, the field whose job it is to study myside bias won’t take steps to control for this epistemic flaw in its own work.

This essay expands on themes from the author's latest book: The Bias That Divides Us: The Science and Politics of Myside Thinking (MIT Press).

References:

1 Buss & von Hippel, 2018; Duarte et al., 2015; Ellis, 2020; Jussim, 2021; Kaufmann, 2020b; Klein & Stern, 2005; Langbert, 2018; Langbert & Stevens, 2020; Lukianoff & Haidt, 2018; Peters et al., 2020. See References here.

2 Deary, 2013; Haier, 2016; Plomin et al., 2016; Rindermann et al., 2020; Warne et al., 2018; Warne, 2020. See References here.

3 Campbell & Manning, 2018; Cancelled People Database; Ellis, 2020; Jussim, 2018, 2019; Kronman, 2019; Lukianoff & Haidt, 2018; McWhorter, 2021. See References here.