
Could an Invisible Military Laser Steal Your Privacy?


In early 2006, I sat in the Pashtun tribal areas on the Af/Pak border. When not rinsing grit out of my teeth from the thick fog in the air, I awaited information from capricious “allies” about whether their informant could identify a senior al Qaeda leader whom I’ll call “The Moroccan.” At the time, he was al Qaeda’s regional operational commander and well worth removing from the board.

I lusted for certainty on the whereabouts of the Moroccan. He remained stubbornly elusive, while I chafed with impatience aggravated by living conditions halfway between a prison and an armed bunker. I watched tantalizing video from “eyes in the sky” of vehicles associated with the Moroccan heading towards the funeral of a militant. The funeral was attended by hundreds of men, all sporting traditional bushy beards and the long, baggy shalwar kameez Pashtuns favored, topped by either a turban or a pakol cap. Making a positive ID in that crowd was like locating a particular stalk of wheat in a bumper crop. Was the Moroccan there? Or in a safehouse 100 miles away?

Because we tried not to create more enemies with indiscriminate attacks than we killed, we didn’t take a shot that day. This was only a few months after the Damadola strike, which was intended to kill al Qaeda’s No. 2, Ayman al-Zawahiri, but instead killed 19 locals. The Moroccan remained alive until a few years later, when he was killed by a Hellfire missile.

Unfortunately, before his subsequent demise, the Moroccan allegedly helped assassinate a major political reformer hoping to modernize a destitute and corrupt country.

The face recognition genie

Identification tech has come a long way since the hunt for the Moroccan, but not always to society’s benefit. Face recognition is still not on most Westerners’ list of top 100 issues to worry about. And it should be.

Even Google, years before it quietly axed the motto, “Don’t Be Evil,” opted out of face recognition tech. Then-Google CEO Eric Schmidt explained it was the only tech Google had built and then decided not to use because it could be used “in a very bad way.”

Clare Garvie, an attorney at the Georgetown Center on Privacy and Technology, testified before Congress in 2019 about the misuse of face recognition technology in the US. It wasn’t pretty.

Garvie told the House Committee on Oversight and Reform, “Face recognition presents a unique threat to the civil rights and liberties protected by the US Constitution.”

After her testimony, I asked Garvie why she called it “unique.” She told me face recognition chills First Amendment free speech and, as used by many police departments, outright violates the Fourth Amendment’s protection against unreasonable search and the 14th Amendment’s guarantee of due process. She obtained over 20,000 pages of documents through Freedom of Information Act requests and lawsuits, and found that the majority of US police departments that had face recognition tech were not forthcoming about how they used it, or even whether they had it.

The NYPD, for example, claimed it had no documents on face recognition. It later turned over 2,000 pages on it when forced to. The NYPD was found to have fabricated evidence repeatedly. In one case, when face recognition software couldn’t identify a suspected beer thief, detectives who thought he looked like Cheers actor Woody Harrelson fed Harrelson’s photo into the software to try to force a match. Police routinely used falsified “probe photos” when they couldn’t get a match from the software.

Privacy laws and civil rights lawsuits, if they ever catch up to surveillance overreach, take years to get through the courts, at tremendous expense. Clearview AI, one of the biggest vendors of face recognition data and services to police, private companies, and even rich individuals, faces multiple class action lawsuits for a litany of legal and regulatory violations. Clearview’s clients include 2,200 domestic and foreign law enforcement agencies, the DOJ, DHS, universities, Walmart, Best Buy, Macy’s, and on and on. Did Clearview AI ask your permission to use photos you posted to Facebook or Twitter or Instagram in a criminal lineup? No? Clearview AI lets police, or a random corporate cog at Walmart, do it anyway, even in localities where that has been specifically ruled illegal.

To make matters worse, face recognition tech can do a horrible job at identifying non-white suspects, putting non-Caucasians at higher risk of “false positives,” bad matches that can get someone arrested for a crime they did not commit. Per 2019 testing by the National Institute of Standards and Technology (NIST), “False positive rates often vary by one or two orders of magnitude (i.e., 10x, 100x),” depending on the racial or ethnic group being tested.
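To put NIST’s “one or two orders of magnitude” in concrete terms, here is a rough back-of-the-envelope sketch in Python. The baseline false positive rate and the number of comparisons are assumptions chosen as round numbers for illustration, not figures from NIST’s testing. The only point is that the same system, run the same number of times, produces 10 or 100 times as many wrong matches for the groups it handles worst.

```python
# Back-of-the-envelope sketch: what a 10x or 100x higher false positive
# rate means in absolute numbers. ASSUMPTIONS: the baseline rate and the
# comparison count are illustrative round numbers, not NIST data.

BASELINE_FALSE_POSITIVE_RATE = 0.0001  # assumed: 1 wrong match per 10,000 comparisons
COMPARISONS = 1_000_000                # assumed number of face comparisons run


def expected_false_positives(rate: float, comparisons: int) -> float:
    """Expected wrong matches = false positive rate x number of comparisons."""
    return rate * comparisons


def compare_groups(multipliers=(1, 10, 100)) -> dict[int, int]:
    """Map each rate multiplier (1x baseline, 10x, 100x) to expected wrong matches."""
    return {
        m: round(expected_false_positives(BASELINE_FALSE_POSITIVE_RATE * m, COMPARISONS))
        for m in multipliers
    }


if __name__ == "__main__":
    for multiplier, wrong in compare_groups().items():
        print(f"{multiplier:>3}x baseline rate: about {wrong:,} wrong matches")
```

Run as a script, it prints about 100, 1,000, and 10,000 wrong matches for the baseline, 10x, and 100x cases. Each of those wrong matches is a potential lead pointing at an innocent person.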

Amazon also sells a face recognition service, Rekognition. Rekognition has been excoriated for providing inadequate, incorrect, or nonexistent training and documentation to clients, which inevitably leads to misuse. In a Washington Post article on Rekognition, the law enforcement agency that helped develop it, the Washington County Sheriff’s Office in Oregon, said it had never had a complaint from defendants or defense attorneys about its use of face recognition software. But detective Robert Rookhuÿzen also said: “Just like any of our investigative techniques, we don’t tell people how we catch them. We want them to keep guessing.”

Isn’t that obstruction of justice? US police are required to share their evidence with defense attorneys, particularly exculpatory evidence, such as the fact that a face recognition search for a suspect generated multiple possible matches. As Georgetown’s Garvie pointed out, refusing to share those matches is a breach of the 14th Amendment.

Clearview AI and Rekognition are but two of dozens of developers of face recognition technology in the US. While much of it has been improving, it is still a mixed bag. Some versions of it can achieve a 99 percent match rate under ideal conditions. Under less-than-ideal conditions, that number can fall to 50 percent. And the rate of accurate matches from composite sketches is unbelievably low, with as few as 20 percent valid matches.

None of these shortfalls are highlighted to the public, defendants, and quite frequently, even the police using the face recognition systems. Instead, cops are told by vendors like Amazon that it works like magic. Per Rekognition’s website, “Deep learning uses a combination of feature abstraction and neural networks to produce results that can be … indistinguishable from magic.” Perhaps because “magic” is involved, some police don’t seem to grasp that face recognition has to be used within pre-existing rules of evidence and precedent.

Some police agencies do require face recognition matches to be reviewed by trained human experts, as the FBI does. But as Steven Talley can attest, human face recognition experts are not infallible either.

Talley was falsely arrested twice for bank robbery even after police had video footage showing Talley at his job while the robbery occurred. The second arrest, disregarding both fingerprint evidence and a rock-solid alibi, was based on the opinion of an FBI face recognition expert.

The arrests cost Talley his job, home, marriage, and access to his children. Talley also suffered a broken sternum, broken teeth, ruptured discs, blood clots, nerve damage, and a fractured penis during the first brutal false arrest.

Face recognition is a shinier, sexier iteration of the implicit promise justifying mass installation of CCTVs years ago. It ran, “If only we unleash unblinking and ever-present surveillance technology, we’ll reap a harvest of safety.”

The facts show otherwise. The largest-ever meta-analysis of surveillance cameras, covering 40 years of data, found that they reduce car theft and other property crime by 16 percent, and violent crime not at all. They can help catch violent criminals and terrorists after the fact, but they do not prevent violent crime.

Jetson

In 2019, the MIT Technology Review reported the US Special Operations Command (SOC) had asked for a new system for positive identifications on the battlefield. The goal was to help snipers ID terrorist/insurgent targets.

The gadget was dubbed Jetson. It is an infrared laser that, its developers claim, uses laser vibrometry to identify an individual in 30 seconds, at distances of 200 meters (close to 700 ft), via his or her heartbeat (a “heartprint,” if you will) with 95 percent or greater accuracy. The 200-meter range is likely an underestimate of what Jetson can do, as is the 95 percent accuracy figure. Steward Remaly, the program manager who ran Jetson development for the DOD’s Combating Terrorism Technical Support Office (CTTSO)*, told MIT Technology Review that longer ranges and greater accuracy should be possible (and the DOD rarely publicizes the full capability of new gear).

The first mention of Jetson I found was in the CTTSO’s 2017 Review Book, which said it would ID targets within five seconds. That five-second figure was absent from the CTTSO’s 2018 Review Book. When the MIT story appeared a year later, in 2019, it said 30 seconds, as did all subsequent coverage. We don’t know which figure is more accurate.

In February 2021, with help from DOD public affairs, I interviewed Remaly, the Jetson program manager at CTTSO. Remaly was amiable but vague in most of his responses. The main takeaways from the interview were that Jetson was developed by Ideal Innovations Incorporated, aka I3, working with Washington University in St. Louis, and that Jetson development started in 2010.

A start-date of 2010 puts a different spin on Jetson’s status. Instead of a pilot project cobbled together in 2017–2018, Jetson is the fruit of eight years of R&D, suggesting a mature piece of tech was delivered to the Army by the CTTSO. The CTTSO’s 2018 Review Book designated Jetson a “completed project” almost a year before the MIT article ran. The Army has now had that completed project for at least two years, possibly longer.

And it was impressive when it was handed over. The CTTSO’s Remaly told me: “It was great equipment; I’ll tell you that. And Ideal Innovations and Washington University frankly did a great job on the parts they were asked to work on, and they got it to the stage that we needed it.”

A win for the good guys?

If the team hunting the Moroccan had Jetson technology, could we have taken him out? Possibly. Certainly, it could have helped figure out who wasn’t the Moroccan, and ruling out targets is huge in a manhunt. For want of a nail, the shoe was lost, etc. The team I served on couldn’t identify the Moroccan in a sea of Pashtun fighters. This meant he lived on to continue to wreak havoc.

You don’t join the military or the intelligence community (and I’ve been in both) without endorsing the sentiment behind the quote often attributed to Orwell: “We sleep soundly in our beds because rough men stand ready in the night to visit violence on those who would do us harm.” My natural impulse is to want to put more effective tools in the hands of those “rough men.”

Reducing unintended battlefield deaths is good. It is not just a reduction in needless killing; one of the biggest creators of motivated insurgents/terrorists is the death of innocents. Jetson would be a boon on asymmetrical battlefields like Afghanistan and Iraq, where only the Americans and our allies wear uniforms and follow formal codes like “rules of engagement.” While we abide by self-imposed constraints, insurgents callously use civilians as human shields, and then gleefully make hay from the collateral damage.

If Jetson works as advertised (or quite possibly, much better), it will make that tactic less effective and hopefully reduce our enemies’ reliance on it.

So, hooray for our SOC warriors and intel operators getting Jetson.

Unfortunately, we have no reason to think only those two groups will get it. For a surveillance technology as game-changing as Jetson, the battlefield is a tiny fraction of the potential market.

You can’t hide from Unique

A challenge in reporting on Jetson was finding cardiologists to examine its central claim—the mysterious ability to ID people via remote measurement of their heartbeat. The majority of cardiologists I contacted declined to comment about Jetson, and wouldn’t say why.

Dr. Ronald Berger of Johns Hopkins is a cardiologist and a biomedical engineer and a professor of both. Despite Dr. Berger’s stellar credentials, he was also reluctant to comment. I told Dr. Berger that Steward Remaly said Jetson produced an ECG-like reading, but I speculated that Remaly might have used the term ECG because it is a common term for a device that measures the heart.

Dr. Berger emailed back: “Seems possible (for Jetson) to get an assessment of heart rate, but not ECG … Even if you had an ECG, it’s not quite like a fingerprint. Even if it were, you would need to know their individual pattern ahead of time in order to ‘recognize’ the individual. If all you have is a pulse reader, then I cannot see how that would allow for individual identification.”

“Sorry,” Dr. Berger wrote, “I don’t know anything about the technology… I don’t really want to guess.”

Dr. Berger was right to be hesitant. In trying to pierce the veil of claims surrounding Jetson, and figure out just what the laser is measuring, I started to think it might work via “computer aided auscultation” (i.e., a fancy laser stethoscope). The hypothesis fit many known facts and suppositions.

But I was wrong.

Further digging invalidated the auscultation hypothesis. A tip identified the engineer who ran Jetson development at Washington University (he did not respond to requests for comment). A review of his work turned up the 2009 research paper, ‘Laser Doppler Vibrometry Measurements of the Carotid Pulse: Biometrics Using Hidden Markov Models.’

Jetson development began the year after this paper was published. And the smoking gun, Jetson’s mechanism of action, was in the abstract.

Small movements of the skin overlying the carotid artery, arising from pressure pulse changes in the carotid during the cardiac cycle, can be detected using the method of Laser Doppler Vibrometry (LDV) … these variations are shown to be informative for identity verification and recognition … The resulting biometric classification performance confirms that the LDV signal contains information that is unique to the individual. [emphasis added]

This was expanded on in a 2012 paper, ‘Hidden State Models for Noncontact Measurements of the Carotid Pulse using a Laser Doppler Vibrometer.’ A 2016 paper, ‘Cardiorespiratory interactions: Noncontact Assessment Using Laser Doppler Vibrometer,’ noted that LDV worked without skin contact, required no skin preparation, and could be used in harsh environments.

Harsh environments like the battlefield.

Dr. Berger was more forthcoming after I sent him the abstracts. While noting he didn’t have time to do a full review of the literature, he wrote, “This seems like a credible explanation of how it works and what it does.”

I3 refused to discuss Jetson, but I3’s website does offer this description of a “Standoff Biometric Service”:

I3 designed, developed and demonstrated [sic] man-portable system for heart biometrics using a standoff laser vibrometer to measure pulse response at distances up to 200m. This system enables the operator to covertly collect heart biometrics from multiple body locations, including some that might be covered by clothing. The matching algorithm uses unique aspects of the heart pulse shape to create biometric signatures that cannot be disguised by the subject. [emphasis added]

There is that word again: unique. It was first used in the 2009 academic paper describing what might become Jetson, and now I3 uses it to advertise Jetson tech as a service. What does a unique identifier mean? It means far better than fingerprints. It means that when Jetson gets a clean heartprint from a target (clothes, smoke, rain, or movement could all make that harder), it will be as good as or better than a DNA fingerprint. And no messy lab work is necessary.

Jetson is a “win” for the US military and intelligence community, and both fortunately operate under federal oversight.

But there is no better advertisement for a new surveillance tech than that the “Tier One” operators of US Special Operations Command have it. What police department or intelligence agency in the world wouldn’t want the new tech? And what biometric company wouldn’t want to sell it to them? (Note: much of I3’s other business comes from selling face recognition services.)

We should probably think a dozen times before any organization not subject to strict federal oversight, e.g., local law enforcement, gets access to an identification technology as powerful and unaccountable as Jetson.

Where did Jetson go?

Steward Remaly told me twice that he’d transferred responsibility for the tech to the Army Night Vision Lab when CTTSO concluded development. Given that he is an engineer and a former Army colonel, it is unlikely he mixed that up.

When I reached out to the Army Night Vision Lab to get Jetson’s status, Richard Levine from Army Public Affairs advised me,

I checked with the U.S. Army Combat Capabilities Development Command, and the Command, Control, Communications, Computers, Cyber, Intelligence, Surveillance and Reconnaissance Center, which runs the Night Vision and Electronic Sensors Directorate—which I think is what you meant when you wrote the “Army Night Vision lab.”

No one has heard of this program.

I was subsequently told, unofficially, it might have gone to the Air Force, then that the Army did indeed have Jetson, somewhere.

Then, per DOD spokesman Mike Howard,

The Army was asked to conduct a standard technical assessment when we sent the research to them in 2019. However, because this is proprietary information, it would be neither appropriate nor prudent to publicly discuss any aspect of the assessment.

But it could also be that the DOD opted not to discuss Jetson further when they saw my follow-up questions to the Remaly interview. They included, “To whom will Jetson be licensed? Will it be shared with allies? What policy work has DOD done on ‘rules of engagement’ for Jetson? Will it be made available to domestic law enforcement?”

My questions as to possible restrictions on who might get to use it, or how it gets used, were ignored. But I was assured Jetson’s intellectual property would be protected.

According to a DOD spokesman,

The United States welcomes fair and open market competition. We stand against policies or actions that undermine international norms. This includes theft of commercial intellectual property, forced joint venture and licensing requirements, and state-funded acquisitions to transfer sensitive technology.

So international norms will be followed, for an ID technology that has never before existed. Ergo, any norms of use will arise from however the DOD, or anyone the DOD authorizes to buy it, decides to use it.

Who has Jetson and who else is likely to get it?

My best guess as to who has custody of the Jetson program is the Army’s Defense Forensics and Biometrics Agency (DFBA), whose motto is “Deny the Enemy Anonymity.” The guess rests on a few points. Jetson is a new biometric device for IDing enemies. I3 has three different contracts with the DFBA worth over $76 million. DFBA lists counterinsurgency as part of its mission, explaining on its website, “Terrorists, foreign fighters, and insurgents utilize anonymity to shield themselves from US Forces.” One of I3’s contracts includes support to the DFBA’s Biometrics Operations Division (a division not mentioned on the DFBA’s website).

What we don’t know and can’t guess is who will be allowed, or has already been allowed, to buy Jetson as a device or service. We know per CTTSO’s 2018 Review Book that the Special Operations Command and the Intelligence Community will get access to it first. But both of those groups have a network of local and international tech and data sharing arrangements. Additionally, the 2018 Review Book notes, “CTTSO directly manages bilateral agreements with five partner countries: Australia, Canada, Israel, Singapore, and the United Kingdom … In addition to CTTSO’s bilateral partners, CTTSO cooperates with other countries when appropriate.”

Also, the Intelligence Community is not just the 17 US intelligence agencies. It potentially includes terrorism-info sharing arrangements, like the “Fourteen Eyes” partnership, which includes the US, UK, Canada, Australia, New Zealand, Germany, Spain, Sweden, Belgium, Italy, Denmark, Norway, France, and the Netherlands. We can’t know if some, all, or none of the various partnered law enforcement and intelligence agencies will get access to Jetson.

Or if your local cops are already buying Jetson or testing it right now.

More than halfway done

On the battlefield, Jetson could be employed with a streamlined process. If you are a US soldier, you don’t need to know the name or face of your enemy, just that he’s participated in hostile activity. Once Jetson has remotely surveyed an armed enemy combatant and captured their “heartprint,” the next time Jetson encounters that enemy, the soldier will know from the heartprint match the person is an enemy combatant, even if the combatant is at that moment unarmed and hiding among civilians.

In the civilian world, Jetson will have to be paired with another ID method that already has a name attached to it. That could be fingerprints, DNA, gait analysis, or an iris scan, but far and away the most likely candidate is a face recognition scan, as face photos comprise the largest biometric databases by far (the MIT article noted Jetson was likely to be paired with face recognition).

Assume you are James Doe, standing in a crowd at a demonstration (or sitting on a park bench, or at a stoplight in your car, or standing in line at a DMV). Jetson’s optical tracker is aimed at you. That switches on a beam director, which gimbals Jetson’s laser into alignment and fires the invisible infrared beam at you from a box about 8 by 8 inches (20 cm) square. You won’t see the box, let alone the invisible laser, because it is hundreds of feet away from you. Interferometers sensing the reflected IR light quantify the individual-specific movements of your skin produced by the combination of your carotid pulse and breathing.

Now your unique “heartprint” is in a Jetson database.

To usefully identify and track you, that heartprint will have to be attached to a name. A camera linked to a face recognition database will search for a picture of James Doe. If it finds one, or one “close enough” according to the face recognition algorithm, the heartprint and face match will be a permanently linked record. Thereafter, no further use of camera or face recognition software is necessary; you will be considered positively IDed any time another Jetson laser is aimed your way and matches the heartprint on file.
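The “link once, match thereafter” logic described above can be sketched in a few lines of Python. This is a hypothetical illustration, not Jetson’s or I3’s design, neither of which has been disclosed. Heartprints are modeled as short tuples of numbers and matched with a simple nearest-neighbor distance check (the 2009 Washington University paper used hidden Markov models instead), and the names HeartprintRegistry, enroll, identify, and MATCH_THRESHOLD are invented for the example.

```python
from dataclasses import dataclass, field
from math import dist
from typing import Optional

# HYPOTHETICAL sketch of the enrollment-then-match flow described above.
# Heartprints are modeled as plain numeric feature vectors compared by
# Euclidean distance; Jetson's actual matching method has not been disclosed.

Heartprint = tuple[float, ...]
MATCH_THRESHOLD = 0.5  # assumed cutoff: closer than this counts as the same person


@dataclass
class HeartprintRegistry:
    # name (supplied once by face recognition) -> stored heartprint
    records: dict[str, Heartprint] = field(default_factory=dict)

    def enroll(self, heartprint: Heartprint, face_match: Optional[str]) -> bool:
        """Link a new heartprint to whatever name face recognition returned.

        With no face match there is no name to attach, so nothing is stored.
        """
        if face_match is None:
            return False
        self.records[face_match] = heartprint
        return True

    def identify(self, heartprint: Heartprint) -> Optional[str]:
        """Later scans need no camera: return the nearest enrolled name,
        or None if nothing is within the threshold."""
        best_name: Optional[str] = None
        best_distance = float("inf")
        for name, stored in self.records.items():
            d = dist(heartprint, stored)
            if d < best_distance:
                best_name, best_distance = name, d
        return best_name if best_distance <= MATCH_THRESHOLD else None


if __name__ == "__main__":
    registry = HeartprintRegistry()
    # First encounter: laser scan plus a face recognition hit on "James Doe".
    registry.enroll((1.0, 2.0, 3.0), face_match="James Doe")
    # Any later scan: the heartprint alone is enough.
    print(registry.identify((1.1, 2.0, 2.9)))  # James Doe
    print(registry.identify((5.0, 5.0, 5.0)))  # None
```

The sketch makes one consequence plain: face recognition is consulted only once, at enrollment. If that match was merely “close enough” and wrong, every later laser scan will return the wrong name, with no camera involved to catch the error.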

To work, Jetson will need to build a database of heartprints and linked face IDs. But it gets more than halfway there by linking Jetson to photos accessed by face recognition systems. Counting only Clearview AI or Rekognition, Jetson database developers would have instant access to billions of photos of potential suspects (aka ordinary citizens).

If the DFBA has custody of Jetson, it is reasonable to assume it will use its existing storehouse of suspect IDs as a base on which to build out a Jetson heartprint database. The Army sub-agency states that the records it currently holds have so far generated in excess of 300,000 watchlist hits.

We don’t know if the DFBA or I3 has paired with any federal, state, or local police departments, to gather “heartprints.” We do know per the “Technology Transition” section in CTTSO’s Review Book, CTTSO builds production partnerships and independent operational testing into every project. Many of CTTSO’s projects involve partnerships with, or are developed specifically for, local law enforcement. A partnership with a sheriff’s department in Oregon is how Amazon Rekognition’s photo databases were started.

Does a heartprint database already populated by thousands or millions of heartprints, busily being paired to face recognition matches, exist? We don’t know, and I3 and the DOD won’t discuss testing or sales of Jetson.

As Jetson’s beam is invisible, any current or future James Doe won’t know to protest the violation of his Fourth Amendment right to be “secure in his person” against unreasonable search and seizure without probable cause. Violators can decide it’s more convenient to keep in the dark the citizens who might make such complaints, just as the Washington County Sheriff’s Office in Oregon and the NYPD did for years with face recognition.

The privacy experts weigh in

Jeramie Scott is Senior Counsel at the Electronic Privacy Information Center (EPIC), where he runs the Surveillance Oversight Project. In EPIC’s February 25, 2021, response letter to the NYPD, “EPIC comments to the NYPD,” he found that the NYPD failed to meet reporting and transparency requirements across a range of measures (exactly what Clare Garvie found in her earlier investigations of the NYPD’s face recognition use). EPIC’s comments can be summed up by one of the headlines: “New Yorkers are Subject to Constant Invasive Dangerous Mass Surveillance.”

When I spoke to Scott about Jetson, my hope that Jetson might not be shared with police simply because it started as a DOD program quickly died.

Scott told me: “I’m not aware of any law preventing transfer of military technology to law enforcement.”

Scott didn’t believe domestic use of Jetson would be regulated with an eye towards maintaining civil rights.

“What I generally see in the use of surveillance technology is the law is interpreted as broadly as they think they can, in order to justify the use of the technology or argue that it is legal to use, is constitutional, and doesn’t require a warrant,” he said. “The way folks want to use it leads to interpretations broader and more lenient than other folks that might look at it.”

Scott also wondered what kind of testing Jetson might undergo to substantiate the claims made about it.

“They’d have to test this on a pretty broad scale,” he said. “Face recognition is used in part because so many pictures are available, pictures taken without consent. You’d need a lot of data to improve this (Jetson) tech. Whose data are you using? Are you testing on volunteers?”

Speaking of testing data and volunteers, we also don’t know how much info Jetson harvests about any individual’s heart. Will it reveal a murmur? An occluded left ventricle? You’d have to be someone like cardiologist/engineer Dr. Berger, and have access to Jetson’s data, to answer that question.

Laser vibrometry is being tested in medical labs on cardiac conditions. Information on those conditions would be tightly restricted if obtained by tests from your medical provider, but think of the “broad and lenient” legal interpretations for surveillance tech EPIC’s Scott has seen. Now, imagine a police spokesman adept at verbal sleight-of-hand saying, “Jetson doesn’t collect medical data, because we don’t collect heartprints for medical purposes.”

Sharon Polsky is president of the Privacy and Access Council of Canada. She has decades of experience as a privacy advisor to corporations, governments, and legislatures. She co-authored the competency standards for data protection professionals in Canada. Polsky thinks Jetson can leverage the deliberately lax oversight of all manner of surveillance technology:

With all of this surveillance tech, privacy is a fundamental misnomer. The concept of privacy has never been given anything more than a grudging shrug. It has always been characterized as impediment to innovation. No politician will require organizations to do better or limit their use of surveillance tech. If they had, the multibillion data broker industry wouldn’t exist. The government couldn’t profitably tax that, and surveillance industries employ people. There are huge financial incentives to encourage surveillance, and governments encourage that messaging, governments influenced by very strong lobby groups.

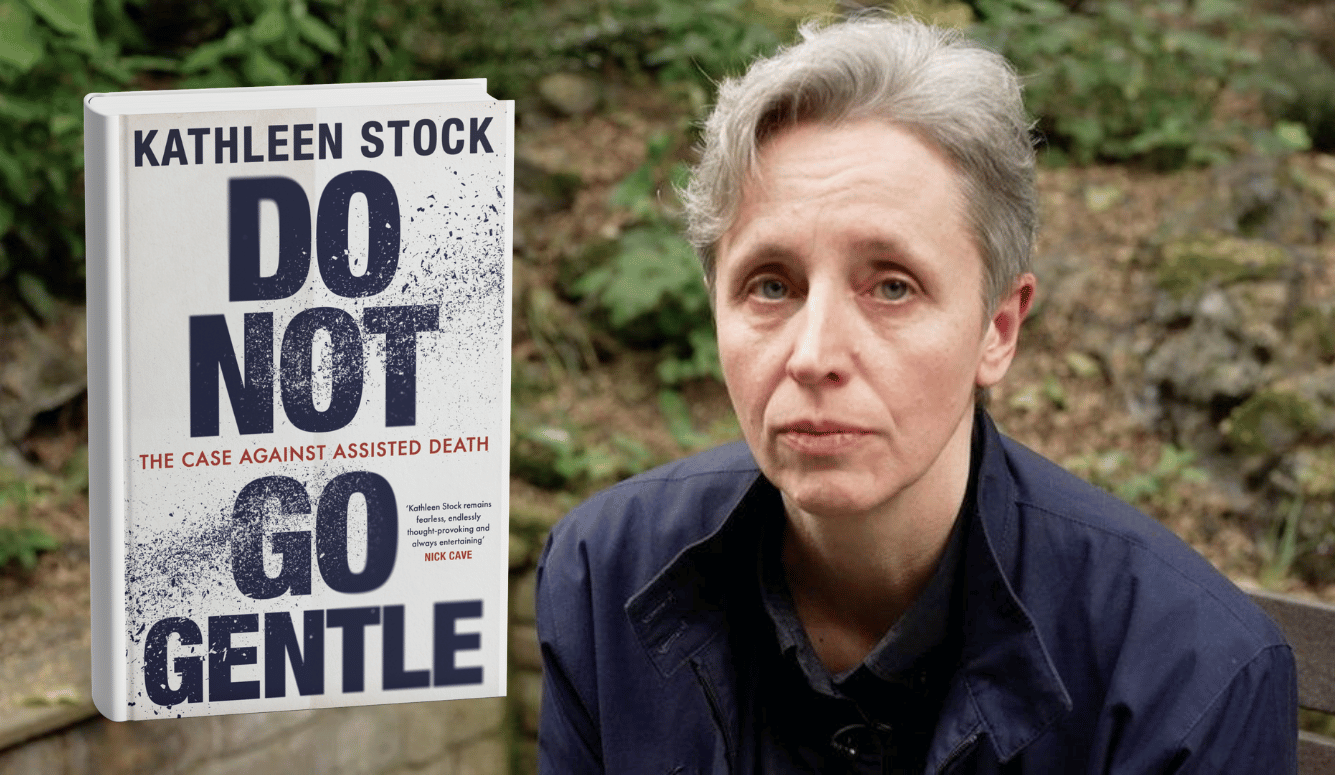

Clare Garvie of the Georgetown Center on Privacy and Technology gave me this warning about face recognition tech when we last spoke.

Face recognition gives law enforcement this unique authority, this unique power, that does pose risks to our constitutional rights, and this needs to be very closely scrutinized now. It probably should have been scrutinized years ago when law enforcement first started implementing face recognition. It’s high time that legislators take a close look at how this technology is being used, and rein it in. [emphasis added]

Where we are now with Jetson is where we were 20 years ago with face recognition tech. So far, years of warnings about face recognition have been largely ignored, allowing Clearview AI to metastasize into a blight on privacy.

There are excellent reasons we don’t put tools of mass surveillance or war in the hands of civil authorities. It’s not about supporting the police. It’s about what kind of society we want to live in.

Journalist Jon Fasman writes for the Economist. He also wrote the 2021 book about surveillance tech used by American police, We See It All: Liberty and Justice in an Age of Perpetual Surveillance.

Fasman perfectly encapsulated the challenge surveillance technologies pose to civil society:

I want to make a point about efficacy as justification. There are a whole lot of things that would help police solve more crimes that are incompatible with living in a free society. Suspension of habeas corpus would probably help police solve more crimes. Keeping everyone under observation all the time would help police solve more crimes. Allowing detention without trial might help the police solve more crimes.

All of these things are incompatible with living in a free, open, liberal democracy.

*(now Irregular Warfare Technical Support Directorate or IWTSD)

Art Keller has covered Iranian issues in and out of government for 20 years. His novel about the CIA and Iran is Hollow Strength. You can follow him on Twitter at @ArtKeller.