Microbes on Venus May Herald Human Extinction (Though Not in the Way You Think)

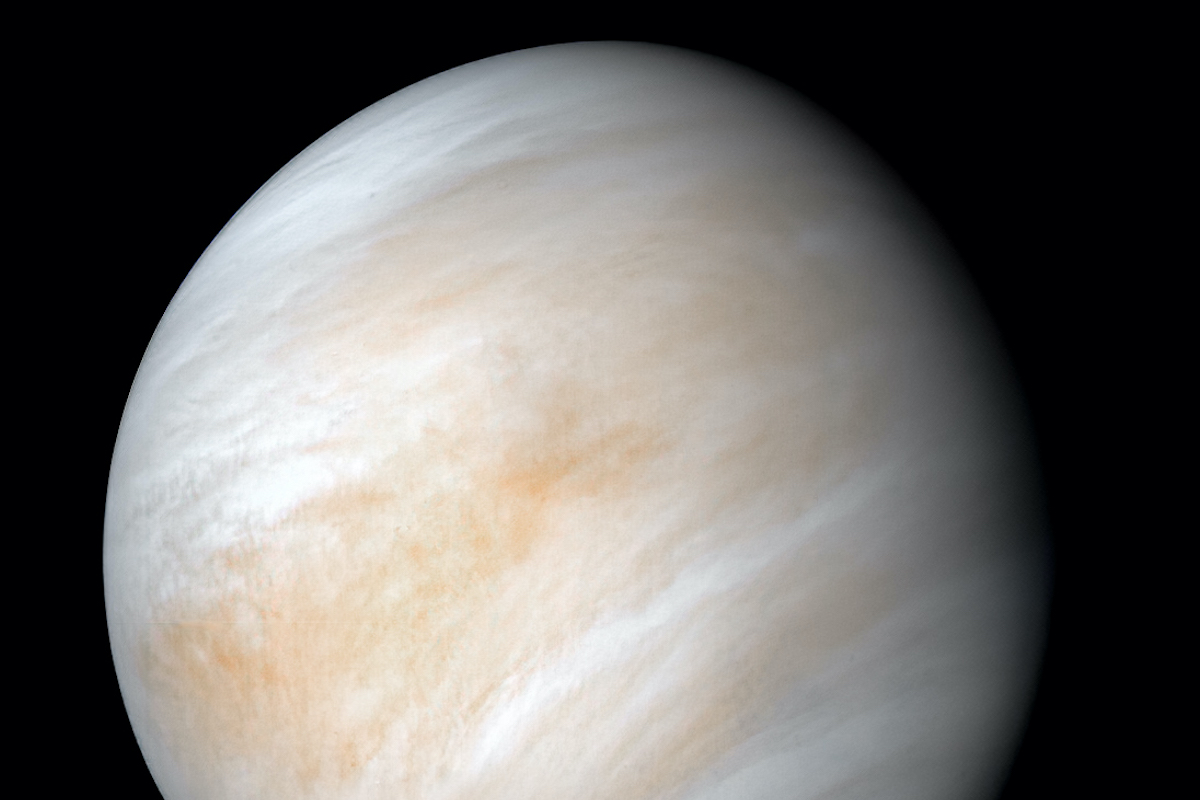

This week’s report that astronomers have discovered possible evidence of life on Venus is good news for science journalists. But it may be bad news for the future of humanity.

One theory on why we haven’t encountered advanced civilizations from other star systems is that the conditions that lead to life are extremely rare. But if those conditions aren’t rare—as illustrated by, say, the appearance of two life-bearing planets in the same solar system—then another reason is more likely: We haven’t seen aliens because advanced civilizations tend to self-destruct before they have time to colonize the stars.

Much of this discussion is rooted in the so-called Fermi paradox, named for the Italian-American physicist Enrico Fermi (1901–1954), who famously wondered why humanity seems alone in the vastness of the universe. Our galaxy alone contains an estimated 20 billion Earth-like planets. Many of these worlds are likely over a billion years older than Earth. So you would think there’s been plenty of time for an alien civilization to reach us, even if it expanded at only a tiny fraction of light speed. Why hasn’t at least one planet in our neighborhood birthed an interstellar civilization that we can detect?

Consider a terrestrial analogy: Evolution on Earth has pushed life to every sustainable niche on the planet, from deep ocean crevices to mountain peaks. Why hasn’t the same happened in our galaxy? If advanced life is common, we should expect that alien races would want to colonize as much of the universe as they could, just as humans have occupied every habitable region of Earth.

Within a thousand years or so, humanity will likely start sending spaceships to colonize other star systems, so long as our technology evolves sufficiently. Those new worlds will, in turn, eventually send ships to still more planets. Within a million years, which is practically nothing on the timescale of galactic existence, we should reach every star system in our galaxy and then start to explore the Laniakea Supercluster, in which the Milky Way resides. Once we have gone far enough, we will have spread beyond the reach of any one local disaster, and so should survive until the end of the universe. Why haven’t aliens already done this?
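The timescale claim above can be checked with back-of-the-envelope arithmetic. The sketch below is only illustrative: the galaxy diameter of roughly 100,000 light-years is a commonly quoted round figure, while the hop length and the pause between hops are hypothetical parameters chosen for the example, not numbers from this article.

```python
# Rough estimate of how long an expanding civilization needs to span the galaxy.
# All constants are illustrative assumptions.

GALAXY_DIAMETER_LY = 100_000  # assumed round figure for the Milky Way's disk


def crossing_time_years(speed_fraction_of_c, distance_ly=GALAXY_DIAMETER_LY):
    """Years to cover distance_ly at a constant fraction of light speed."""
    if speed_fraction_of_c <= 0:
        raise ValueError("speed must be a positive fraction of light speed")
    # A light-year is the distance light covers in one year, so
    # years = light-years / (fraction of c).
    return distance_ly / speed_fraction_of_c


def expansion_time_years(speed_fraction_of_c, hop_ly, pause_years,
                         distance_ly=GALAXY_DIAMETER_LY):
    """Years to span distance_ly in hops, pausing after each hop to build new ships."""
    if hop_ly <= 0:
        raise ValueError("hop length must be positive")
    hops = distance_ly / hop_ly
    years_per_hop = crossing_time_years(speed_fraction_of_c, hop_ly) + pause_years
    return hops * years_per_hop


if __name__ == "__main__":
    for fraction in (0.1, 0.01, 0.001):
        print(f"{fraction:g} c: {crossing_time_years(fraction):,.0f} years nonstop")
    # Hypothetical settling pattern: 10-light-year hops, 500-year pauses.
    print(f"{expansion_time_years(0.01, hop_ly=10, pause_years=500):,.0f} years with pauses")
```

Under these assumptions, a million-year crossing corresponds to about a tenth of light speed, and slower or stop-and-go expansion stretches the figure to tens or hundreds of millions of years. That is still short next to a head start of a billion years.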

Hopefully, we have not seen aliens because they do not exist, and the universe waits empty for mankind to fill up its trillions upon trillions of unoccupied planets. But this week’s news from Venus would seem to cast doubt on that. It turns out the acidic atmosphere of Venus bears traces of a rare molecule called phosphine, which, on Earth at least, is linked to microbes that live in oxygen-free environments. No, researchers aren’t claiming that they’ve detected life per se. And even if such microbes did exist on Venus, there are all sorts of reasons why Venusian evolution might have become arrested well before the development of intelligent life forms. I’m certainly not arguing that some civilization grew and self-exterminated on Venus. But if it does turn out that Venus is home to microbes, it would help show that abiogenesis (the emergence of living organisms from non-living matter, by way of building blocks such as amino acids and proteins) isn’t unique to Earth.

Economist Robin Hanson has used the term “the Great Filter” to describe the force that stops star systems from giving birth to life that colonizes other systems. The Great Filter is whatever causes advanced expansionist civilizations to be rare enough that we have never seen evidence of one. The Great Filter could be the difficulty of life developing (a theory that, as noted above, seems weaker now than it was last week), or it could more closely resemble the plotline of dystopian science fiction. The critical question for mankind is whether we already passed through the Great Filter many millions of years ago, or whether we still need to fear it.

Imagine learning that thousands of advanced civilizations had arisen in our galaxy eons ago. Many of these cultures surely had their share of Elon Musks—beings who wished to colonize other worlds. And they contained groups such as the Pilgrims, who had reasons for abandoning their homes and settling far-off places. Pretend that we knew that each and every one of these numerous civilizations succumbed to internal conflict, destroying themselves with nuclear weapons (or who knows what) before they could spread to another star system. Evolution, in this hypothetical situation, would be shown to have unwittingly prevented space colonization by pairing intelligence with a tendency toward self-destruction.

Hopefully, if we do find life on Venus, it will turn out to have the same origin as life on Earth. Perhaps simple life arose only once in our solar system (and perhaps even only once in the visible universe) on either Earth or Venus, and then spread to the other planet by asteroid. Or maybe life got its start somewhere else, and somehow got to our solar system by comet. Or possibly one of the spacecraft we sent to Venus accidentally infected our sister planet with microbes. In all of these scenarios, life on both planets would have a common ancestor. In this case, we could still reasonably hope that this was a one-off, or near-one-off, event. If Venus does contain microbes, it will be interesting to examine their DNA (or whatever they have instead of DNA) to help test all these theories.

Besides civilizational self-destruction through war, or catastrophes such as asteroid impacts, there is another way that advanced societies could perish wholesale: a trap hidden in the laws of physics. The men who designed the first atomic bomb had real fears that they might ignite the atmosphere and destroy humanity. They did a bit of research and satisfied themselves that this wouldn’t happen. But what if they were wrong? (American physicists did significantly underestimate the explosive yield of a 1954 hydrogen bomb test, because they didn’t understand how the presence of lithium-7 would affect the reaction.) What if detonating an atomic bomb really could destroy a planet’s atmosphere, but through long and complex chemical and thermodynamic chain reactions that we wouldn’t understand until long after the first one had been detonated? What if splitting the atom, or some analogous future scientific breakthrough of which we can’t currently conceive, is the Great Filter? If that were the case, then every civilization would eventually be extinguished in the same way (though for this theory to work, we would have to presuppose that the fatal technological step is inevitably taken before the development of the technology that allows for the colonization of other planets).

Many of the Great Filters that have been proposed over time are more banal and, to my mind, unrealistic. For example, the Great Filter is probably not the economic cost of colonizing other worlds. Once a species masters self-replicating machines, it seems likely it could take over other star systems at a tiny cost relative to its (by then) enormous economic output.

Bacteria are self-replicating. And if they are situated within an abundant food source, they expand their numbers at an exponential rate. This is why, as mentioned above, it’s possible that a small number of bacteria from an Earth ship really could have already had a big impact on Venus, assuming they could find nourishment in the atmosphere. By analogy, humans could one day create intelligent robots capable of space travel. They could use materials in other star systems to make billions of copies of themselves, much like bacteria. These new robots could then set about terraforming the planets to make them suitable for the robots’ human creators (or, more realistically, their descendants). The cost, to a sufficiently advanced species, of terraforming an entire galactic supercluster might become trivial.
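The force of the bacteria analogy comes from how few doublings exponential growth needs. The following sketch counts them; the function name and the doubling times in the usage lines are hypothetical choices for illustration, and the model assumes every copy keeps replicating with unlimited raw material, which real bacteria or machines would not enjoy for long.

```python
# Count the doublings a self-replicating population needs to reach a target size.
# Idealized model: every copy replicates each generation and resources never run out.


def doublings_needed(target_copies, start_copies=1):
    """Smallest number of doublings taking start_copies to at least target_copies."""
    if start_copies < 1 or target_copies < 1:
        raise ValueError("population sizes must be at least 1")
    copies = start_copies
    doublings = 0
    while copies < target_copies:
        copies *= 2  # each generation, every copy makes one more copy
        doublings += 1
    return doublings


def time_to_reach(target_copies, doubling_time, start_copies=1):
    """Total time to reach target_copies, in the same units as doubling_time."""
    return doublings_needed(target_copies, start_copies) * doubling_time


if __name__ == "__main__":
    billion = 1_000_000_000
    print(doublings_needed(billion), "doublings to pass one billion copies")
    # Hypothetical doubling times: 20 minutes for a microbe, 1 year for a robot.
    print(time_to_reach(billion, doubling_time=20) / 60, "hours at 20 minutes per doubling")
    print(time_to_reach(billion, doubling_time=1), "years at 1 year per doubling")
```

Because a single seed passes a billion copies after only a few dozen doublings, the total time is dominated by how long one replication takes, not by the size of the target.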

Another banal explanation for the Great Filter is a lack of necessity: A civilization that is advanced enough to colonize other planets would be advanced enough to fix problems on its own planet. But even the most advanced creature can’t change the laws of nature. If our understanding of physics is correct, our universe has a limited amount of what is called free energy, the stuff you need to do anything. One would think that an advanced civilization would want to expand as fast as it could, if only to grab and store as much free energy as possible. Collisions of black holes waste enormous amounts of it. Basic free-energy economics, writ large, would dictate that advanced alien races would be busily restructuring the universe so as to prevent this wastage. Think of it as the ultimate act of environmental conservation.

If economics didn’t drive aliens to expand, military necessity should. Earth could easily have been taken over by a Genghis Khan-like leader who believed it his divine duty to conquer the universe. Benevolent alien races would want to control the resources of as many star systems as possible to protect themselves against such ruthless aliens. A failure to expand serves to forfeit militarily valuable real estate to enemies.

What can we do to ensure life, of some variety, survives in the universe? One problem is that we don’t know what technological step might be our last—the one that unleashes the Armageddon that, in retrospect, turns out to be the Great Filter. But what if others could learn from our example before they, too, pass through the filter?

Imagine long-lived satellites that constantly update with the latest news from Earth, recorded in a way that might conceivably allow an alien civilization to understand our meaning. If the satellites don’t hear from us for a year, they will start transmitting warnings to the stars, indicating that their creators have been wiped out. As well, they will broadcast the last news from Earth before we went dark—such as, perhaps, “Physicists excited about a new high-energy experiment…”

In theory, such transmissions might help nearby aliens survive the Great Filter. At the very least, this gesture would help convince any aliens we encounter on our (hopefully successful) colonizing missions that we’re a species they can trust.

It isn’t true, of course. But it’s worth a shot. They don’t know us yet.