Money Laundering for the Soul — The Unbearable Ease of Moral Self-Exoneration

Perhaps it was the recent steady trickle of headlines reporting banned refugees, gunned-down immigrants, and desecrated graveyards that got me thinking about the human ability to do unto others what we would not at all want others to do unto us; the facility with which we come to hurt others in ways that a short time earlier would have seemed inconceivable, and will no doubt seem so again in the not-too-distant future; our ability to suspend our moral principles and ignore, or worse yet inflict, cruel conduct that clearly violates the values we claim to espouse.

We do this with surprising ease, often basing sustained bouts of deliberate nastiness on nebulous reasoning. To quote the writer Loren Eiseley, humans “kill for shadowy ideas more ferociously than other creatures kill for food.” And we do it with relish. As the British philosopher Jonathan Glover has noted, “Our species’ fascination and preoccupation with inflicting brutality on itself, the sheer innovative effort dedicated to the task, and the visceral thrill of it are akin in their intensity to the human preoccupation with sex.”

One resonant attempt to describe the psychological mechanisms that allow people to do bad things without seeing themselves as bad people can be found in the writings of the psychologist Albert Bandura. The name, while far from household, might nonetheless ring a bell. Bandura is the researcher behind the famous Bobo Doll experiments from the early 1960s, in which young children, having witnessed a model aggress against an inflatable toy doll, proceeded to imitate the aggressive interaction, and elaborate creatively on it, when placed a bit later in the presence of the doll.

Bandura’s interests in human aggression — the ease and comfort with which we are willing to perpetrate harm — have extended beyond the playroom: he has since written thoughtfully about our dangerous propensity to divorce our actions from our own moral bearings.

According to Bandura, moral conduct requires constant, active self-regulation. Alas, “there are many psychosocial maneuvers by which moral self-sanctions are selectively disengaged from inhumane conduct.” In other words, our internal self-structure, much like our external social structure, requires active regulatory efforts to keep mischief, malfeasance, and malice in check. Yet just as social regulatory agencies can be corrupted to bad ends, so can our own internal regulators be turned to provide psychological cover for our transgressions.

This, of course, is an inherent weakness in any self-governing system. Here’s Nobel-Prize-winning poet Bob Dylan on the topic:

Preacher was talking there’s a sermon he gave

He said every man’s conscience is vile and depraved

You cannot depend on it to be your guide

When it’s you who must keep it satisfied

The late physicist Richard Feynman has written that, “the first principle is that you must not fool yourself — and you are the easiest person to fool.” This insight applies to human affairs far and wide, but perhaps nowhere more urgently than in the realm of moral (mis)conduct. Bandura’s work has elucidated eight psychological self-exoneration mechanisms that help us fool ourselves into inhumanity by neutralizing our own moral self-regulation:

- The first mechanism is moral justification. “People do not ordinarily engage in harmful conduct until they have justified to themselves the morality of their actions,” Bandura argued. “In this process of moral justification, detrimental conduct is made personally and socially acceptable by portraying it as serving socially worthy or moral purposes.”

In other words, to get a good man to do a bad thing, you need not make the good man bad, but rather make the bad thing appear good. Getting soldiers to kill is not achieved by reshaping their personalities toward evil, but by reframing killing as good, noble, and necessary for protecting cherished values. Bandura quotes Voltaire, “Those who can make you believe absurdities can make you commit atrocities.”

- Euphemistic labeling. Bandura writes: “Activities can take on very different appearances depending on what they are called.” Language affects how we feel and think about the things it describes, largely because language is two-dimensional: Words both denote and connote meaning. Different phrases that denote the same thing may connote different things, thus resonating differently in the minds of those who utter and hear them. “Killing the dog” and “putting the dog to sleep” denote the same action, but offer up vastly different connotations.

Thus, sanitized language may mask the true nature of an event in the same way that a beautifully landscaped graveyard makes our encounter with the idea of death and decay more palatable, less aversive. Or, per Bandura, “bombing missions are described as ‘servicing the target,’ in the likeness of a public utility. The attacks become ‘clean, surgical strikes,’ arousing imagery of curative activities. The civilians the bombs kill are linguistically converted to ‘collateral damage.’”

Sanitized language is also, of course, the dark side of extreme political correctness, which, in the name of sparing hurt, may also obscure the truth. “Alternative fact” is softer than “lie.” But using the softer word can no more erase the fact and harsh real-world implications of lying than talk of “alternative knowledge” can erase the harsh fact and real-world impact of ignorance.

- Advantageous comparison. This refers to the fact that “how behavior is viewed is colored by what it is compared against.” I may have stolen your watch, goes the logic, but I didn’t kill you, so I’m not that bad, and you should stop griping. In fact, you should thank me. Bandura points out how “the massive destruction in Vietnam was minimized by portraying the American military intervention as saving the populace from Communist enslavement.” True, our military actions have resulted in massive civilian casualties, but they were not intended to kill civilians, so there.

- Displacement of responsibility. “Self-exemption from gross inhumanities by displacement of responsibility is most gruesomely revealed in socially sanctioned mass executions,” writes Bandura. “Nazi prison commandants and their staffs divested themselves of personal responsibility for their unprecedented inhumanities… They claimed they were simply carrying out orders.” In Milgram’s famous obedience experiment, participant ‘teachers’ placed the responsibility for administering punitive electric shocks to innocent ‘learners’ on the experimenter sitting nearby, even though the ‘teachers’ were the ones pressing the shock machine’s levers.

- Diffusion of responsibility happens when “a sense of personal agency gets obscured by diffusing personal accountability.” This can be achieved in several ways.

First, division of labor may diffuse accountability. If each person is in charge of only a small part of the bomb, then none of them feels they built the bomb.

Group decision-making is another way to diffuse responsibility. When the group decides together, the individuals who make up the group don’t feel individually responsible for the decision.

Finally, collective action may diffuse one’s sense of individual responsibility. If a hundred people loot the store, no individual is fully responsible for the cumulative destruction brought about by the looting. After all, I only took one TV. I’m not responsible for the devastating loss of the store’s total inventory.

- Disregard of consequences. Moral control may be weakened when the effects of immoral actions are disregarded or distorted. According to Bandura, this mechanism may operate on two levels. The ‘weak version’ involves minimizing the harm. It wasn’t such a big deal. If minimization doesn’t work, one may turn to the ‘strong version’ of this strategy: discrediting the evidence of harm altogether. “As long as the harmful results of one’s conduct are ignored, minimized, distorted or disbelieved, there is little reason for self-censure to be activated.” It is easier psychologically to fire a missile that kills a thousand people you can’t see or hear than to stab one person to death with a knife, because it’s easier to disregard those consequences that are not experienced up close.

- Dehumanization. A political science professor of mine once noted how one sure sign that a politician is preparing to run for president is that their hair begins to look great on TV. In a different but similar way, one sure sign that we are preparing to do harm to another group is that we begin to paint them as less than fully human. Not only are they ‘not us,’ but they are also ‘not like us.’ Not only are they ‘not like us,’ but they are also ‘less than us.’

Writes Bandura: “Self-censure for cruel conduct can be disengaged by stripping people of human qualities. Once dehumanized, they are no longer viewed as persons with feelings, hopes and concerns but as subhuman objects… They are portrayed as mindless ‘savages,’ ‘gooks,’ and the other despicable wretches.” (Like, say, “rapists,” “murderers,” “bad hombres,” etc.)

- Attribution of blame. “Blaming one’s adversaries or circumstances is still another expedient that can serve self-exonerative purposes,” according to Bandura. We can dismiss, shun, or retaliate against a rape victim once we’ve convinced ourselves that she was asking for it, that she’d elicited the attack with her provocative clothes or intoxicated state; that she deserved it because of her penchant for promiscuity.

Bandura notes that victim-blaming abuse is more psychologically harmful than acknowledged cruelty. “Mistreatment that is not clothed in righteousness makes the perpetrator rather than the victim blameworthy. But when victims are convincingly blamed for their plight, they may eventually come to believe the degrading characterizations of themselves.” If you tell the poor that their plight is due to their laziness as opposed to societal conditions set to exploit them, then over time many of them will come to believe the lie, and behave in ways that reinforce it.

According to Bandura, decent people do not morph into agents of inhumanity overnight, but gradually. The process he’s described will sound as familiar to history buffs as it will to news junkies:

Disengagement practices will not instantly transform considerate persons into cruel ones. Rather, the change is achieved by gradual disengagement of self-censure. People may not even recognize the changes they are undergoing. Initially, they perform milder aggressive acts they can tolerate with some discomfort. After their self-reproof has been diminished through repeated enactments, the level of ruthlessness increases, until eventually acts originally regarded as abhorrent can be performed with little personal anguish or self-censure. Inhumane practices become thoughtlessly routinized.

Bandura has identified several conditions under which such shifts into immorality are more likely to happen.

First, ideology is more dangerous than whim in this context. “The massive threats to human welfare stem mainly from deliberate acts of principle rather than from unrestrained acts of impulse.”

Second, systems that lean autocratic are more likely to move toward immorality than those that lean democratic:

Monolithic sociopolitical systems that exercise tight control over institutional and communications systems can wield greater power of moral disengagement than pluralistic systems that represent diverse perspectives, interests and concerns. Political diversity and institutional protection of dissent allow challenges to suspect moral appeals.

Third, access to information, and public attitudes about information, play an important role in whether or not systemic social pressures toward immoral conduct are successful. “Limited public access to the media has been a major obstacle to reciprocal influence on detrimental social policies and practices. Healthy skepticism toward moral pretensions puts a further check on the misuse of morality for inhumane purposes.”

For Bandura, the remedy is found in “resolute engagement of the mechanisms of moral agency.” This requires us to resist social pressure to the contrary.

In the exercise of proactive morality people act in the name of humane principles when social circumstances dictate expedient, transgressive and detrimental conduct; they disavow use of valued social ends to justify destructive means; sacrifice their well-being for their convictions; take personal responsibility for the consequences of their actions; remain sensitive to the suffering of others; and see human commonalities rather than distance themselves from others or divest them of human qualities.

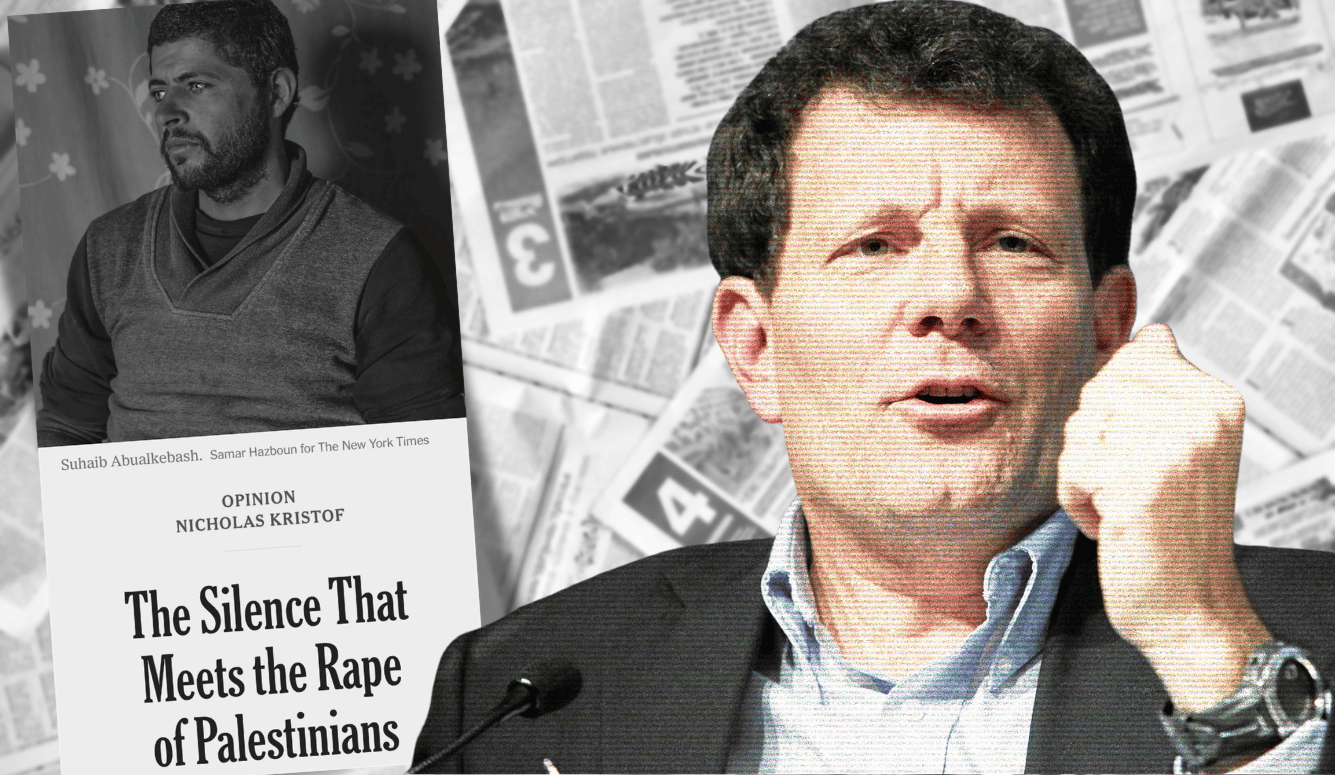

In particular, institutional practices that “insulate the higher echelons from accountability for the detrimental policies over which they preside,” discourses that “cloak inhumane activities in sanitizing language,” and corporate practices that have “injurious human effects” must be “monitored, subjected to negative sanctions, and widely publicized to enlist the public support needed to change them.”

Cogent observations, it seems to me. And dare I say, timely…