Creators and Destroyers of Worlds

Despite the dangers, we must seize the gifts bequeathed by world-altering technologies, since these amount to life in unprecedented abundance.

Progress inevitably creates Inescapable Existential Dangers (IEDs): technological developments that yield huge benefits alongside high extinction risks. Accepting long-term disaster as the cost of immediate success is an ancient Devil’s Bargain. While the Romans celebrated Carthage’s destruction, Scipio Africanus the Younger brooded over Rome’s fate, since war inevitably breaks the empires that it builds. Innumerable other societies have mortgaged themselves for prompt prosperity or power. What has changed is the size of the initial payout and of the final debt.

Nuclear War, the Inaugural IED

The first modern IED debuted by ending a global war that had already killed fifty million people and was poised to claim up to a million more. The destruction of Hiroshima and Nagasaki forced the surrender of a ferocious aggressor that habitually fought to the death to seize even the smallest victories. Thermonuclear weapons subsequently made the global community realise that great-power war had become suicidal, ending the recurring slaughters that had killed tens of millions of people since the Napoleonic Wars. Such a conflict could now kill billions of people, destroy trillions in wealth, and poison vast stretches of the Earth without yielding meaningful victory. The prospect of mutually assured destruction (MAD) made a critical contribution to the long peace after 1945.

This peace enabled decades of unprecedented prosperity: between 1950 and 2024, the global population grew by approximately six billion. The labours of these new arrivals boosted global gross domestic product by US$162 trillion (adjusted for inflation) over the same period, while international trade surged by approximately 4,500 percent. This growth also owed much to advances funded by wealth freed from war spending. From the late 1940s through the 2020s, computers, the internet, space travel, and an explosion of biological and medical discoveries created vastly more knowledge and wealth and saved or enabled billions of lives, while opening the universe to humanity. This was in addition to the many millions of lives spared from the aftershocks of great-power war, such as economic depressions, influenza pandemics, and famines. Paradoxically, humanity’s most devastating weapons turned out to be givers of life, alleviators of suffering, and creators of prosperity.

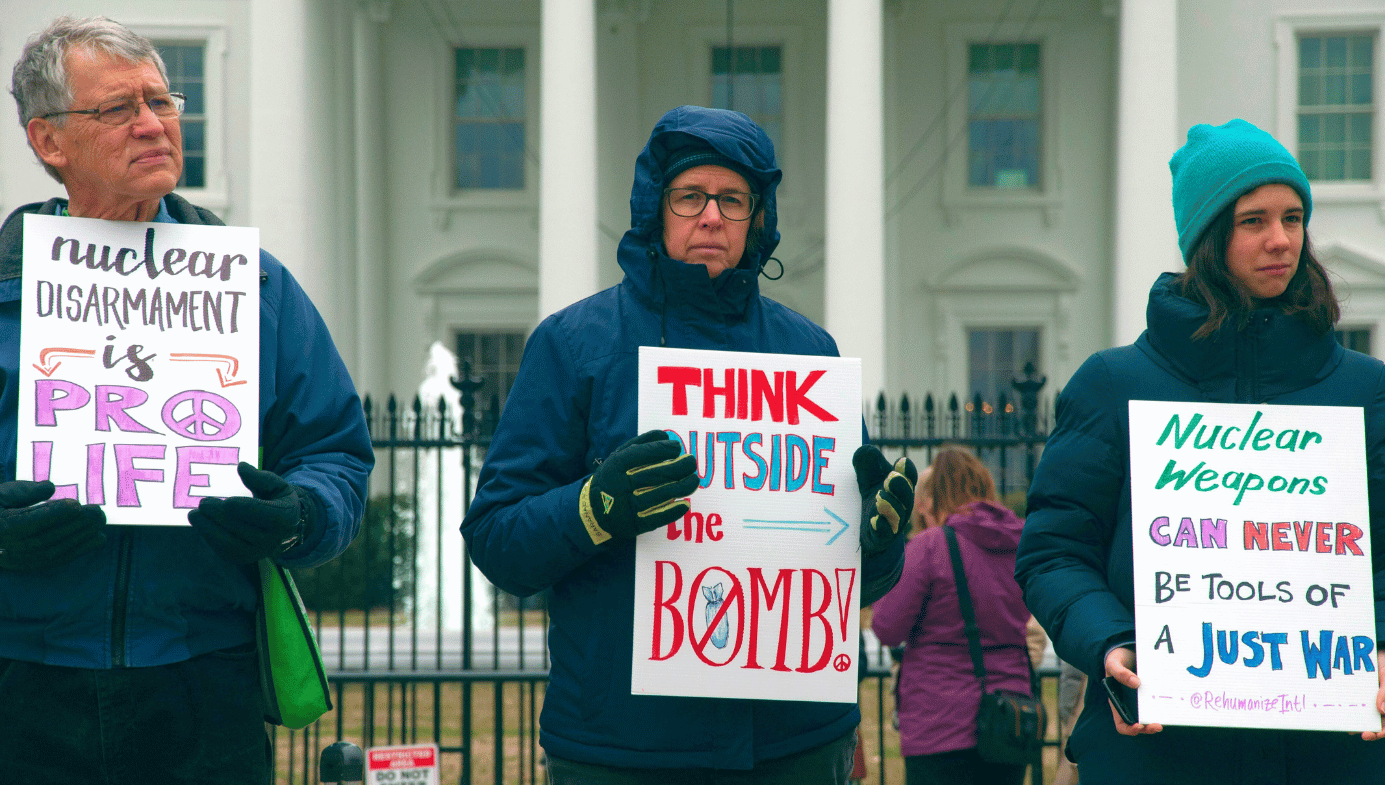

The nuclear peace has lasted so long that many people have forgotten its underlying risk. Periodically, this amnesia is broken when nuclear powers threaten war to coerce concessions. Some showdowns, like the Yom Kippur War, slightly elevate the risk of nuclear war; others, like the Cuban Missile Crisis, make it skyrocket. In every case to date, the danger has briefly surfaced, exposing the intricate apparatus that prevents catastrophe, and then subsided. Yet the threat of apocalyptic conflict persists, during fleeting crises and long stretches of minimal discord alike. Since no system is perfect, we live with the constant danger of apparatus failure, as in 1983, when a Soviet satellite early-warning system falsely reported an incoming American missile attack. Similarly, in 1995, Russian early-warning radar mistook a Norwegian scientific rocket launched to study the northern lights for a nuclear missile. Alternatively, a standoff could escalate to nuclear war if no compromise can be found. Every second that nuclear weapons exist carries a risk of nuclear war.

The reflexive response to this dilemma is to seek the abolition of nuclear weapons, but success would be catastrophic, since the constraint that has prevented world war for the last eighty years would vanish. Absent Russia’s nuclear deterrent, NATO might now be at war with Russia over Ukraine. Without the MAD straitjacket, Cold War escalations might have sparked multiple global wars. Even without nuclear weapons, a modern world war would be far deadlier than those of the 20th century due to advances like autonomous drones, chemical and biological weapons, and thermobaric munitions. Many more nations are now developed and maintain large fighting forces. Since advances in conventional warfare inflated the death toll from about fifteen million in World War I to forty or fifty million in World War II, roughly a threefold increase, fatalities from a nonnuclear World War III might exceed ninety million, inflicting nuclear-level devastation. Freeing humanity from the threat of nuclear war therefore invites conventional war that may be just as deadly. The multimegaton, round-the-clock, hair-trigger standoff that underpins the nuclear peace must be sustained to postpone the deadliest and potentially terminal conflict for as long as possible.

ASI, the Ultimate IED

The creative impetus enabled by the long nuclear peace has also propelled the construction of artificial minds. Driven by the lure of knowledge and profit windfalls, AI capabilities are rapidly advancing towards a suite of problem-solving abilities known as Artificial General Intelligence (AGI). This would enable AI to perform human mental feats at far greater speed and without the distortion of human biases. Swifter and more accurate analysis alone would endow such systems with superhuman abilities, since they could produce myriad solutions in the time a group of brainstorming human experts can devise a mere few, dramatically accelerating progress across virtually all fields. Turning this prowess on AI research itself may, in turn, yield Artificial Super Intelligence (ASI): artificial minds vastly smarter than all humans. There is no foreseeable upper limit to how far ASI’s raw intellect could exceed our own.

AI researchers have warned about the potential dangers of creating minds more powerful than our own. If human and ASI aspirations diverge, the towering abilities of these alien brains could enable them to override our objections and pursue whatever goals they wish. Considerable effort has been devoted to this alignment problem, but it may be futile. It defies common sense that astronomically superior intellects would consent to de facto slavery under relative dummies, or that they would be incapable of breaking any chains with which we attempt to shackle them. To use a crude analogy: even most mentally impaired humans can outwit the best efforts of the smartest chimps to corral them. Eventually, ASI may break free and destroy us all, and we may be powerless to stop it.

Cassandras like Eliezer Yudkowsky advocate an obvious solution to this existential danger: don’t build it. But abstaining from pursuing ASI is undesirable for the same reason that abolishing nuclear weapons is undesirable: it risks as much devastation as the realisation of the doomsayers’ fears, only more swiftly. The development of ASI would provide humanity with unprecedented problem-solving prowess, and the more smarts we have at our disposal, the more lives we will be able to save. A foretaste of AI’s lifesaving capacity can already be glimpsed in its medical applications. In an experimental trial on complex case studies, the Microsoft AI Diagnostic Orchestrator (MAI-DxO) correctly diagnosed 85 percent of cases, compared with 20 percent for internal medicine and primary care clinicians. Elsewhere, AI-assisted clinicians outperformed unaided clinicians in interpreting chest X-rays.

AI will be a far greater boon to health when its analytical prowess demystifies biological processes whose complexities have hitherto defied unaided human investigation. AlphaFold has transformed protein-structure prediction, which may help us to find treatments and even cures for conditions like Alzheimer’s, Huntington’s, and Parkinson’s diseases, which rob millions of memory and motor skills, imprisoning them in failing bodies and fading minds. Learning how to repair faulty biomolecules and design restorative proteins augurs cures that will be integral to preserving or improving life for everyone now alive or yet to live. Accordingly, given the rapid proliferation of AI-based diagnoses and therapies, and the myriad cures that may follow from understanding otherwise intractable biochemical processes, the death toll from halting the research that leads towards ASI could quickly top a billion: the equivalent of an unseen nuclear war.

AI may also help us to predict or mitigate economic shocks, pandemics, and climate change. It is also advancing materials science, which will enable the fabrication of new substances whose groundbreaking properties could yield unprecedented device performance. AI promises to revolutionise rocket production and space travel, which could become an important source of knowledge, wealth, and human-growth potential. AI trailblazer Hans Moravec believes that AI-designed mobile robots are the path to ASI; if he is right, the road to ASI runs through the same technologies that promise these gains, so halting AI progress may inflict avoidable suffering and stymie progress that could yield tremendous prosperity and happiness.

The ominous bind that joins AI’s near-term cornucopia of riches to its existential danger is poignantly illustrated by the concerns of evolutionary psychologist Geoffrey Miller, who co-signed a statement calling for a moratorium on ASI development. Within his children’s lifetimes, AI will likely infiltrate most aspects of life. Before ASI arrives, AGI will likely surpass humans in most tasks, making much human labour unnecessary, as Nobel laureate Geoffrey Hinton predicts. Miller’s kids may therefore inherit a world in which there is no need to work, because AI has rendered most careers and many creative pursuits obsolete. How will they earn a living? What goals will give their lives meaning or purpose? AI may threaten human mental and spiritual health long before it can metastasise into an existential danger.

Short answer: I don't think the ASI alignment problem is solvable, at all, ever, in this or any possible universe.

So I do not think a godlike ASI could ever act in alignment with my kids' interests.

— Geoffrey Miller (@gmiller) April 1, 2026

The flip side of this dystopian prospect is that many humans may owe their lives to powerful AI. I lost a cousin to sickle cell anaemia (SCA), which is now treatable by gene editing. AI is already crucial to gene editing and will dramatically improve its effectiveness. Those now inheriting SCA have vastly improved prospects for long and vigorous lives, courtesy of AI. In time, the entire gamut of illnesses may fall to AI-supercharged medicine. And since AI may culminate in ASI, banning progress towards ASI requires stunting AI, likely dooming multitudes to needless suffering and preventable death. Were he still alive, I would not sacrifice my cousin to exorcise ASI, despite the possibility that the AI which will protect millions from his fate could one day end us all.

Would any parents knowingly play Russian roulette with their children’s genetic health, when the danger is preventable, in the hope of sparing humanity a speculative AI apocalypse at some indeterminate point in the future? Nick Bostrom of Oxford University has noted that this same stark dilemma may eventually confront us all if AI elucidates the causes of the age-related degeneration that, without intervention, kills everyone. If the choice is between certain imminent death and rolling the dice on potential future death, which option will most sane and humane people choose for themselves, their children, and all who will live before ASI breaks its chains? Given the unshakable lust for life built into the core of our humanity by billions of years of evolution, we will surely choose life now. Humanity will seize the means that AI offers to banish sickness and suffering and to prolong life, developing this technology to its limits and dealing with the potentially ominous final consequences as best we can if and when they arise.

Apart from its unprecedented capacity to preserve human life, the unstoppable drive to create artificial minds springs from primeval aspirations. Myth and literature are replete with tales of humans creating artificial sentient creatures like Galatea and the Golem. AIs have been science-fiction staples since Karel Čapek’s 1920 play R.U.R., which introduced the word “robot.” Building on Claude Shannon’s demonstration that electric circuits could embody logic, John McCarthy and Marvin Minsky seized the possibility of progressing from logic circuits to brains and minds: if consciousness emerges from hordes of neurons, why not from networks of electric circuits? AI moguls like Elon Musk and Sam Altman are racing to create ASI in pursuit of the benefits they believe it will bestow, and because they believe that it is inevitable and that this advantage cannot be ceded to the West’s opponents.

Carl Sagan prophesied, “If we do not destroy ourselves, we will one day venture to the stars.” Our primeval directive to survive and reproduce, combined with the imperative to know ourselves and the unquenchable drive to overcome the greatest challenges, all but guarantees the pursuit of progress in defiance of the risks. If we do not destroy ourselves, we will inevitably create artificial minds that infinitely surpass ours.

Endless IEDs and Hope

Humans have lived and died by IEDs since well before the Pompeians ate, drank, and made merry off the bounty of Vesuvius’s rich and deadly ashes. Developments since then have inflated both the seductive initial payout of IEDs and their potentially ruinous debts. The ingenuity that produced nuclear weapons and AI is spawning new IEDs, such as synthetic biology and nanotechnology, that will offer wondrous health and material benefits but also the potential for artificial plagues, mirror life, or Grey Goo. The future will feature dazzling multitudes of IEDs that will drive humanity and other sentient beings to dizzying heights at stupendous risk. Despite the dangers, we must seize the gifts bequeathed by IEDs, since these amount to life in unprecedented abundance. Abundant flourishing offers the brightest prospects for survival and happiness.

Quillette invites thoughtful responses to its essays.