Science Fiction

Utilitarianism's Missing Dimensions


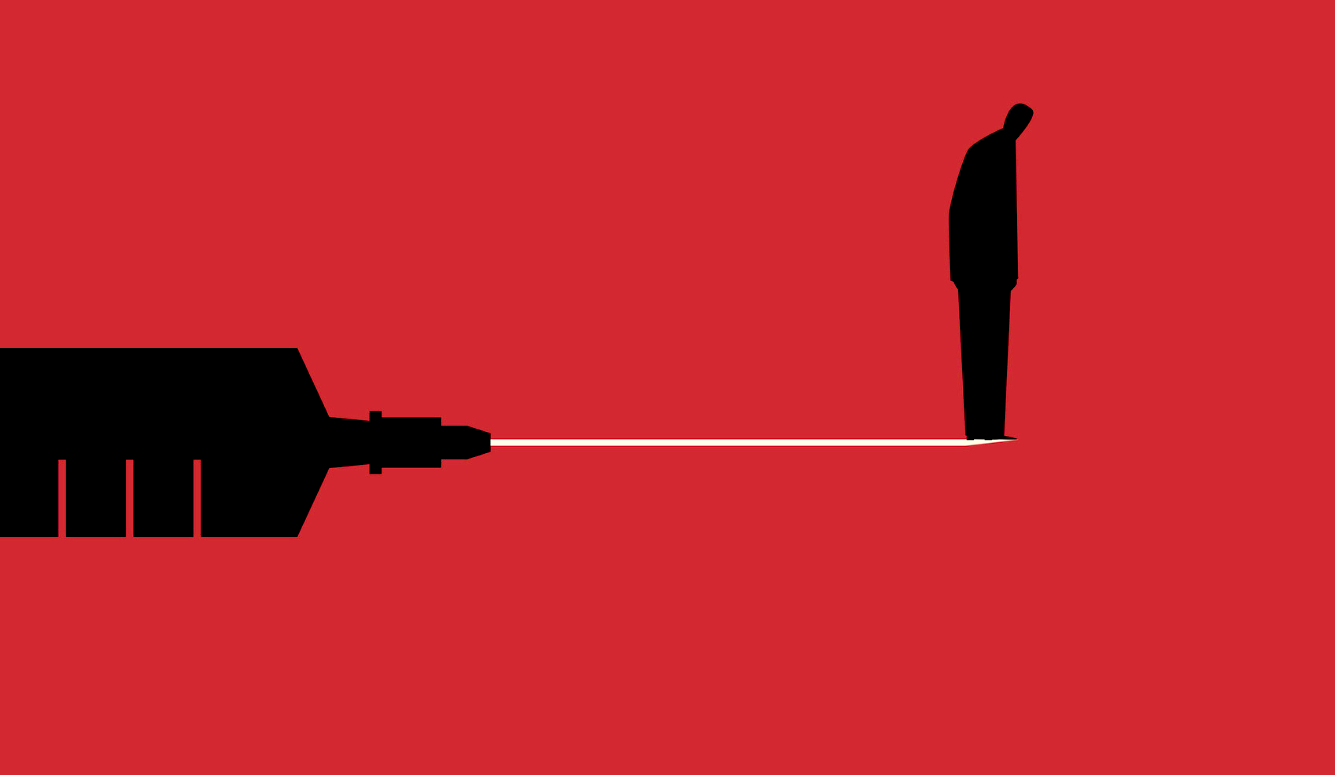

In 2001, Joshua Greene and colleagues published a report in Science that helped turn a once-obscure philosophical conundrum involving trolleys into a topic of conversation at scientific conferences, philosophical meetings, and dinner tables across the globe. The report used fMRI technology to probe what is going on in the brains of research subjects when they are faced with hypothetical ethical dilemmas represented by two classic scenarios. In one, subjects are asked if they would be willing to pull a lever to divert a trolley onto a track on which one person is standing, if doing so would prevent the death of five people standing on the track of the trolley’s current trajectory. In scenarios like this one, where there is no direct physical contact between the person taking the action and the person being sacrificed, most subjects say it would be ethically appropriate to sacrifice one to save five. In the second scenario, subjects are asked if it would be appropriate to push a strange man off a footbridge onto a track, if his death would stop a trolley hurtling toward five other people. Famously, it turns out that in scenarios like this one, where there is direct physical contact between the person taking the action and the person being sacrificed, most subjects say it would not be appropriate to push the strange man off the footbridge to prevent the death of the five.

According to the ethical theory of utilitarianism, refusing to push the strange man is unethical. In both scenarios, the ethical action is to sacrifice one to save five. Based on what Greene and his colleagues observed when subjects were faced with the two sorts of scenarios in an fMRI machine, they concluded that activity in the emotional part of the brain was getting in the way of most subjects making the right utilitarian judgment. Pushing the strange man engaged emotions in a way that pulling the lever did not. After the publication of that report, sacrificial dilemmas of this sort started to get so much attention that it began to seem that a willingness to do harm to others was the core of utilitarianism.

It is that picture of utilitarianism, with a willingness to do harm at its core, that a major new paper seeks to correct. The paper, “Beyond Sacrificial Harm: A Two-Dimensional Model of Utilitarian Psychology,” appears in Psychological Review. Its authors (Guy Kahane, Jim A. C. Everett, Brian D. Earp, Lucius Caviola, Nadira S. Faber, Molly J. Crockett, and Julian Savulescu) are philosophers and psychologists who are all sympathetic to utilitarianism. They succeed marvelously in revealing how woefully incomplete a picture of utilitarianism is when it so prominently features the willingness to do harm. But in giving a more complete picture of utilitarianism, they inadvertently remind us, I will argue, of why utilitarianism alone cannot provide anything like a complete picture of human well-being.

The authors of the new paper are now, or have recently been, at Oxford. Joshua Greene, the lead author of the 2001 paper mentioned above and the figure most closely associated with the picture of utilitarianism that the new paper aims to correct, is now at Harvard. For the purposes of this discussion, I will refer to those who seek to set the record straight as “the Oxfordians” and to those who have created the impression of utilitarianism that needs correcting as “the Harvardians.”

Missing the “Impartial Beneficence” Dimension of Utilitarianism

In short, the Oxfordians argue that, whereas utilitarianism in fact has two key dimensions, the Harvardians have been calling attention to only one. A significant portion of the new paper is devoted to explicating a new scale they have created, the Oxford Utilitarianism Scale, which can be used to measure how utilitarian someone is or, more precisely, how closely a person’s moral decision-making tendencies approximate classical (act) utilitarianism. The measure is based on how much one agrees with statements such as, “If the only way to save another person’s life during an emergency is to sacrifice one’s own leg, then one is morally required to make this sacrifice,” and “It is morally right to harm an innocent person if harming them is a necessary means to helping several other innocent people.”

According to the Oxfordians, while utilitarianism is a unified theory, its two dimensions push in opposite directions. The first, positive dimension of utilitarianism is “impartial beneficence.” It demands that human beings adopt “the point of view of the universe,” from which none of us is worth more than another. This dimension of utilitarianism requires self-sacrifice. Once we see that children on the other side of the planet are no less valuable than our own, we grasp our obligation to sacrifice for those others as we would for our own. Those of us who have more than we need to flourish have an obligation to give up some small part of our abundance to promote the well-being of those who don’t have what they need.

The Oxfordians dub the second, negative dimension of utilitarianism “instrumental harm,” because it demands that we be willing to sacrifice some innocent others if doing so is necessary to promote the greater good. So, we should be willing to sacrifice the well-being of one person if, in exchange, we can secure the well-being of a larger number of others. This is of course where the trolleys come in. We should (granting certain stipulations and background conditions that may or may not be all that plausible in real life) be willing to push a strange man off a footbridge to stop a trolley barreling down the tracks, if doing so would save the lives of five others who would otherwise be killed. It is this second, negative dimension to which, on the Oxfordian account, the Harvardians have been calling too much attention.

The Harvardians fully recognize that the negative dimension runs counter to most people’s intuitions, but on their view, those intuitions are precisely the problem. Most people rely on intuition or emotion when it comes to morality, and moralities rooted in intuition and emotion developed tens of thousands of years ago, when we lived in small tribes and needed to defend ourselves against other tribes competing for scarce resources. There is a potentially catastrophic misfit between the emotion-based tribal morality we developed in the distant past and the reason-based cosmopolitan morality we need today. Once upon a time, a refusal to harm those who are close to us served us well, but it no longer does.

Since the publication of Greene’s 2001 report in Science, he and other Harvardians (such as Steven Pinker and Paul Bloom—who is at Yale) have been building the case that, whereas people who fail to reach the right utilitarian judgments are relying on the ancient, “emotional part” of their brains, people who succeed in reaching the right judgments rely on the more recent, “reasoning part.” The Harvardians have been creating a rather flattering picture of utilitarians, which depicts them as the few who can discern what reason dictates to be ethical.

But within a handful of years after the report in Science, new fMRI studies began to appear that had far less flattering ramifications. It turned out that the fMRI profile of people who reached what Greene and his collaborators were labeling “utilitarian judgments” resembled in some ways the fMRI profiles of people who exhibited clinical and sub-clinical psychopathy. That is, the fMRI profiles of the utilitarians bore an unflattering resemblance to the fMRI profiles of people who exhibited antisocial tendencies and a reduced concern about harm to others.

So, by articulating two dimensions of utilitarianism in their new paper, the Oxfordians are able to show why that unflattering resemblance is misleading. Yes, with regard to the second, negative dimension, the brains, or at least the mental tendencies, of psychopaths and utilitarians might in some ways resemble each other. But, the Oxfordians cogently argue, utilitarianism is far more than a willingness to do harm to individuals. It is also, and far more importantly, a capacity to be motivated by what they call “empathic concern” for all. It is a capacity, grounded in a commitment to impartiality, to benefit others regardless of their proximity to us in space or time. Psychopaths might be happy to sacrifice others, but they show nothing like a commitment to the sort of “impartial beneficence” that the Oxfordians say is the true core of utilitarianism.

The Oxfordians do not merely paint a more flattering and complete picture of what utilitarianism is. They also offer a more realistic picture of how human beings come to endorse one or more dimensions of any ethical theory. Unlike the Harvardians, who suggest that it is utilitarians’ unusually large rational endowment that enables them to endorse the dimension of utilitarianism that requires doing harm to others, the Oxfordians allow that how much any individual endorses one dimension or the other is at least partly a function of emotion or affect. As they put it, their findings suggest that both dimensions of utilitarianism “are largely driven by affective dispositions rather than explicit reasoning.”

Indeed, in this paper, the Oxfordians begin to sound a lot like Jonathan Haidt, who has long argued that it isn’t just religious kooks and deontological philosophers whose ethical views are infused with affect. The Oxfordians write: “It is likely that both attraction to, and rejection of, explicit ethical theories is driven, at least in part, by individual differences in … pre-theoretical moral tendencies.” This explicit recognition that affect plays an important role in determining which dimension of utilitarianism one embraces (or, for that matter, which ethical theory one embraces) is a radical and welcome departure from the picture that the Harvardians have been painting.

Missing the “Partiality” Dimension of Well-Being

In addition to giving a more complete picture of what utilitarianism actually entails, the Oxfordians also help solve what can look like the great puzzle represented by our most famous living utilitarian, Peter Singer. Far more than most of us, Singer sacrifices some of his own well-being to increase the total amount of well-being in the world. As a father of animal liberation and a champion of vegetarianism, he gives up the pleasure of eating animals. As a father of effective altruism, he gives at least 10% of his annual income to those who are less fortunate than he is. He is plainly full of empathic concern, and extraordinarily high on the first dimension of utilitarianism.

And he is equally high on the second dimension, which demands a willingness to sacrifice some human beings for the sake of what he understands to be the maximization of the well-being of a greater number. In theory, he is eager to endorse the rightness of pushing the strange man off the footbridge. And in the real world, he argues, eagerly or at least without apparent discomfort, for the option to euthanize infants with profound cognitive disabilities, insofar as doing so would increase overall well-being.

So what can seem contradictory—an abundance of empathy cheek-by-jowl with the appearance of its lack—comes into view as a sign of his consistency. Along the positive and negative dimensions, he is doing what is required to increase aggregate well-being, as he understands well-being.

But in showing that the contradiction is only apparent, the Oxfordians inadvertently draw our attention to what utilitarianism leaves out of its account of well-being. From what Singer takes to be the point of view of the universe, babies with profound cognitive disabilities lack the self-awareness (the sense of being a subject with a past and a future) that he thinks is necessary to be a person. He is of course aware that many parents take those babies to be persons. He is aware that many parents fall deeply in love with their children with such disabilities, and that many of them say that being in relationship with those children is one of the great gifts of their lives. Singer’s response, however, appears to be that those parents are in some sense making a mistake. They think their child with profound cognitive disabilities is a person, but in reality “it” is not. On his account, emotion clouds what reason makes crystal clear.

His response, however, fails to take sufficiently seriously that love is one component of human well-being, and that, contrary to his deepest intuitions, love can grow in places that seem impossible to him. We have, after all, evolved the capacity to love human beings who are close to us, even when they lack the cognitive capacities that he thinks must be in place for someone to be a person. This capacity for what we might call “partiality” is not a defect in the systems that we are. It is a constitutive feature. It is not parents who make a mistake when they love their children regardless of those children’s cognitive capacities. Nor are parents making a mistake when they love their own children more than other people’s children. It is utilitarians who make a mistake when they fail to take sufficient account of the fact that such partiality can be an essential component of human well-being.