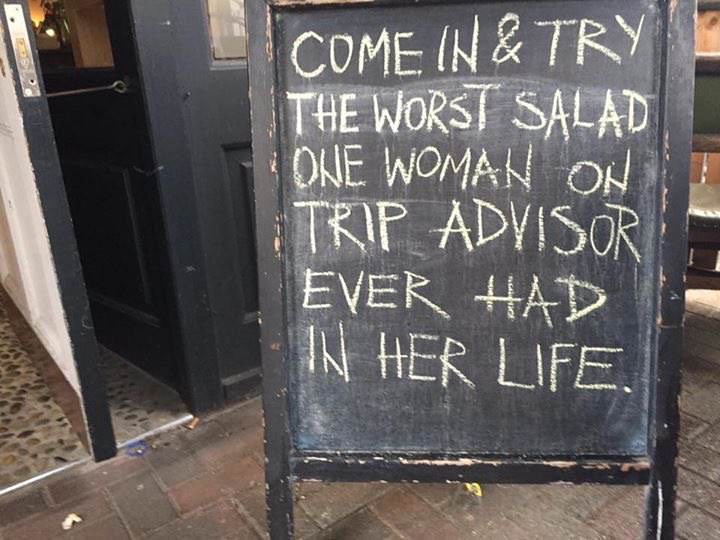

Five Stars or Nothing

Ratings and written consumer reviews matter: in markets, they alleviate information asymmetry between buyers and sellers.
